In the evolving landscape of artificial intelligence, multimodal large language models (LLMs) demand datasets that go beyond text, incorporating images, videos, and structured data to unlock true versatility. Yet securing these premium datasets amid rising concerns over provenance and privacy has become paramount. Blockchain technology emerges not as a buzzword but as a strategic bulwark, providing onchain-verified datasets that fuel fine-tuning without compromise. Platforms like FineTuneMarket.com are at the vanguard, enabling creators to monetize vision-language datasets with perpetual royalties, all settled through onchain payments.

Navigating Data Scarcity in Multimodal Fine-Tuning

Fine-tuning LLMs for multimodal tasks reveals stark realities: resource intensity and data quality gaps. Consider the Kaggle dataset for crypto and blockchain, offering 804 curated Q&A pairs: a solid start, but text-only and therefore limited in scope for vision-language integration. Similarly, efforts like fine-tuning IDEFICS-9B on A100 GPUs highlight computational hurdles, while SingleStore’s work with unstructured data underscores the power of images and videos in boosting model efficacy. Challenges persist, from non-IID data distributions in healthcare to architectural design processes blending generative AI and blockchain, as noted in recent MDPI studies.

These pain points are not mere technical footnotes; they represent macroeconomic shifts in AI development. As datasets become the new oil, their scarcity drives up value, much like commodities in inflationary cycles. Strategic players recognize that multimodal fine-tune data with verifiable origins will command premiums, fostering ecosystems where quality trumps volume.

Blockchain as the Keystone for Dataset Provenance

Blockchain’s immutable ledger moves dataset provenance from aspirational to operational. Surveys on arXiv detail how it bolsters LLM security and safety, mitigating adversarial risks through decentralized verification. PrivyChain by PixelX exemplifies this, linking AI developers to rights-cleared private datasets via onchain licensing, preserving privacy and ownership. No longer do we rely on opaque intermediaries; instead, smart contracts enforce usage rights, enabling perpetual royalties for creators.
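To make the tamper-evidence idea concrete, here is a minimal sketch of a hash-linked provenance log. It is an illustration under stated assumptions, not how PrivyChain or any particular chain implements it: the names `ProvenanceRecord`, `append_record`, `head_hash`, and `verify_chain` are hypothetical, and the "anchored head" stands in for whatever digest a real system would write onchain.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ProvenanceRecord:
    """One entry in a hypothetical provenance log for a dataset."""
    dataset_digest: str  # SHA-256 of the dataset bytes at this version
    note: str            # human-readable description of the change
    prev_hash: str       # hash of the previous record ("" for the first)

    def record_hash(self) -> str:
        # Serialize deterministically so the same record always hashes the same.
        payload = json.dumps(
            {
                "dataset_digest": self.dataset_digest,
                "note": self.note,
                "prev_hash": self.prev_hash,
            },
            sort_keys=True,
        ).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


def append_record(chain: List[ProvenanceRecord], data: bytes, note: str) -> List[ProvenanceRecord]:
    """Return a new chain with a record for `data` linked to the current head."""
    prev_hash = chain[-1].record_hash() if chain else ""
    record = ProvenanceRecord(hashlib.sha256(data).hexdigest(), note, prev_hash)
    return chain + [record]


def head_hash(chain: List[ProvenanceRecord]) -> str:
    """Digest of the latest record; assumed to be the value anchored onchain."""
    return chain[-1].record_hash() if chain else ""


def verify_chain(chain: List[ProvenanceRecord], anchored_head: str) -> bool:
    """Check every link, then compare the recomputed head to the anchored one."""
    expected_prev = ""
    for record in chain:
        if record.prev_hash != expected_prev:
            return False
        expected_prev = record.record_hash()
    return expected_prev == anchored_head
```

Because each record commits to the one before it, editing any earlier entry changes every later hash, and the mismatch against the anchored head exposes the change. Only that single head digest would need to live onchain; the records themselves could stay offchain.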

Strategic Advantages

- Immutable provenance tracking: Blockchain ledgers ensure datasets’ origins and modifications are tamper-proof, fostering trust in multimodal LLM fine-tuning, as seen in PrivyChain by PixelX.
- Privacy-preserving access via ZKPs: Zero-knowledge proofs enable secure data verification without exposure, exemplified in the FLock framework’s decentralized fine-tuning (arXiv 2507.15349).
- Economic incentives for sharing: Token rewards encourage premium data contributions, like FELT Labs using Ocean Protocol on Polygon.
- Reduced attack surfaces: Decentralized setups minimize single points of failure in collaborative fine-tuning, as in FLock’s reduction of adversarial risks and in healthcare federated LoRA (arXiv 2510.00543).
- Royalties for ecosystem growth: Smart contracts automate ongoing royalties, sustaining contributions as in PrivyChain’s on-chain licensing marketplace.

This approach aligns with my long-term vision: as AI matures, data assets that appreciate over time eclipse short-term hype. Decentralized marketplaces, as envisioned in ResearchGate papers and LinkedIn discussions, eliminate third-party trust, paving the way for robust LLM fine-tuning on blockchain-secured datasets.

Pioneering Frameworks Reshaping Collaborative Learning

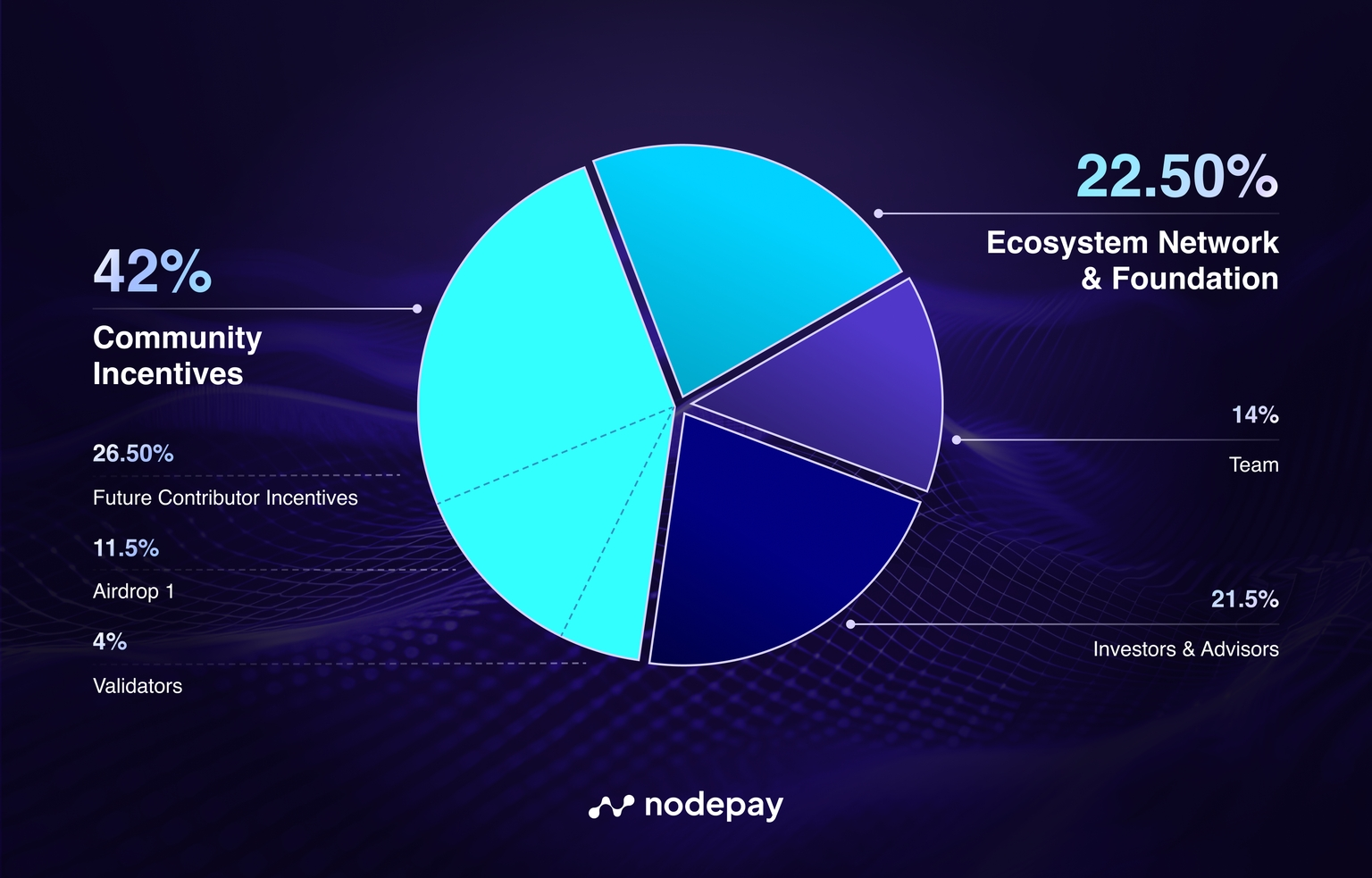

Recent breakthroughs cement blockchain’s role. The FLock framework, launched in July 2025, decentralizes fine-tuning for 70-billion parameter LLMs, slashing adversarial vulnerabilities and enhancing generalization via economic incentives. No central aggregator means fewer weak points, a strategic masterstroke validated empirically.

Federated approaches in healthcare, using LoRA and blockchain identities, tackle non-IID data across institutions, yielding fairer outcomes and stable convergence. FELT Labs leverages Ocean Protocol on Polygon for infrastructure-free fine-tuning on private data, while H2O.ai’s LLM Data Studio streamlines preparation for RLHF and beyond. These tools converge on a truth: blockchain-secured datasets are indispensable for multimodal prowess, from 3D printing benchmarks to architectural innovation.
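For readers who want a feel for the aggregation step in federated LoRA, the sketch below uses plain sample-weighted federated averaging (FedAvg) over adapter parameters. That is a swapped-in textbook technique, not the aggregation rule of the healthcare work or of FLock, whose details are not given here. The `Adapter` type, the `federated_average` function, and the flat-list parameter layout are all hypothetical simplifications.

```python
from typing import Dict, List, Tuple

# Hypothetical layout: adapter name -> flattened parameter values.
Adapter = Dict[str, List[float]]


def federated_average(updates: List[Tuple[Adapter, int]]) -> Adapter:
    """Sample-weighted average of per-institution adapter parameters.

    Each update is (adapter, num_samples). Weighting by sample count is the
    standard FedAvg choice; it does not by itself correct for non-IID data.
    """
    total = sum(num_samples for _, num_samples in updates)
    if total <= 0:
        raise ValueError("need at least one update with a positive sample count")

    reference = updates[0][0]
    averaged: Adapter = {}
    for name, values in reference.items():
        accumulator = [0.0] * len(values)
        for adapter, num_samples in updates:
            weight = num_samples / total
            for i, value in enumerate(adapter[name]):
                accumulator[i] += weight * value
        averaged[name] = accumulator
    return averaged
```

One caveat worth stating: averaging the two LoRA factor matrices separately is not the same as averaging their product, so a real system may aggregate differently. The sketch only shows why institutions with more data pull the shared adapter harder, which is exactly where non-IID distributions start to matter.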

Yet, as we stand in early 2026, the marketplace for these assets remains fragmented. FineTuneMarket.com bridges this, offering discovery and transactions optimized for developers and enterprises, where onchain-verified datasets drive superior model performance.

Enterprises eyeing multimodal LLMs must pivot toward platforms that treat datasets as appreciating assets. FineTuneMarket.com stands out by streamlining discovery of vision-language datasets, with onchain payments that settle instantly and across borders. Creators embed royalties into every fine-tune, creating passive income streams reminiscent of fixed-income securities in uncertain markets.

Royalties as the New Inflation Hedge in AI Data Economies

From my vantage as a commodities veteran, datasets mirror rare earth elements: finite supply meets surging demand. Blockchain enforces scarcity through provenance, turning one-off sales into perpetual yields. Imagine curating a niche multimodal fine-tuning dataset for blockchain analysis; each subsequent use pays dividends, hedging against AI commoditization. This model disrupts traditional data brokers, who hoard without sharing value downstream. Instead, smart contracts automate fairness, aligning incentives across the supply chain.
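The arithmetic behind per-use royalties is simple enough to sketch. The example below is an offchain Python model of the bookkeeping a royalty contract might perform, not the contract logic of FineTuneMarket or any other platform. The `RoyaltyLedger` class, the creator/platform split, and the 85% rate are assumptions chosen for illustration; amounts are integers in the token's smallest unit, as is conventional onchain.

```python
from dataclasses import dataclass

BPS_DENOMINATOR = 10_000  # 100% expressed in basis points


@dataclass
class RoyaltyLedger:
    """Hypothetical per-dataset ledger splitting each usage fee."""
    royalty_bps: int           # creator's share of every fee, in basis points
    creator_balance: int = 0   # accumulated creator earnings
    platform_balance: int = 0  # remainder kept by the (assumed) platform

    def __post_init__(self) -> None:
        if not 0 <= self.royalty_bps <= BPS_DENOMINATOR:
            raise ValueError("royalty_bps must be between 0 and 10000")

    def record_use(self, fee: int) -> int:
        """Split one usage fee and return the creator's cut."""
        if fee < 0:
            raise ValueError("fee must be non-negative")
        # Integer floor division mirrors onchain math; rounding dust goes
        # to the platform side so the two balances always sum to the fees.
        creator_cut = fee * self.royalty_bps // BPS_DENOMINATOR
        self.creator_balance += creator_cut
        self.platform_balance += fee - creator_cut
        return creator_cut


# Usage: three fine-tuning runs, each paying the same fee.
ledger = RoyaltyLedger(royalty_bps=8_500)
for _ in range(3):
    ledger.record_use(1_000_000)
```

The point of the sketch is the shape of the cash flow: earnings grow with the number of uses rather than stopping at the first sale, which is what separates a royalty from a flat license fee.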

Strategic adoption here trumps speculative frenzy. Platforms integrating zero-knowledge proofs, as in PrivyChain, allow selective disclosure, vital for sensitive sectors like healthcare or finance. Developers fine-tuning on verified crypto Q&A pairs or 3D printing benchmarks gain not just accuracy but audit trails that regulators crave. Short-term noise around compute costs fades; over the long term, these onchain-verified datasets compound a model’s edge.

Overcoming Fragmentation: A Unified Marketplace Vision

The current patchwork of arXiv surveys, Kaggle repos, and niche tools like SingleStore yields silos, stifling innovation. FineTuneMarket.com unifies this, optimizing for machine learning engineers who juggle vision-language tasks. Picture sourcing rights-cleared images paired with blockchain explainers, all tokenized for frictionless licensing. This isn’t incremental; it’s a phase shift, akin to how pension funds diversified into alternatives during low-yield eras.

Traditional Dataset Licensing vs. Onchain Royalties on FineTuneMarket

| Aspect | Traditional Licensing | FineTuneMarket Onchain Royalties |
|---|---|---|
| Creator Earnings 💰 | One-time flat fees or low royalties 📉❌ | Ongoing royalties per use, scaling with popularity 📈✨ |
| Developer Access 🔓 | Complex contracts, NDAs, manual payments & approvals 😩📜 | Frictionless instant purchase & access via blockchain ⚡🟢 |
| Vision-Language Datasets 🎨🤖 | Licensing hurdles for multimodal (images/text) data 🎥📄🚫 | Seamless royalties for premium vision-language fine-tuning 👁️💸 |
| Data Security & Privacy 🛡️ | Centralized, vulnerable to breaches & provenance issues 👻 | Blockchain-secured ownership & privacy-preserving licensing ⛓️🕶️ |
| Monetization Potential 🚀 | Limited by upfront sales 📊 | Higher earnings from collaborative LLM fine-tuning (e.g., FLock-inspired) 🌟📊 |

Challenges linger, sure. Resource demands for IDEFICS-9B scale-ups persist, and non-IID data vexes federated setups. Yet blockchain frameworks like FLock prove scalable, fine-tuning massive models collaboratively without single points of failure. H2O.ai’s workbench complements this, prepping unstructured multimodal inputs for blockchain-secured pipelines. The result? Models that generalize across domains, from architectural sketches to crypto analytics.

For researchers and enterprises, the calculus is clear: invest in provenance-verified datasets today to dominate tomorrow’s inference economy. As macroeconomic cycles favor hard assets, tokenized datasets offer yield without custody risks. FineTuneMarket.com positions users at this inflection, where strategic dataset curation yields outsized returns. The ecosystem matures not through hype, but through verifiable value chains that reward foresight.

Stakeholders from startups to funds should scout these marketplaces now. The fusion of blockchain and multimodal fine-tuning isn’t a trend; it’s infrastructure for AI’s next decade, where data sovereignty drives competitive moats.