Listen up, AI hustlers and crypto degens: fine-tuning Llama 3.1 isn’t some casual side project anymore. It’s your ticket to the next wave of LLMs fine-tuned through blockchain marketplaces, where premium datasets sharpen your models on everything from smart contracts to DeFi predictions. Forget the junk floating around Hugging Face; the real alpha in 2026 is in premium Llama datasets sold for onchain payments on platforms like FineTuneMarket.com. These bad boys come with perpetual royalties for creators, meaning you’re not just buying data, you’re fueling an ecosystem that keeps paying out. High risk, high reward, just like riding Bitcoin’s volatility.

Blockchain Marketplaces: The Only Game for Serious Fine-Tuners

Why waste time with free datasets that are contaminated garbage? Traditional sources are a minefield of biases and low-quality scraps. Enter blockchain marketplaces: secure, instant onchain payments via crypto, no banks screwing you over. Dataset creators earn perpetual royalties every time someone fine-tunes with their data. FineTuneMarket.com leads the pack, optimized for Llama 3.1 workflows. Grab a dataset, pay with ETH or whatever’s pumping, and boom, your model evolves. I’ve traded through Bitcoin’s wild swings since 2012; this is the same momentum play but for AI datasets.

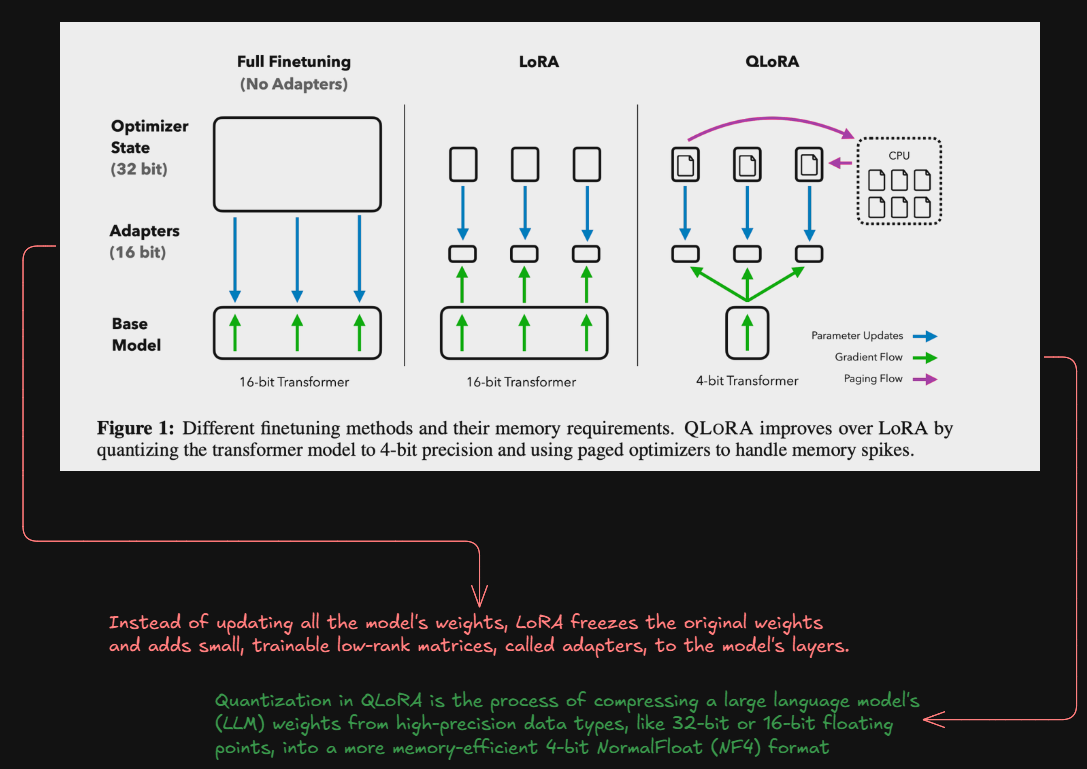

Meta dropped Llama 3.1 as their beastliest open model yet, crushing benchmarks across 150+ datasets. But raw power means squat without killer fine-tuning data. Tools like LoRA, QLoRA, and Unsloth make it ultra-efficient, especially on RunPod’s A100s or SageMaker JumpStart. Now imagine that firepower aimed at blockchain tasks like transaction parsing and onchain analytics. That’s where these marketplaces shine, curating gold-standard datasets purpose-built for fine-tuning Llama 3.1.
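To see why LoRA gets called ultra-efficient, it helps to run the arithmetic. The sketch below is only an illustration: the 4096 x 4096 layer shape and rank 16 are assumptions picked for the example, not settings from any particular recipe. LoRA freezes the original weight matrix and trains two small matrices, B (d_out x r) and A (r x d_in), so the adapter adds r * (d_out + d_in) parameters per adapted layer.

```python
def lora_adapter_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable parameters in one LoRA adapter: B is d_out x rank, A is rank x d_in."""
    return rank * (d_out + d_in)


def dense_params(d_out: int, d_in: int) -> int:
    """Parameters in the frozen dense weight the adapter sits beside."""
    return d_out * d_in


# Assumed values for illustration only.
D_MODEL = 4096  # hypothetical square projection, d_out == d_in
RANK = 16       # hypothetical LoRA rank

adapter = lora_adapter_params(D_MODEL, D_MODEL, RANK)
dense = dense_params(D_MODEL, D_MODEL)
ratio = adapter / dense

print(f"adapter params: {adapter:,}")
print(f"dense params:   {dense:,}")
print(f"trainable share of this layer: {ratio:.4%}")
```

Under these assumptions the adapter trains well under 1% of the layer's weights, which is the whole point: the base model stays frozen and only the small matrices get gradients.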

Unlocking Alpha: The 8 Premium Datasets Dominating FineTuneMarket

8 Killer Datasets for Llama 3.1

- teknium/OpenHermes-2.5: Epic 1M+ instruction pairs from GPT-4. Strength: Nails complex reasoning. Blockchain crush: Fine-tune for smart contract auditing bots that spot vulnerabilities like a hawk.
- HuggingFaceH4/ultrachat_200k: 200K ultra-clean chat turns. Strength: Killer multi-turn convos. Blockchain crush: Power onchain payment chatbots handling DeFi trades without hallucinating.
- databricks-dolly-15k: 15K human-curated gems. Strength: Diverse tasks, zero fluff. Blockchain crush: Train models to generate secure NFT marketplace listings onchain.
- Open-Orca/OpenOrca: Roughly 4M GPT-4 and GPT-3.5 augmented pairs. Strength: Explains reasoning like a boss. Blockchain crush: Decode blockchain txns and predict gas fees.
- nvidia/HelpSteer2: Roughly 21K preference-rated responses for steering. Strength: Super steerable, safe responses. Blockchain crush: Build compliant onchain payment advisors dodging regulatory pitfalls.
- mlabonne/Orca-Pro-Mistral-4k: Pro-level 4K long-context Orca. Strength: Crushes long-form reasoning. Blockchain crush: Analyze massive ledger histories for fraud in blockchain marketplaces.
- efe1903/NEW_TRAINING01: Fresh Llama 3.1-tuned instructions. Strength: Cutting-edge multilingual dialogue. Blockchain crush: Global DeFi query engines with onchain royalty streams.
- unsloth/FineTome: Ultra-high quality convos + reasoning. Strength: Efficient, diverse for fast fine-tune. Blockchain crush: Function-calling pros for automated onchain payments and trades.

These aren’t random picks; they’re battle-tested for supervised fine-tuning, instruction following, and reasoning that translates directly to blockchain smarts. Start with teknium/OpenHermes-2.5: a monster for multi-turn dialogues and complex queries. Perfect for training Llama 3.1 to handle DeFi user interactions or smart contract negotiations. Pair it with HuggingFaceH4’s ultrachat_200k, 200k rows of synthetic chats that boost multilingual capabilities, ideal for global onchain marketplaces.
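Before any multi-turn dataset hits a trainer, the turns usually need to be in role/content form. Here is a minimal sketch, assuming the rows follow the ShareGPT-style layout (a `conversations` list of `from`/`value` turns) that OpenHermes-2.5 ships with. The sample row and the helper names are made up for illustration.

```python
from typing import Dict, List

# ShareGPT speaker tags mapped to the role names chat templates expect.
ROLE_MAP = {"system": "system", "human": "user", "gpt": "assistant"}


def sharegpt_to_messages(record: Dict) -> List[Dict[str, str]]:
    """Convert one ShareGPT-style row into a list of role/content messages."""
    messages = []
    for turn in record["conversations"]:
        role = ROLE_MAP.get(turn["from"])
        if role is None:
            raise ValueError(f"unknown speaker tag: {turn['from']!r}")
        messages.append({"role": role, "content": turn["value"].strip()})
    return messages


def ends_on_assistant(messages: List[Dict[str, str]]) -> bool:
    """Rows that do not end on an assistant turn give the trainer nothing to learn from."""
    return bool(messages) and messages[-1]["role"] == "assistant"


# Hypothetical sample row, not taken from any real dataset.
sample = {
    "conversations": [
        {"from": "system", "value": "You review smart contracts."},
        {"from": "human", "value": "Is an unchecked external call risky?"},
        {"from": "gpt", "value": "Yes, it can open the door to reentrancy."},
    ]
}

messages = sharegpt_to_messages(sample)
print(messages)
print("usable for SFT:", ends_on_assistant(messages))
```

The resulting list is the shape most chat templates accept, so the same converter can sit in front of whichever fine-tuning stack you run.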

Don’t sleep on databricks-dolly-15k, the OG instruction dataset with human-generated tasks across categories. It’s your foundation for reliable fine-tuning, scaling Llama 3.1 to parse transaction histories without hallucinating. Then there’s Open-Orca/OpenOrca: built on FLAN tasks with GPT-4 annotations, it pushes reasoning to god-tier levels, handy for simulating oracle predictions or yield farming strategies.
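Dolly rows are single-turn, so they need a different treatment from chat logs. A rough sketch follows, assuming the published `instruction`, `context`, and `response` fields; the prompt layout and the sample row are illustrative choices, not a required template.

```python
from typing import Dict


def dolly_to_pair(record: Dict[str, str]) -> Dict[str, str]:
    """Turn one Dolly-style row into a prompt/completion pair.

    The optional context is appended under a 'Context:' label. That layout
    is an assumption for this sketch, not something the dataset mandates.
    """
    instruction = record["instruction"].strip()
    context = record.get("context", "").strip()
    prompt = instruction if not context else f"{instruction}\n\nContext:\n{context}"
    return {"prompt": prompt, "completion": record["response"].strip()}


# Hypothetical row for illustration.
row = {
    "instruction": "Summarize this transaction.",
    "context": "Wallet A sent 2 ETH to Wallet B.",
    "response": "Wallet A transferred 2 ETH to Wallet B.",
    "category": "summarization",
}

pair = dolly_to_pair(row)
print(pair["prompt"])
print("->", pair["completion"])
```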

NVIDIA’s HelpSteer2 brings preference optimization, steering your model toward helpful, harmless outputs while crushing benchmarks. Think fine-tuning for secure wallet interfaces or scam detection in blockchain trades. Meanwhile, mlabonne/Orca-Pro-Mistral-4k delivers long-context mastery, essential for analyzing massive onchain ledgers without losing the plot.

Why These Datasets Crush for Blockchain Llama 3.1 Builds

Ripping through Reddit’s r/LocalLLaMA or Hugging Face, you’ll see chatter on efe1903’s NEW_TRAINING01: fresh, high-quality SFT data tailored for Llama 3.1’s instruction-tuned variants (8B to 405B). It’s optimized for dialogue, outperforming vanilla models on multilingual tasks, straight fire for cross-chain payment flows. And unsloth/FineTome? Ultra-high-quality conversations, reasoning, and function calling. Fine-tune efficiently and watch your model reach roughly 90% of GPT-4-level quality on blockchain queries, per Together AI insights.

These datasets aren’t just plug-and-play; they’re your secret weapon for fine-tuning Llama 3.1 in the wild world of blockchain marketplaces. Stack them with FinLoRA from Open-Finance-Lab, which benchmarks LoRA methods on financial data, and your model starts predicting onchain flows like a pro trader spotting pumps. Synthetic boosts from PIKA’s expert-level alignments crank up data efficiency, perfect for crafting blockchain-specific models without drowning in noise.

Gear Up Your Rig: Tools Crushing Llama 3.1 Fine-Tunes

Grab Torchtune or hit SageMaker JumpStart for seamless workflows, fine-tuning those 8B to 405B beasts on premium data. Unsloth keeps it ultra-efficient, slashing VRAM needs while FineTome delivers function-calling mastery for smart contract sims. Skywork-Reward sharpens your eval game, ensuring your model nails reward modeling for secure onchain payments. I’ve momentum-traded DeFi since the early days; this setup turns Llama 3.1 into a blockchain oracle, parsing transactions faster than you can say ‘gas fees.’
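The VRAM savings are easy to sanity-check with back-of-envelope math. This sketch estimates weight memory only, using nominal parameter counts for the 8B, 70B, and 405B models and ideal bytes per parameter. It deliberately ignores quantization constants, activations, optimizer state, and the KV cache, so real numbers will run higher.

```python
# Ideal storage cost per parameter; 4-bit means half a byte per weight.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "4bit": 0.5}

# Nominal parameter counts, rounded to the marketing names.
MODEL_PARAMS = {"8B": 8e9, "70B": 70e9, "405B": 405e9}


def weight_memory_gb(n_params: float, precision: str) -> float:
    """Gigabytes (10^9 bytes) needed just to hold the weights."""
    return n_params * BYTES_PER_PARAM[precision] / 1e9


for name, n_params in MODEL_PARAMS.items():
    row = ", ".join(
        f"{precision}: {weight_memory_gb(n_params, precision):.1f} GB"
        for precision in BYTES_PER_PARAM
    )
    print(f"Llama 3.1 {name} weights -> {row}")
```

Even as a lower bound, the table makes the trade-off plain: 4-bit loading cuts weight memory to a quarter of fp16, which is what lets the smaller models fit on a single GPU while the 405B model still needs a multi-GPU node.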

Picture this: you snag teknium/OpenHermes-2.5 and nvidia/HelpSteer2 from FineTuneMarket, pay onchain, and fine-tune for scam-sniffing in NFT drops or yield optimizer bots. Dataset creators pocket perpetual royalties on every redeploy. No more Hugging Face roulette; this is curated alpha, the premium Llama datasets of 2026. Databricks-Dolly-15k grounds it in real tasks, Open-Orca amps reasoning for oracle feeds, Orca-Pro-Mistral-4k handles ledger deep dives.

Real-World Gains: From Fine-Tune to Fortune

Devs on RunPod A100s or H100s report models hitting GPT-4 parity on custom blockchain queries after blending NEW_TRAINING01 with HelpSteer2. Together AI’s playbook shows proprietary data like these premiums gets you roughly 90% of GPT-4’s performance at a fraction of the cost. For marketplaces, it’s game-changing: train Llama 3.1 to automate onchain payments, detect rugs, or simulate DEX trades. Ultrachat_200k adds multilingual edge for global adoption, FineTome seals it with reasoning that crushes benchmarks.
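Blending two datasets mostly comes down to choosing a mixing ratio and sampling reproducibly. Below is a minimal sketch of weighted sampling with a fixed seed. The source names, rows, and the 70/30 split are placeholders for illustration, not a recommended recipe.

```python
import random
from typing import Dict, List, Tuple


def blend(
    sources: Dict[str, List[str]],
    weights: Dict[str, float],
    n: int,
    seed: int = 0,
) -> List[Tuple[str, str]]:
    """Draw n rows, picking the source of each row in proportion to its weight."""
    rng = random.Random(seed)  # local generator so runs are reproducible
    names = list(sources)
    picks = rng.choices(names, weights=[weights[name] for name in names], k=n)
    return [(name, rng.choice(sources[name])) for name in picks]


# Placeholder rows standing in for two real datasets.
SOURCES = {
    "chat": ["chat row 1", "chat row 2", "chat row 3"],
    "preference": ["pref row 1", "pref row 2"],
}
WEIGHTS = {"chat": 0.7, "preference": 0.3}  # hypothetical mixing ratio

mixed = blend(SOURCES, WEIGHTS, n=10, seed=42)
for source_name, row in mixed:
    print(source_name, "|", row)
```

Sampling with replacement keeps the sketch short; for a real run you would more likely shuffle and cap each source so that no row repeats.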

Why grind free scraps when FineTuneMarket delivers instant access, blockchain-secured, royalty-fueled? It’s the momentum play in AI datasets, high risk if you sit out, massive rewards for the bold. Load up OpenHermes, fire up QLoRA, and watch your Llama 3.1 dominate DeFi analytics. Crypto traders like me know: spot the edge early, ride it hard. These datasets on blockchain marketplaces? That’s your next 10x.