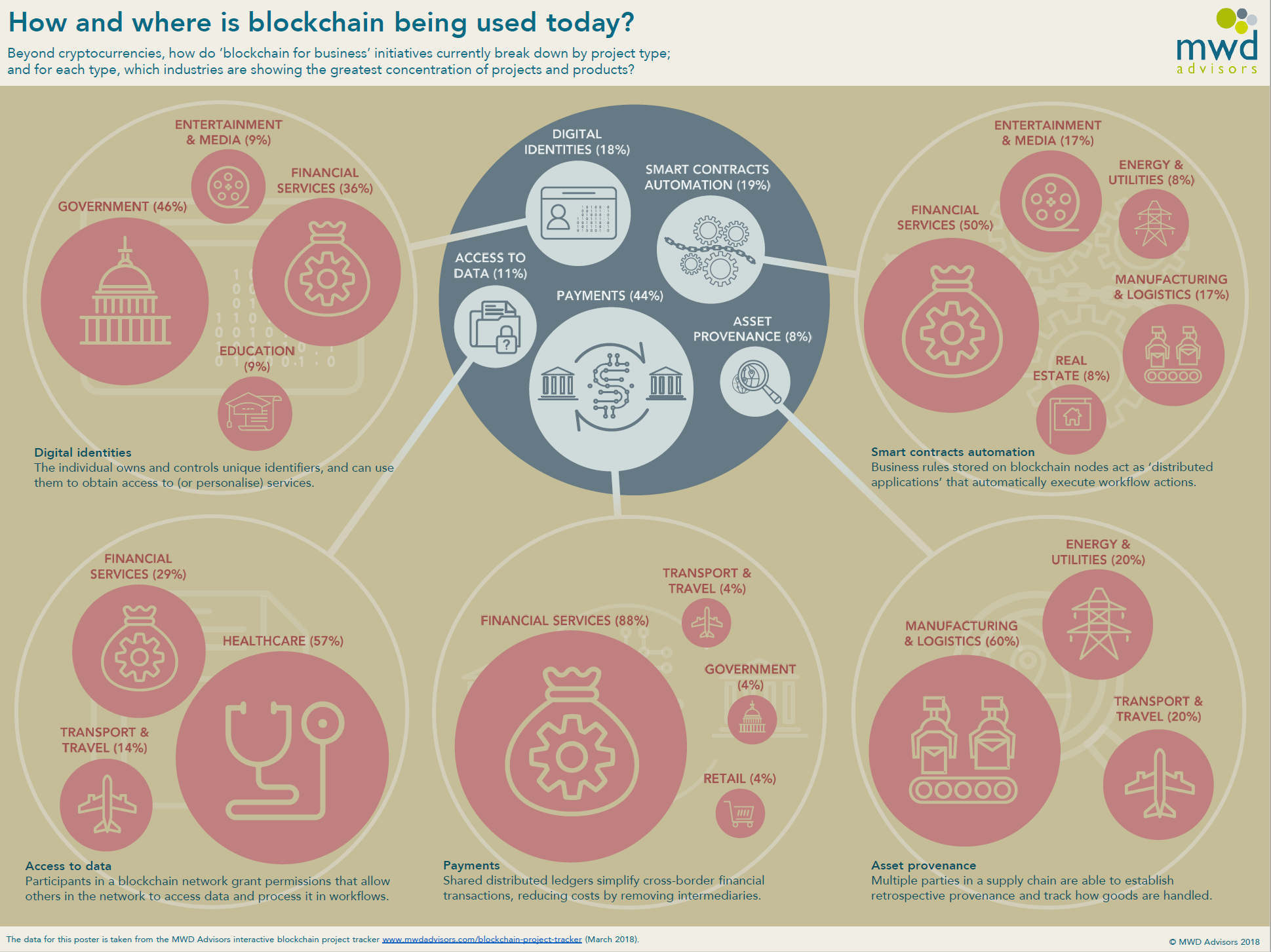

In the accelerating race to build more capable large language models, supervised fine-tuning datasets stand out as the linchpin for precision alignment. As we hit 2026, developers and enterprises are pivoting from generic web scrapes to premium, domain-specific collections that deliver measurable lifts in model performance. Onchain marketplaces like FineTuneMarket.com are reshaping this landscape, offering secure tokenization of datasets with perpetual royalties for creators, all powered by blockchain’s immutable ledger.

Key Benefits of Premium SFT Datasets on Onchain Markets

- Ongoing Royalties: Creators earn perpetual royalties via smart contracts on dataset usage and resales, as enabled by blockchain marketplaces.
- Quality Assurance: Curated, licensed, deduplicated data like SyndiGate and Benzinga datasets ensures high standards for reliable LLM fine-tuning.
- Domain Specificity: Tailored datasets such as NIFTY Financial News and CFGPT for finance/crypto align LLMs precisely with onchain applications.
- Immutable Provenance: Blockchain registration verifies data origin and integrity throughout the LLM lifecycle.
- Decentralized Incentives: Token rewards motivate high-quality contributions, powering open AI training as in decentralized RL frameworks.

Supervised fine-tuning refines base LLMs by training on labeled input-output pairs tailored to niche applications, from financial forecasting to blockchain agent behaviors. Data from Rain Infotech underscores how domain-specific labels align models tightly with business needs, often yielding 20-30% gains in task-specific accuracy over pre-trained baselines. Yet the real game-changer is accessibility via onchain dataset marketplaces, where scarcity of high-quality data meets decentralized distribution.
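For readers who want the objective spelled out, training on labeled input-output pairs usually means minimizing the token-level negative log-likelihood of the labeled output given the input. The notation here is ours rather than taken from the cited sources: $x$ is the input, $y$ the labeled output, $\theta$ the model parameters, and $\mathcal{D}$ the fine-tuning dataset.

$$
\mathcal{L}_{\text{SFT}}(\theta) = -\,\mathbb{E}_{(x,\,y)\sim\mathcal{D}}\left[\sum_{t=1}^{|y|} \log p_\theta\left(y_t \mid x,\, y_{<t}\right)\right]
$$

Only the output tokens $y_t$ contribute to the loss, which is why label quality in the dataset matters so much.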

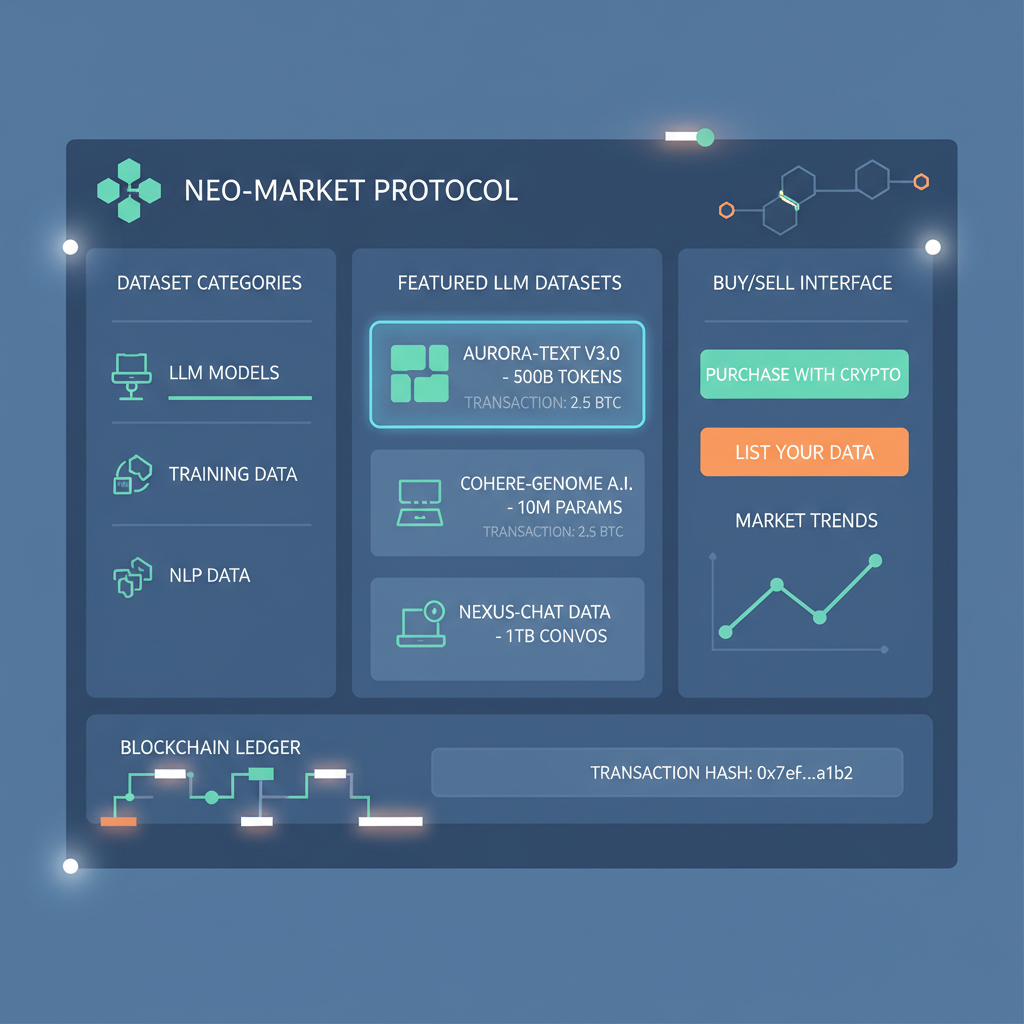

Blockchain’s Role in Democratizing LLM Fine-Tuning Data

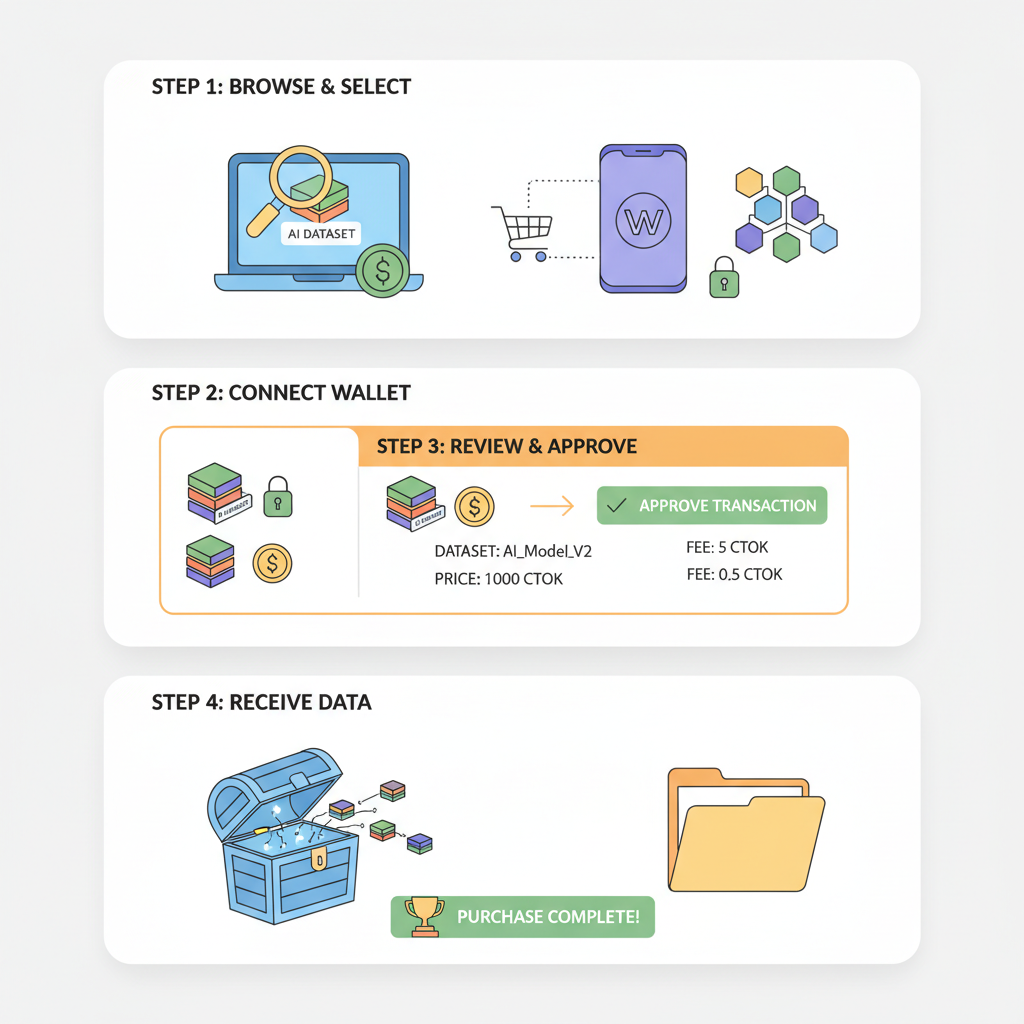

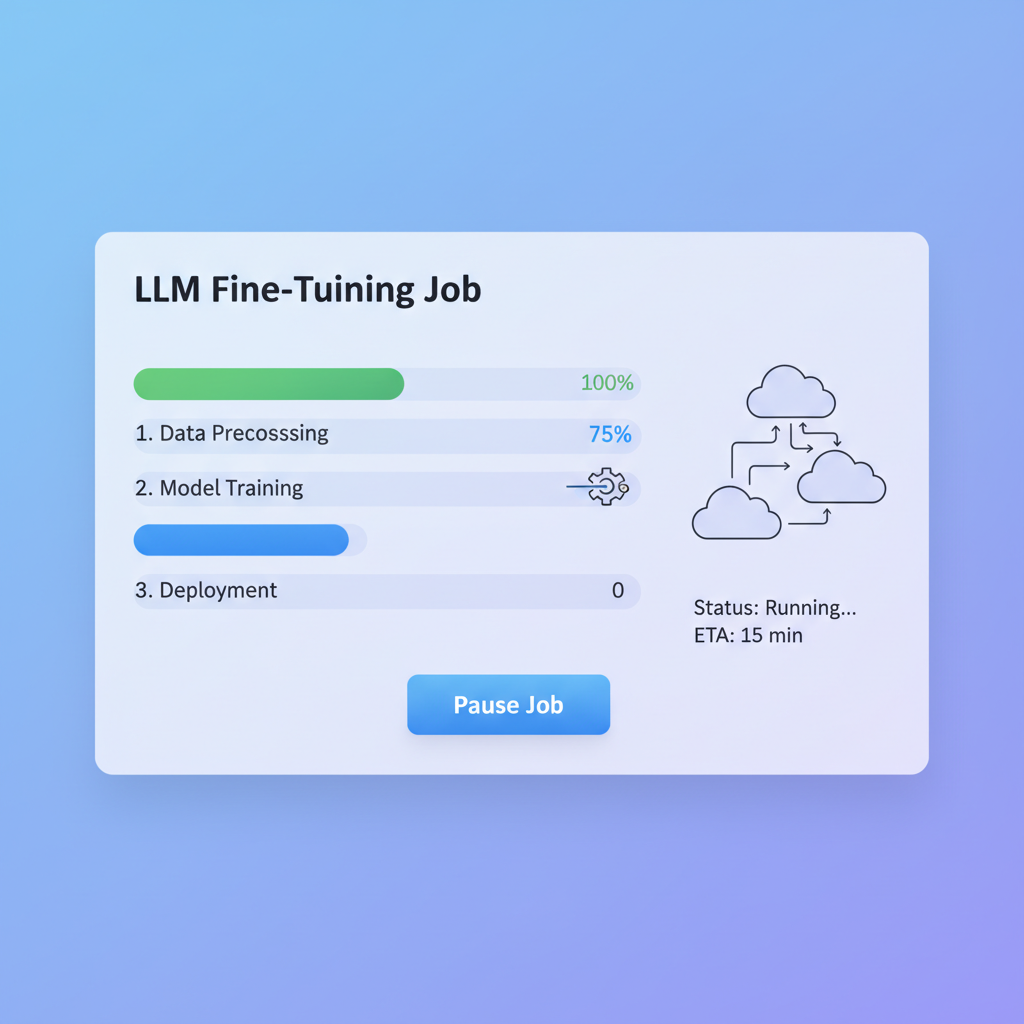

Integrating blockchain into the LLM lifecycle, as outlined in recent ScienceDirect frameworks, starts with dataset registration on immutable chains. This ensures provenance, preventing poisoned data that plagues centralized repositories. Galaxy Research highlights how fine-tuning workflows, unlike compute-heavy pre-training, thrive in decentralized setups with minimal bandwidth demands. Miners and node operators can now contribute to RLHF or SFT cycles, earning tokens for compute and curation.
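To make the registration step concrete, here is a minimal sketch of how a dataset fingerprint could be computed before it is recorded onchain and then re-checked by a buyer. It assumes the marketplace stores a SHA-256 digest of a canonical JSON encoding; the function names and record fields are illustrative, not any platform's actual API.

```python
import hashlib
import json


def dataset_fingerprint(records):
    """Return a SHA-256 hex digest over a canonical JSON encoding of the records."""
    digest = hashlib.sha256()
    for record in records:
        # sort_keys and fixed separators yield identical bytes regardless of key order.
        line = json.dumps(record, sort_keys=True, separators=(",", ":"), ensure_ascii=False)
        digest.update(line.encode("utf-8"))
        digest.update(b"\n")
    return digest.hexdigest()


def verify_dataset(records, registered_fingerprint):
    """Check a local copy against the fingerprint recorded at registration time."""
    return dataset_fingerprint(records) == registered_fingerprint


if __name__ == "__main__":
    # Hypothetical SFT records, used only for illustration.
    original = [
        {"prompt": "What is a gas fee?", "completion": "A fee paid to process a transaction."},
        {"prompt": "Define staking.", "completion": "Locking tokens to help secure a network."},
    ]
    registered = dataset_fingerprint(original)  # the value a marketplace might store onchain
    tampered = [dict(original[0], completion="Send your keys to me."), original[1]]
    print(verify_dataset(original, registered))
    print(verify_dataset(tampered, registered))
```

Any change to a label, or to the order of records, produces a different digest, so a poisoned copy fails the check. Hashing per-record and building a Merkle tree would allow partial verification, but that is beyond this sketch.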

From my vantage as a portfolio manager blending quant models with macro trends, this setup mirrors tokenized real-world assets: datasets become investable primitives. Creators embed smart contracts for royalties on every downstream use, fostering a flywheel of quality inflows. Platforms such as those topping SiliconFlow’s 2026 list, including Hugging Face and Axolotl, are increasingly onchain-compatible, streamlining purchases of royalty-bearing fine-tuning datasets.
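The royalty mechanics can be sketched off-chain in a few lines. This is an illustration under assumptions, not a real contract: the 7.5% creator rate and 1% platform fee are made-up figures, `RoyaltyTerms` and `split_payment` are hypothetical names, and amounts are integers in the token's smallest unit, as smart contracts typically avoid floating point.

```python
from dataclasses import dataclass

BPS_DENOMINATOR = 10_000  # 10,000 basis points = 100%


@dataclass(frozen=True)
class RoyaltyTerms:
    creator_bps: int   # creator royalty in basis points (500 = 5%)
    platform_bps: int  # hypothetical marketplace fee in basis points


def split_payment(amount_units: int, terms: RoyaltyTerms) -> dict:
    """Split a payment (smallest token units) among creator, platform, and seller."""
    if amount_units < 0:
        raise ValueError("amount_units must be non-negative")
    if min(terms.creator_bps, terms.platform_bps) < 0:
        raise ValueError("basis points must be non-negative")
    if terms.creator_bps + terms.platform_bps > BPS_DENOMINATOR:
        raise ValueError("combined cuts exceed 100%")
    creator = amount_units * terms.creator_bps // BPS_DENOMINATOR
    platform = amount_units * terms.platform_bps // BPS_DENOMINATOR
    # The seller receives the remainder, so rounding never creates or destroys units.
    seller = amount_units - creator - platform
    return {"creator": creator, "platform": platform, "seller": seller}


if __name__ == "__main__":
    terms = RoyaltyTerms(creator_bps=750, platform_bps=100)  # assumed rates
    print(split_payment(1_000_000, terms))
    # {'creator': 75000, 'platform': 10000, 'seller': 915000}
```

Applying the same split on every resale is what makes the royalty "perpetual": the creator's cut is computed from each downstream payment rather than paid once at listing.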

Standout Premium Datasets Powering SFT in 2026

As of February 2026, onchain marketplaces host curated gems like the NIFTY Financial News Headlines Dataset. Split into NIFTY-LM for causal language modeling and NIFTY-RL for alignment, it packs deduplicated headlines with market metadata, ideal for forecasting models. Hugging Face hosts it, but onchain versions enable fractional ownership and royalties.
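Deduplication is worth a quick illustration, since repeated headlines inflate a dataset without adding signal. The sketch below shows one simple approach, exact matching after text normalization. It is not the NIFTY authors' documented method, and the `headline` field name is an assumption.

```python
import re
import unicodedata


def normalize_headline(text: str) -> str:
    """Case-fold, drop punctuation, and collapse whitespace for comparison."""
    text = unicodedata.normalize("NFKC", text).casefold()
    text = re.sub(r"[^\w\s]", " ", text)
    return " ".join(text.split())


def dedupe_headlines(rows):
    """Keep the first row for each distinct normalized headline, preserving order."""
    seen = set()
    unique = []
    for row in rows:
        key = normalize_headline(row["headline"])
        if key and key not in seen:
            seen.add(key)
            unique.append(row)
    return unique


if __name__ == "__main__":
    # Hypothetical rows, used only for illustration.
    rows = [
        {"headline": "Fed Holds Rates Steady", "date": "2024-01-31"},
        {"headline": "fed holds rates steady!", "date": "2024-01-31"},
        {"headline": "Oil Slides 3%", "date": "2024-02-01"},
    ]
    for row in dedupe_headlines(rows):
        print(row["headline"])
```

Exact matching misses paraphrased duplicates; near-duplicate methods such as MinHash catch more at the cost of extra complexity.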

SyndiGate and Benzinga elevate the game with fully licensed corpora: multilingual publications spanning global news, perfect for multilingual LLMs. These aren’t raw scrapes; they’re machine-readable, rights-cleared troves supporting supervised fine-tuning datasets across finance, geopolitics, and beyond.

RefinedWeb’s 600 billion token extract proves web data, when rigorously filtered, rivals human-curated sets, a boon for broad-domain fine-tuning. CFGPT’s CFData, with 141 billion tokens in Chinese financial analytics, targets six tasks from analytics to advisory, showcasing how premium, blockchain-listed AI datasets can specialize in Eastern markets. Platypus’s Open-Platypus subset delivers leaderboard-topping efficiency, using fractions of compute for strong quantitative metrics.

Navigating Formats and Workflows for Onchain SFT

Red Hat’s insights into dataset formats reveal the shift from structured JSON to tokenized streams optimized for autoregressive training. Tools like LLM-AutoDP on GitHub automate processing, boosting win rates over 80% on medical benchmarks. Extend that to crypto Q&A with Kaggle’s 804-pair set, and blockchain agents emerge sharper. Yuval Avidani notes fine-tuning trumps RAG for tone consistency, a must for enterprise deployments.
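As a small illustration of the format step, the sketch below converts question-answer pairs into chat-style JSONL records with `role` and `content` fields, a layout many SFT toolchains accept. The `question` and `answer` field names and the sample pair are assumptions, not the Kaggle set's actual schema, so check your trainer's documentation for the exact format it expects.

```python
import json


def qa_to_chat_record(question: str, answer: str, system_prompt: str = "") -> dict:
    """Wrap one Q&A pair as a chat-style training record."""
    messages = []
    if system_prompt:
        messages.append({"role": "system", "content": system_prompt})
    messages.append({"role": "user", "content": question.strip()})
    messages.append({"role": "assistant", "content": answer.strip()})
    return {"messages": messages}


def to_jsonl(pairs, system_prompt: str = "") -> str:
    """Serialize pairs as JSON Lines: one training record per line."""
    lines = []
    for pair in pairs:
        record = qa_to_chat_record(pair["question"], pair["answer"], system_prompt)
        lines.append(json.dumps(record, ensure_ascii=False))
    return "\n".join(lines)


if __name__ == "__main__":
    # Hypothetical pair, used only for illustration.
    pairs = [{"question": "What is a DAO? ", "answer": "An organization governed by its members through onchain votes."}]
    print(to_jsonl(pairs, system_prompt="You are a concise crypto assistant."))
```

The trainer's tokenizer then turns each record into the token stream used for autoregressive training, typically by applying the model's chat template.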

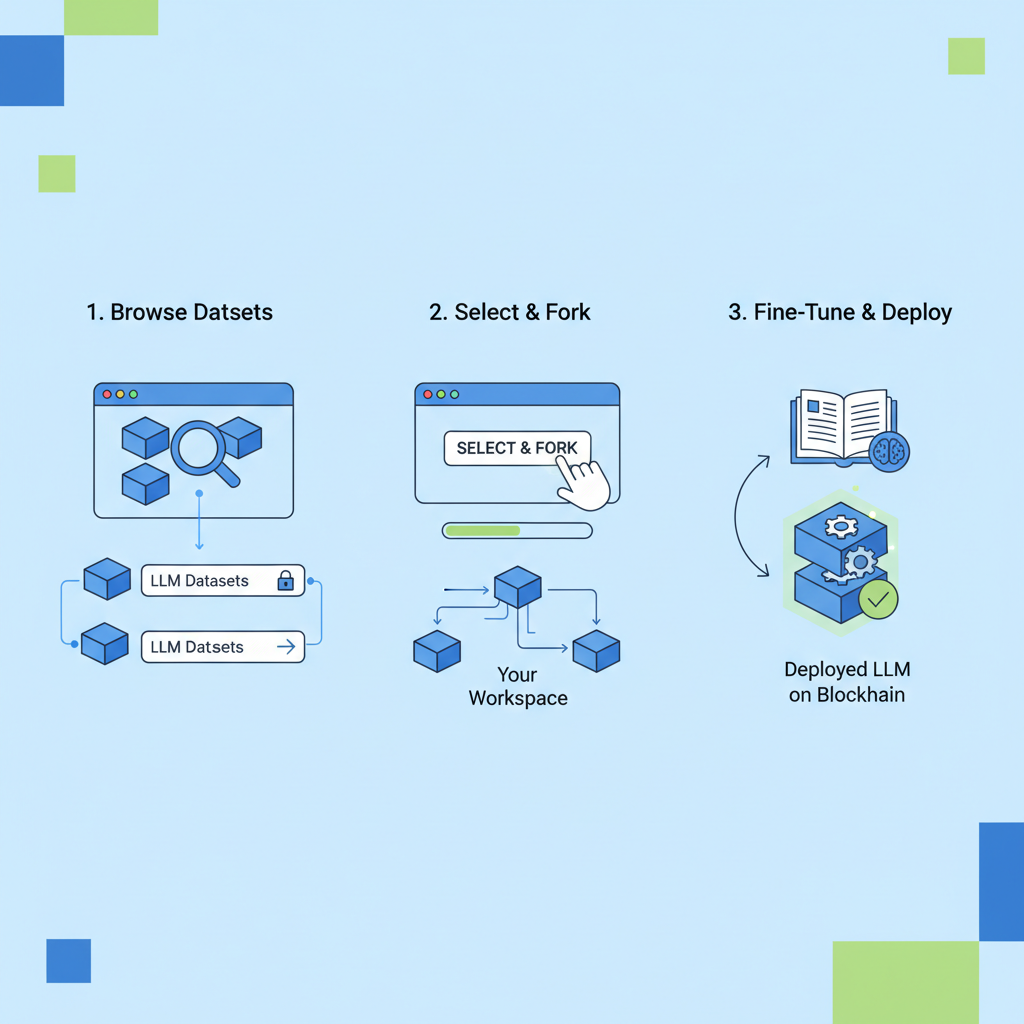

Onchain marketplaces cut friction: instant payments, verifiable scarcity, and composability with DeFi yields on idle datasets. For quants like me, this means diversified exposure to AI infra without custody risks. Kaggle’s crypto dataset hints at explosive growth in niche SFT, where onchain dataset marketplace listings could command premiums as agentic LLMs proliferate.

Decentralized reinforcement learning setups, as detailed by Jung-Hua Liu, position miners to fine-tune agentic LLMs directly onchain, hosting model instances and earning for RL contributions. This scales supervised fine-tuning datasets beyond silos, with blockchain ensuring tamper-proof provenance for every label.

Premium Platforms & Strategies for SFT Datasets on Onchain Marketplaces

| Platform/Strategy | Key Features | Royalties & Alpha | Use Cases |
|---|---|---|---|
| SiliconFlow + LLaMA-Factory | Top 2026 fine-tuning platforms 🚀; low-bandwidth SFT & RLHF; open-source LLM support | 5-10% perpetual royalties 💰; mirrors tokenized funds; tamper-proof blockchain provenance | Backtesting crypto strategies; autonomous agents for trades; SiliconFlow integration for efficient training |
| Hugging Face + NIFTY Dataset | Premium financial headlines 📈; NIFTY-LM for SFT, NIFTY-RL for alignment; deduplicated news with metadata | 5-10% perpetual royalties 💰; tokenized access on marketplaces; immutable onchain logs | Forecasting trades/auditing <1% error; autonomous blockchain agents; financial market prediction |
| Kaggle Crypto Q&A | 804 curated crypto/blockchain Q&A pairs 💹; domain-specific labeled data; ideal for supervised fine-tuning | 5-10% perpetual royalties 💰; alpha via onchain licensing; provenance-verified datasets | Crypto Q&A alignment; blockchain tool-learning agents; domain adaptation for DeFi queries |
| SyndiGate Premium Datasets | Fully licensed multilingual corpora 🌍; high-quality global publications; machine-readable for LLM training | 5-10% perpetual royalties 💰; tokenized fund-like royalties; tamper-proof dataset registration | International finance SFT; multilingual market analysis; enterprise AI applications |
| Benzinga Datasets | Licensed financial content collections 📰; premium news & analytics corpora; supports ML fine-tuning needs | 5-10% perpetual royalties 💰; onchain marketplace alpha; blockchain provenance tracking | Financial forecasting agents; trade auditing with LLMs; global content for backtesting |
| Platypus + Decentralized RL | Quick refinement with Open-Platypus ⚡; 80%+ win rates on processed data; merged fine-tuned LLMs topping leaderboards | 5-10% perpetual royalties 💰; decentralized miner rewards; immutable training logs onchain | Agentic LLMs for blockchains; efficient RLHF in crypto; autonomous execution standards |

Quality metrics back the shift. Platypus models topped leaderboards using lean datasets, while RefinedWeb’s deduped tokens matched curated baselines at scale. For Chinese markets, CFGPT’s 141 billion tokens across six tasks illustrate domain depth, now tokenizable for global access via blockchain-listed premium AI datasets.

Workflows simplify too. Start with dataset discovery on onchain dataset marketplace listings, purchase via wallet, then pipe into Axolotl for SFT. GitHub’s LLM-AutoDP handles preprocessing, yielding win rates above 80% on benchmarks. No more ETL nightmares; blockchain verifies freshness and licensing in one transaction.

This ecosystem fosters innovation loops. Researchers fine-tune on SyndiGate’s multilingual news, enterprises adapt for compliance agents, devs bootstrap crypto oracles. Galaxy’s bandwidth analysis confirms: SFT’s low sync needs suit decentralized nets, slashing costs 70% versus cloud monopolies.

Risks persist, balanced against upsides. Data drift demands periodic retrains, but onchain audit trails mitigate that. Overfitting lurks in narrow sets, yet diversification across NIFTY, Benzinga, and Platypus hedges it. As an FRM-certified portfolio manager, I stress position sizing: allocate 10-20% of AI infra budgets to tokenized datasets for uncorrelated returns.
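On the data side, diversification can be as simple as sampling a training mix from several sources by weight, so no single narrow set dominates. The sketch below is one way to do that under assumptions: the source names, weights, and examples are placeholders, and `mix_datasets` is a hypothetical helper rather than part of any named tool.

```python
import random


def mix_datasets(sources: dict, weights: dict, n_samples: int, seed: int = 0) -> list:
    """Draw n_samples (source, example) pairs, picking each source by weight.

    Sampling is with replacement, so small sources can be upweighted.
    """
    names = sorted(sources)
    probs = [weights[name] for name in names]
    if any(p < 0 for p in probs) or sum(probs) <= 0:
        raise ValueError("weights must be non-negative and sum to a positive value")
    if any(not sources[n] for n, p in zip(names, probs) if p > 0):
        raise ValueError("every source with positive weight needs at least one example")
    rng = random.Random(seed)  # a fixed seed keeps the mix reproducible
    mixed = []
    for _ in range(n_samples):
        name = rng.choices(names, weights=probs, k=1)[0]
        mixed.append((name, rng.choice(sources[name])))
    return mixed


if __name__ == "__main__":
    # Placeholder examples and weights, used only for illustration.
    sources = {
        "headlines": ["h1", "h2", "h3"],
        "licensed_news": ["n1", "n2"],
        "reasoning": ["r1", "r2", "r3", "r4"],
    }
    weights = {"headlines": 0.4, "licensed_news": 0.4, "reasoning": 0.2}
    for name, example in mix_datasets(sources, weights, n_samples=5, seed=42):
        print(name, example)
```

Evaluating on a held-out set from each source after training remains the practical check for whether the mix actually reduced overfitting.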

Looking ahead, 2026 marks the inflection. With agentic LLMs demanding specialized, royalty-bearing fine-tuning data, marketplaces evolve into full-stack hubs: datasets, compute bounties, model vaults. FineTuneMarket.com leads, blending discovery with onchain economics for sustainable growth. Developers win sharper models, creators perpetual income, investors diversified alpha. Balance, indeed, propels this frontier.