Large language models (LLMs) have transformed industries, yet their fine-tuning remains hobbled by data scarcity. Developers face skyrocketing training costs, legal hurdles from lawsuits, and GPU shortages that make scaling specialized models a nightmare. Premium datasets on onchain AI dataset marketplaces offer a disciplined path forward, much like value investing in undervalued assets with long-term yields.

Recent reports paint a stark picture: AI startups grapple with the costs of fine-tuning LLMs and serving inference, compounded by token management and litigation risks. Blockchain enters as a decentralized savior, addressing GPU scarcity while enabling secure data exchange. Think of it this way: adapting foundation models with task-specific datasets accelerates development, echoing efficient capital allocation in equity markets.

The Data Crunch Squeezing LLM Performance

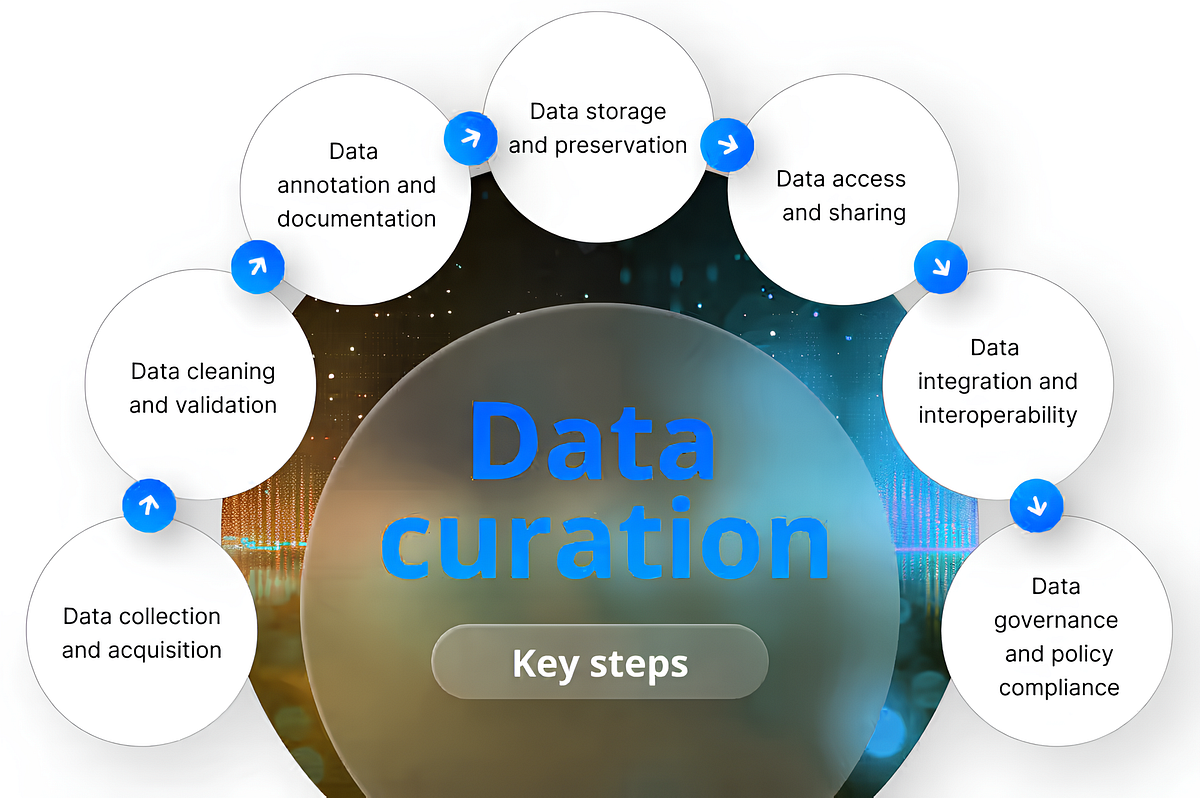

Fine-tuning LLMs demands high-quality, domain-specific data, but supply lags. Web scraping invites lawsuits, synthetic data struggles with fidelity, and public sources lack depth for niches like finance or healthcare. Enter premium datasets as a fix for fine-tuning data scarcity: curated collections that boost accuracy without ethical pitfalls. From FinLoRA’s 19 financial datasets for benchmarking LoRA methods to Wealth Management QA pairs for conversational agents, these resources show that targeted data beats sheer volume.

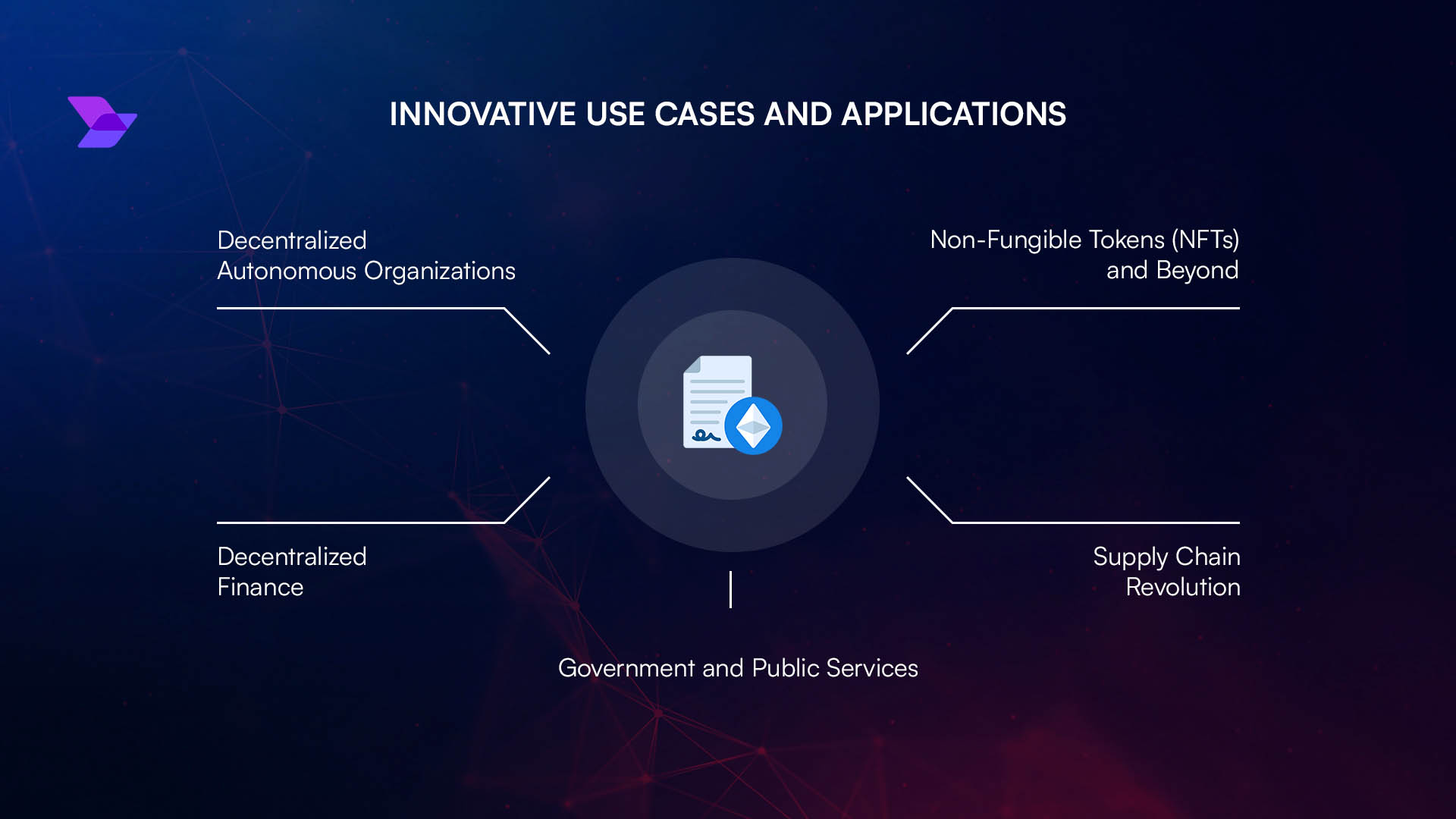

Yet, traditional acquisition is messy. Negotiations drag, quality varies, and privacy regulations loom, especially in FinTech. Onchain platforms flip this script, tokenizing datasets for instant access and perpetual royalties – a nod to dividend aristocrats paying out indefinitely.

Onchain Marketplaces Unlock Specialized Datasets for LLMs in 2026

Blockchain marketplaces like FineTuneMarket.com pioneer this space, streamlining the discovery and purchase of LLM fine-tuning datasets. Creators earn onchain royalties every time their datasets are used, fostering an ecosystem where data becomes a compounding asset. Platforms such as OpenDataBay offer licensed exchanges in text, image, and multimodal formats, sidestepping scraping woes. DataXID generates privacy-safe synthetic data that mirrors real patterns, slashing compliance costs.

Key Onchain Marketplace Advantages

- Secure Payments: Blockchain transactions ensure trustless, tamper-proof payments without intermediaries, reducing fraud risks in high-value dataset trades.
- Perpetual Royalties: Smart contracts automatically distribute ongoing royalties to creators on resales or uses, fostering sustainable data economies.
- Instant Access: Post-payment, datasets unlock immediately via decentralized storage like IPFS, enabling rapid LLM fine-tuning workflows.
- Domain-Specific Curation: Tailored datasets for niches like finance (e.g., NIFTY Financial News, FinLoRA), optimizing LLM performance in specialized tasks.
- Reduced Legal Risks: Licensed platforms like OpenDataBay provide compliant data, mitigating scraping lawsuits and privacy issues amid rising AI training costs.
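
To make the perpetual-royalty mechanism concrete, here is a minimal off-chain sketch, assuming a hypothetical fixed royalty rate expressed in basis points and a simple per-sale ledger. It is not a smart contract and does not reflect any specific marketplace's terms; the class name, the 5% rate, and the account names are illustrative only.

```python
from dataclasses import dataclass, field

BPS_DENOMINATOR = 10_000  # 10,000 basis points = 100%


@dataclass
class RoyaltyLedger:
    """Hypothetical off-chain model of per-sale royalty accrual for one dataset."""

    creator: str
    royalty_bps: int  # assumed royalty rate; 500 bps = 5%
    balances: dict[str, int] = field(default_factory=dict)

    def _credit(self, account: str, amount: int) -> None:
        self.balances[account] = self.balances.get(account, 0) + amount

    def record_sale(self, seller: str, price: int) -> tuple[int, int]:
        """Split a sale price (integer smallest units) into (royalty, seller proceeds)."""
        if price < 0:
            raise ValueError("price must be non-negative")
        # Integer floor division, mirroring the integer-only math of onchain tokens.
        royalty = price * self.royalty_bps // BPS_DENOMINATOR
        proceeds = price - royalty
        self._credit(self.creator, royalty)
        self._credit(seller, proceeds)
        return royalty, proceeds


ledger = RoyaltyLedger(creator="creator", royalty_bps=500)
ledger.record_sale("alice", 1_000_000)  # first sale: creator accrues 50,000
ledger.record_sale("bob", 2_000_000)    # resale: creator accrues another 100,000
print(ledger.balances["creator"])       # 150000
```

Working in integer smallest units rather than floats keeps every split exact, which is why the royalty is computed with floor division; a real contract would also need access control and payment handling that this sketch leaves out.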

Consider the NIFTY Financial News Headlines Dataset: it ships in two versions, one for supervised fine-tuning and one for RLHF, packed with deduplicated headlines and metadata. Such resources elevate LLMs in market forecasting, where generic pre-training falls short. Onchain venues amplify this by ensuring provenance and incentivizing quality through economic loops: usage drives token utility, binding supply to demand.
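
The two preparation steps mentioned above, deduplicating headlines and shaping them into supervised or preference-style records, can be sketched roughly as follows. The record layout (`prompt`/`completion`, `chosen`/`rejected`), the normalization rule, and the "Rise"/"Fall" labels are assumptions for illustration, not NIFTY's actual schema.

```python
import re


def normalize(headline: str) -> str:
    """Lowercase and collapse whitespace so trivially different copies compare equal."""
    return re.sub(r"\s+", " ", headline.strip().lower())


def deduplicate(headlines: list[str]) -> list[str]:
    """Keep the first occurrence of each normalized headline, preserving order."""
    seen: set[str] = set()
    unique = []
    for h in headlines:
        key = normalize(h)
        if key not in seen:
            seen.add(key)
            unique.append(h)
    return unique


def to_sft_record(headlines: list[str], label: str) -> dict:
    """Hypothetical SFT record: the day's headlines as prompt, market direction as target."""
    prompt = "Headlines:\n" + "\n".join(f"- {h}" for h in headlines) + "\nMarket direction:"
    return {"prompt": prompt, "completion": f" {label}"}


def to_preference_record(headlines: list[str], chosen: str, rejected: str) -> dict:
    """Hypothetical RLHF-style record: same prompt, preferred vs. dispreferred answer."""
    prompt = to_sft_record(headlines, chosen)["prompt"]
    return {"prompt": prompt, "chosen": f" {chosen}", "rejected": f" {rejected}"}


raw = ["Fed holds rates steady", "fed holds  rates steady ", "Tech stocks rally"]
print(deduplicate(raw))  # ['Fed holds rates steady', 'Tech stocks rally']
```

Exact-match deduplication after normalization is the simplest option; near-duplicate detection (for example, shingling or embedding similarity) would catch rephrased headlines but is out of scope here.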

Why Premium Data Outperforms in Fine-Tuning Economics

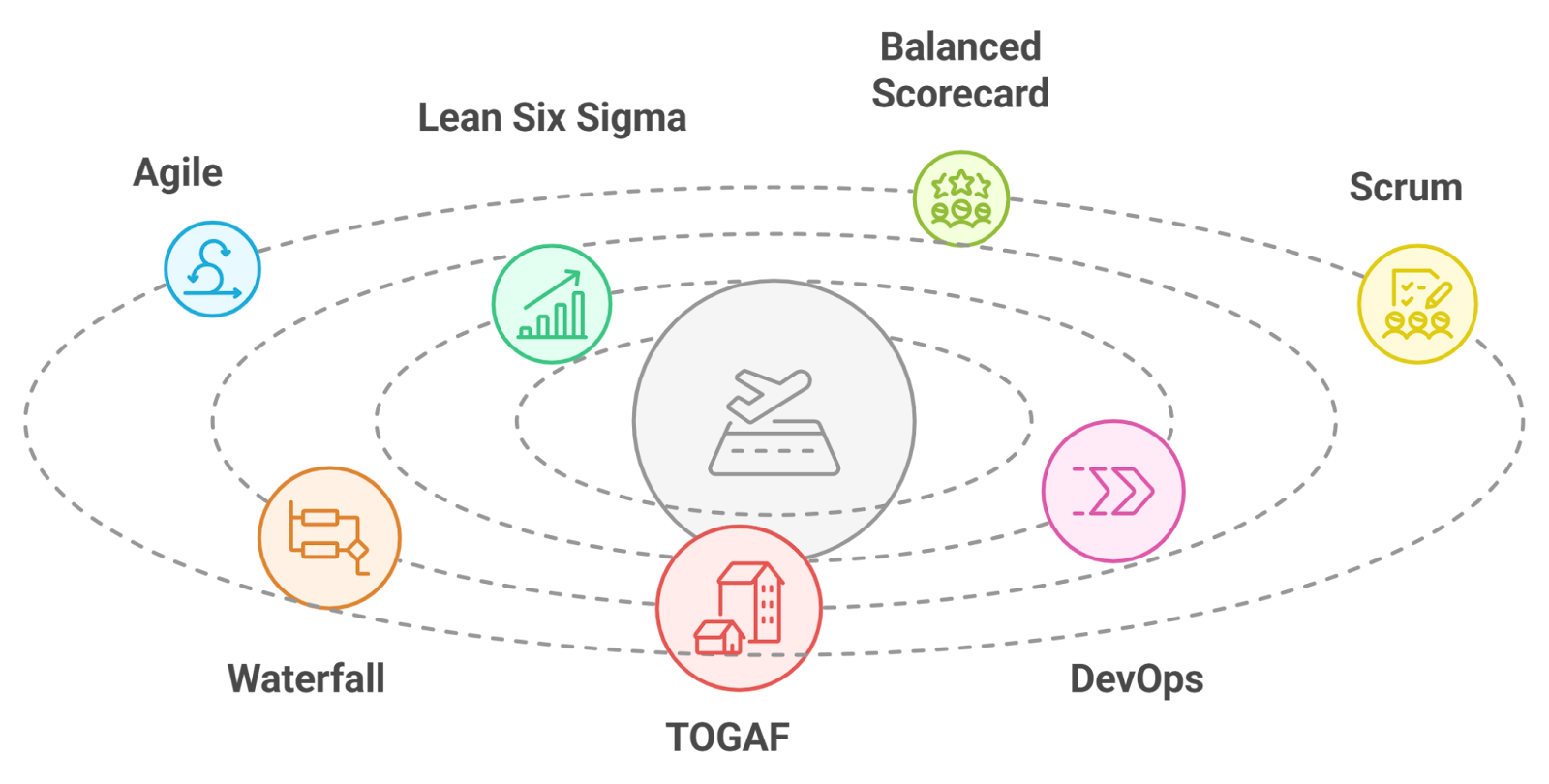

Investors know: cheap inputs yield volatile returns. Similarly, free datasets breed noisy models prone to hallucinations. Premium ones, vetted and enriched, deliver precise adaptations. GSMI 5.0 highlights how narrow, task-specific datasets speed up AI application development; pair that with the LoRA efficiency shown by FinLoRA, and costs plummet while performance soars. Amazon’s rumored content marketplace signals mainstream validation, but onchain platforms lead with transparency and creator rewards.

Trends reports forecast convergence: multi-agent LLMs, graph retrieval, and Web3 security. Here, datasets aren’t mere fuel; they’re equity stakes in AI’s future, rewarding patient builders with royalties akin to blue-chip dividends.

Picture a wealth management firm fine-tuning an LLM for personalized advice. Generic data yields bland responses; premium financial datasets forge sharp insights, much like dissecting balance sheets for hidden value. Platforms like FineTuneMarket.com turn this into reality, with onchain payments ensuring frictionless deals and royalties flowing back to creators indefinitely.

Financial Domain: Where Premium Data Delivers Alpha

Financial applications spotlight the edge of specialized datasets for LLMs in 2026. FinLoRA’s benchmarks across 19 datasets reveal LoRA’s prowess on professional tasks, from earnings prediction to risk assessment. The Wealth Management QA dataset, blending synthetic and real pairs, equips models like Mistral for nuanced client dialogues. NIFTY’s headlines, deduplicated and metadata-rich, sharpen forecasting via supervised fine-tuning or RLHF.
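
Turning QA pairs like those in the Wealth Management dataset into training data for a conversational model typically means wrapping each pair as a short chat transcript. The sketch below uses the widely seen role/content message layout serialized as JSONL; the system prompt and function names are hypothetical, and a particular model's chat template or fine-tuning service may expect a different format.

```python
import json

# Hypothetical system prompt; adjust to the target assistant's persona.
SYSTEM_PROMPT = "You are a careful wealth management assistant."


def qa_to_chat(question: str, answer: str) -> dict:
    """Wrap one QA pair as a system/user/assistant message list."""
    return {
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": question},
            {"role": "assistant", "content": answer},
        ]
    }


def to_jsonl(pairs: list[tuple[str, str]]) -> str:
    """Serialize QA pairs as JSON Lines: one chat record per line."""
    return "".join(
        json.dumps(qa_to_chat(q, a), ensure_ascii=False) + "\n" for q, a in pairs
    )


pairs = [("What is a Roth IRA?", "A retirement account funded with after-tax money.")]
print(to_jsonl(pairs), end="")
```

Returning a string instead of writing a file keeps the sketch testable; in practice the result would be written to a `.jsonl` file and handed to the fine-tuning pipeline.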

Comparison of Key Financial LLM Datasets

| Dataset | Focus | Size | Best Use | Pros/Cons |
|---|---|---|---|---|
| FinLoRA | LoRA benchmarking on general/professional financial tasks | 19 curated datasets | Fine-tuning with LoRA methods for diverse financial tasks | ✅ Open-source & diverse ✅ Covers broad financial domains ❌ High computational needs for benchmarks |
| Wealth Management QA | Conversational QA for wealth management | Hybrid synthetic/real QA pairs | Fine-tuning GPT, Mistral, OpenELM for financial conversations | ✅ Privacy-safe & domain-specific ✅ Suitable for conversational agents ❌ Synthetic data may miss nuances |
| NIFTY | Financial news headlines with market indices | Deduplicated headlines (NIFTY-LM for SFT, NIFTY-RL for RLHF) | Supervised fine-tuning & RLHF for market forecasting | ✅ Publicly available ✅ Rich metadata & indices ❌ Limited to headlines |

These aren’t toys; they’re production-grade tools that cut hallucination risk and boost ROI. Onchain marketplaces aggregate them, adding blockchain provenance to verify integrity, which is crucial as FinTech regulations demand audit trails. Synthetic options from DataXID complement them by generating compliant volumes that preserve real-world patterns without leaking PII.
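
Provenance checks like this usually come down to content hashing: if a marketplace records a dataset's cryptographic digest in an onchain transaction (assumed here to be a SHA-256 hex string), a buyer can recompute the digest locally and compare. This sketch covers only the local half; fetching the recorded digest from a chain is left out, and the helper names are hypothetical.

```python
import hashlib
from typing import Iterable, Iterator


def read_chunks(path: str, size: int = 1 << 20) -> Iterator[bytes]:
    """Yield a file's bytes in 1 MiB chunks so large datasets need not fit in memory."""
    with open(path, "rb") as f:
        while chunk := f.read(size):
            yield chunk


def dataset_digest(chunks: Iterable[bytes]) -> str:
    """Incremental SHA-256 over the dataset bytes, returned as lowercase hex."""
    h = hashlib.sha256()
    for chunk in chunks:
        h.update(chunk)
    return h.hexdigest()


def verify_provenance(chunks: Iterable[bytes], recorded_digest: str) -> bool:
    """True if the local bytes match the digest assumed to be recorded onchain."""
    return dataset_digest(chunks) == recorded_digest.strip().lower()
```

A buyer would call something like `verify_provenance(read_chunks("finance_sft.jsonl"), recorded)`, where the filename and `recorded` value are placeholders. Any single flipped byte changes the digest, so a match is strong evidence that the downloaded file is the one the creator registered.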

Economics favor the premium route. Training costs have ballooned in the wake of lawsuits, per USTechTimes, while GPU shortages persist. Narrow datasets cut compute needs dramatically, and LoRA adapters train only a small fraction of a model’s parameters. Royalties incentivize curation, creating a flywheel: more quality data draws more users, lifting demand for usage-linked tokens. It’s value investing distilled: buy in now with little effort, harvest compounding yields later.
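
The "fraction of parameters" claim is easy to quantify. LoRA freezes a pretrained weight matrix of shape d × k and learns a low-rank update B·A, with B of shape d × r and A of shape r × k, so only r(d + k) parameters are trained for that matrix instead of d·k. The matrix size and rank below are hypothetical illustrations, not figures from FinLoRA.

```python
def full_params(d: int, k: int) -> int:
    """Trainable parameters when fully fine-tuning one d x k weight matrix."""
    return d * k


def lora_params(d: int, k: int, r: int) -> int:
    """Trainable parameters for a rank-r LoRA update B @ A, with B: d x r and A: r x k."""
    return r * (d + k)


def trainable_fraction(shapes: list[tuple[int, int]], r: int) -> float:
    """LoRA trainable parameters as a fraction of full fine-tuning, over adapted matrices only."""
    full = sum(full_params(d, k) for d, k in shapes)
    lora = sum(lora_params(d, k, r) for d, k in shapes)
    return lora / full


# Hypothetical example: one 4096 x 4096 attention projection adapted at rank 8.
print(full_params(4096, 4096))                # 16777216
print(lora_params(4096, 4096, 8))             # 65536
print(trainable_fraction([(4096, 4096)], 8))  # 0.00390625
```

At rank 8, the adapter trains about 0.39% of that matrix's parameters. The fraction covers only the matrices LoRA is applied to; embeddings and other frozen layers are excluded, so whole-model savings depend on which layers are adapted.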

Navigating Risks and Scaling with Onchain Infrastructure

Legal shadows linger. Web scraping sparks lawsuits; Amazon’s talks with publishers hint at a shift toward licensed content, but onchain platforms got there first with decentralized governance. Multi-agent systems, such as projectzero.io’s ledger-backed layers, demand trusted data feeds. Premium sources provide that bedrock, integrated via graph retrieval for contextual depth.

Scalability shines too. OpenDataBay’s multimodal support spans code to video, fitting evolving LLM diets. Developers sidestep negotiation quagmires, grabbing instant access post-payment. For enterprises, this means faster iteration: prototype a trading bot Monday, deploy Tuesday.

Challenges persist, notably dataset discoverability and standardization, but marketplaces are evolving. The Future Today Institute’s trend reports anticipate AI-blockchain fusion; expect agentic workflows that query onchain repositories dynamically. In finance, where milliseconds mean millions, premium datasets that relieve fine-tuning data scarcity aren’t luxuries; they’re necessities for alpha generation.

FineTuneMarket.com exemplifies this maturity: built for ML engineers, it hosts datasets that boost model precision across vision and language tasks. Creators collect royalties on every fine-tune, mirroring the reliability of dividend aristocrats. As 2026 unfolds, this model scales the AI economy, turning data droughts into abundance. Patient allocators, take note: the real returns lie in quality over quantity, secured onchain.