Listen up, AI hustlers: you don’t need a mountain of garbage data to turn a base LLM into a reasoning beast. The LIMA paper blew the doors off that myth, showing that a supervised fine-tuning dataset as small as 1,000 high-quality examples can rival bloated instruction-tuned models. But here’s the kicker: in this cutthroat AI race, scraping shady forums or begging for scraps won’t cut it. You need 1000+ high-quality datasets for supervised fine-tuning, and onchain marketplaces are your golden ticket. Forget legal nightmares and endless negotiations; blockchain-powered platforms deliver premium goods with royalties baked in.

Quality Over Quantity: Lessons from LIMA and Beyond

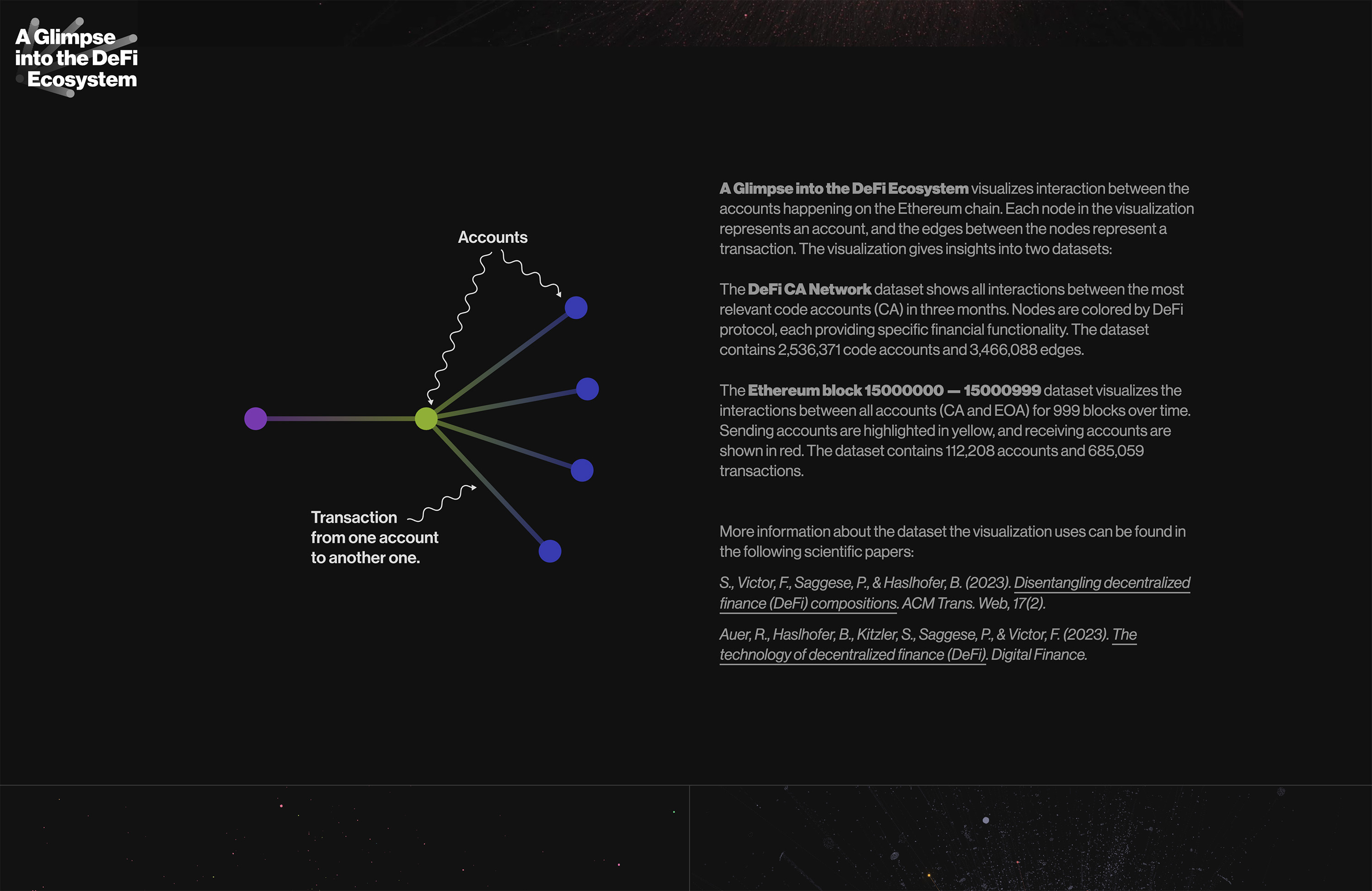

That DeepLearning.AI buzz? Researchers nailed reasoning boosts with just 1,000 curated examples. No joke, a 65B LLaMA model punched way above its weight post-fine-tune. Sebastian Raschka hammered it home on Substack: for minimal LLM fine-tuning, 1,000 examples get you 90% there if they’re gold-tier. Rain Infotech echoes this, starting with 1,000 quality pairs and augmenting smartly. Meanwhile, Kaggle hosts 804 crypto-blockchain Q&A gems, perfect for niche DeFi bots. But scaling to 1,000+? That’s where general-purpose lists shine, like the list of supervised fine-tuning goldmines shared on Reddit’s r/LocalLLaMA.

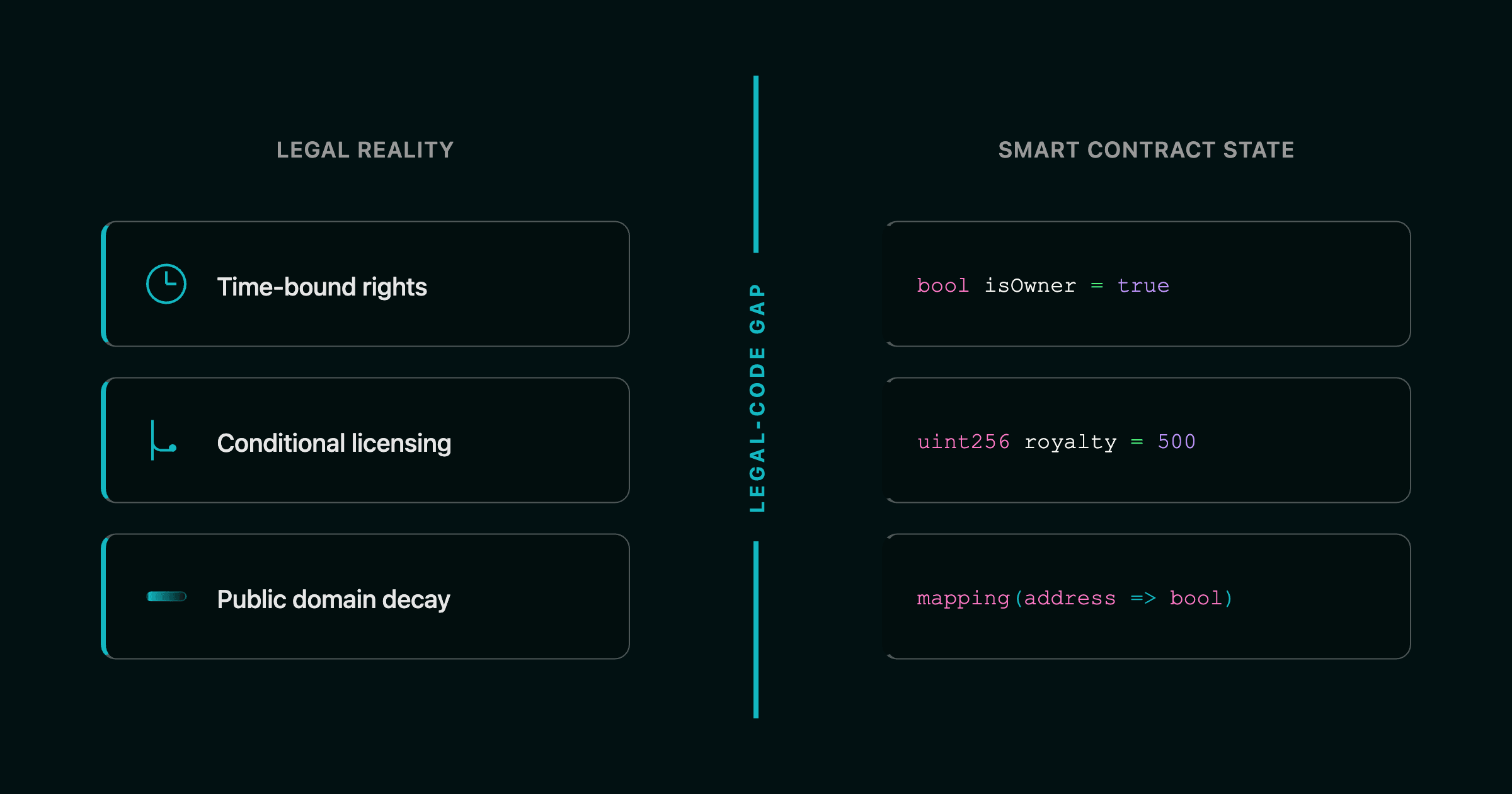

Databricks’ ecommerce QA hybrid? Synthetic wizardry for GPT or Mistral. Point is, royalties on fine-tuning datasets aren’t optional anymore; creators deserve perpetual cuts, and smart platforms enforce them onchain.

Onchain Marketplaces Crushing the Dataset Game

Enter the onchain dataset marketplace revolution. FineTuneMarket.com leads the charge: discover, buy, sell specialized datasets for LLMs, vision models, whatever. Onchain payments mean instant, secure drops, no middlemen skimming. Creators rake in royalties every time their data fires up a model. OpenDataBay? Lightning-fast legal fine-tuning hub: text, images, code, even agentic trajectories. Three steps: exchange, buy, sell. Zero scraping drama.

Key Onchain Platforms and Resources for Sourcing 1000+ High-Quality LLM Fine-Tuning Datasets

| Platform | Data Types | Key Features |
|---|---|---|
| OpenDataBay | Text, Image, Audio, Video, Code, Agentic Trajectories, 3D Spatial, Tabular, Time-Series, Human Feedback, Synthetic | 3-step buy/sell/exchange, no scraping, legal fine-tuning, onchain marketplace |
| DataXID | Synthetic, privacy-safe | Domain-specific, zero leaks, blockchain-based, high-fidelity mirroring |
| FineTuneMarket | LLM/Vision datasets | Onchain royalties, perpetual earnings |
| Shaip | Domain-specific, multimodal | Custom curation, RLHF, error detection, regulatory compliance |
| Amazon Bedrock | Synthetic | Generate data via larger models for fine-tuning smaller models, fully managed service |
| llm-datasets (GitHub) | General-purpose mixtures, domain-specific | Curated list of 1000+ high-quality datasets for supervised fine-tuning |

DataXID’s blockchain synthetic data? It mirrors real stats without exposing PII, boosting domain accuracy while keeping you clear of privacy regs. Shaip curates custom SFT datasets, RLHF, multimodal madness. Amazon Bedrock generates synthetic QA fodder to fine-tune lean models cheap. GitHub’s llm-datasets repo lists mixtures for generalists handling wild queries.

Stacking 1000+ Datasets Like a Pro

Aggressive sourcing starts with mixing sources. Grab Kaggle’s crypto pack, layer Reddit-curated generals, hit OpenDataBay for volume. Aim for diversity: 300 reasoning pairs, 400 domain-specific pairs like ecommerce, 300 synthetic augments. LXT’s expert-led collection scales it pro-level; arXiv papers scream that high-quality text is king for efficient models. Neptune.ai spills: fine-tuning’s printing cash, a driver of $100M+ ARR.
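Here’s a minimal sketch of that 300/400/300 mix in Python. Everything in it is an assumption for illustration: the `build_mix` helper, the pool names, and the placeholder prompt/response records stand in for whatever you actually source, and no marketplace API is involved.

```python
import random

def build_mix(pools, quotas, seed=0):
    """Draw quotas[name] examples from pools[name] and tag each with its source."""
    rng = random.Random(seed)  # fixed seed so the mix is reproducible
    mix = []
    for name, quota in quotas.items():
        pool = pools[name]
        if len(pool) < quota:
            raise ValueError(f"pool {name!r} has {len(pool)} examples, need {quota}")
        # Sample without replacement so no example appears twice from one pool.
        for example in rng.sample(pool, quota):
            mix.append({**example, "source": name})
    rng.shuffle(mix)  # interleave sources so training batches are not grouped by pool
    return mix

def placeholder_pool(label, size):
    """Hypothetical stand-in for a real dataset of prompt/response pairs."""
    return [{"prompt": f"{label} prompt {i}", "response": f"{label} answer {i}"}
            for i in range(size)]

pools = {
    "reasoning": placeholder_pool("reasoning", 500),
    "domain": placeholder_pool("domain", 804),
    "synthetic": placeholder_pool("synthetic", 600),
}
quotas = {"reasoning": 300, "domain": 400, "synthetic": 300}

mix = build_mix(pools, quotas)
print(len(mix))
```

Tagging every example with its `source` costs nothing up front and makes it easy to check the balance later or to pull one source out if it turns out to be noisy.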

5 Killer Onchain Marketplace Wins

- Instant blockchain payments: Ditch slow wires and settle deals in seconds on OpenDataBay, no banks holding you back!
- Perpetual creator royalties: Smart contracts pay creators forever. Buy once, they eat for life on blockchain platforms like DataXID.
- Legal, no-scrape datasets: Score 1000+ clean, consented datasets legally via OpenDataBay and kiss scraping lawsuits goodbye!
- Niche crypto/DeFi domains: Dive into specialized datasets like Kaggle’s 800+ blockchain Q&A pairs, perfect for LLM fine-tuning in crypto wilds.
- Synthetic privacy boosts: DataXID’s blockchain synth data keeps PII locked down while mimicking real stats, so you fine-tune without leaks!

But don’t just hoard data like a paranoid squirrel; validate that stack ruthlessly. Run quick evals on subsets using tools from the llm-datasets repo to spike reasoning scores. Mix in premium, blockchain-backed AI datasets from FineTuneMarket.com, where onchain royalties keep creators pumping out fresh drops. Picture this: your DeFi bot fine-tuned on Kaggle’s 804 crypto Q&As plus synthetic augments from DataXID, crushing market predictions without a whiff of PII leaks.
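Before any eval, a cheap hygiene pass catches the obvious junk. The sketch below is one possible first filter, not the llm-datasets repo’s tooling: the `clean_pairs` helper, the `prompt`/`response` field names, and the 20-character threshold are all assumptions you should tune to your own data.

```python
def normalize(text):
    """Lowercase and collapse whitespace so near-identical prompts compare equal."""
    return " ".join(text.lower().split())

def clean_pairs(pairs, min_response_chars=20):
    """Drop empty, too-short, and duplicate-prompt pairs; report what was dropped."""
    seen = set()
    kept = []
    dropped = {"empty": 0, "too_short": 0, "duplicate": 0}
    for pair in pairs:
        prompt = pair.get("prompt", "").strip()
        response = pair.get("response", "").strip()
        if not prompt or not response:
            dropped["empty"] += 1
            continue
        if len(response) < min_response_chars:
            dropped["too_short"] += 1
            continue
        key = normalize(prompt)
        if key in seen:  # keep only the first pair for each normalized prompt
            dropped["duplicate"] += 1
            continue
        seen.add(key)
        kept.append(pair)
    return kept, dropped

# Hypothetical sample records, for illustration only.
sample = [
    {"prompt": "What is a liquidity pool?",
     "response": "A liquidity pool is a smart contract holding token reserves."},
    {"prompt": "what is a  liquidity pool?",
     "response": "A pool of tokens locked in a contract for trading."},
    {"prompt": "Define gas.", "response": "Fee."},
    {"prompt": "", "response": "Orphan answer with no prompt attached."},
]

kept, dropped = clean_pairs(sample)
print(len(kept), dropped)
```

This only removes exact duplicates after normalization; paraphrased duplicates and factually wrong answers still need a human or model-based review.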

Pitfalls That’ll Tank Your Model: Steer Clear or Bust

Listen, I’ve traded enough rug pulls in crypto to spot dataset disasters a mile away. First trap: homogeneous crap. Stack only one domain, and your LLM chokes on edge cases. Solution? Diversify like a portfolio boss: 40% general reasoning from LIMA-style curations, 30% niche like Databricks ecommerce, 30% synthetic from Shaip or Bedrock. Second: low-quality noise. arXiv nails it: training text must be pristine; junk in, junk out, amplified. Skip free-for-all scrapes; hit onchain dataset marketplace pros for vetted gold.
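To catch the homogeneity trap early, you can audit a tagged dataset against the 40/30/30 target. This is a rough sketch under assumptions: each example carries a `source` tag, and the `audit_mix` helper, source names, and 5-point tolerance are made up for illustration.

```python
from collections import Counter

def audit_mix(examples, targets, tolerance=0.05):
    """Compare each source's share of the dataset with its target share."""
    counts = Counter(ex["source"] for ex in examples)
    total = sum(counts.values())
    report = {}
    for name, target in targets.items():
        share = counts.get(name, 0) / total if total else 0.0
        report[name] = {
            "share": share,
            "target": target,
            "ok": abs(share - target) <= tolerance,
        }
    return report

# Hypothetical skewed dataset: too much general data, too little synthetic.
examples = (
    [{"source": "general"}] * 50
    + [{"source": "niche"}] * 30
    + [{"source": "synthetic"}] * 20
)
targets = {"general": 0.40, "niche": 0.30, "synthetic": 0.30}

report = audit_mix(examples, targets)
for name, row in report.items():
    print(name, round(row["share"], 2), "ok" if row["ok"] else "off target")
```

Sources that appear in the data but not in `targets` still count toward the total, so an unexpected source shows up as everyone else falling short.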

Third, ignore scale at your peril. LXT’s expert-led ops prove scalable collection wins, but bootstrap with 1,000 then iterate. The oversight most people make? Neglecting royalties. Platforms enforcing perpetual cuts via blockchain? That’s the flywheel fueling endless innovation. Neptune.ai’s tea: fine-tuning’s a $100M ARR beast because data loops pay creators to refine.

Monetize Your Edge: From Fine-Tune to Fortune

Here’s where it gets juicy. You’ve stacked your supervised fine-tuning datasets, and your model’s ripping through queries. Now flip it: upload to FineTuneMarket.com, snag onchain payments, and collect perpetual royalties on every redeploy. Creators on OpenDataBay exchange agentic trajectories or time-series gold, and buyers fine-tune Mistral beasts for retail bots. Shaip’s RLHF datasets? Gold for chat UX. DataXID’s privacy-safe synths? Enterprise catnip, compliant and accurate.

Real talk: this ecosystem’s exploding because the 1,000-example result proves lean wins, and onchain scales it viral. GitHub lists arm you with mixtures for handling crypto volatility or multilingual madness, per Rain Infotech. I’ve seen traders fine-tune bots on blockchain Q&As, spotting momentum plays humans miss. High risk, high reward: fortune favors the bold hustling datasets now.

Stack smart, source onchain, dominate the AI arena. Your model’s next reasoning leap awaits, royalties fueling the fire.