In the rapidly evolving landscape of artificial intelligence as of April 2026, computer vision datasets have become the lifeblood of advanced model training, yet their creators often struggle to capture lasting value. Platforms like OpenLedger's Datanets and Bounding.ai are changing this dynamic by integrating blockchain technology, allowing sellers to embed perpetual royalties directly into dataset transactions. This shift promises not just one-time sales but ongoing revenue streams tied to every subsequent use, secured by smart contracts' immutability. For dataset providers, it's a cautious step toward financial resilience in an industry prone to boom-and-bust cycles.

The allure of AI dataset sales lies in their potential to fuel fine-tuned models for applications from autonomous vehicles to medical imaging. Traditional marketplaces treat data as a commodity: upload, sell, forget. But with onchain mechanisms, provenance is etched indelibly on the blockchain. Every annotation, every labeled image contributes to a verifiable record, mitigating risks of duplication or misattribution that plague centralized repositories.

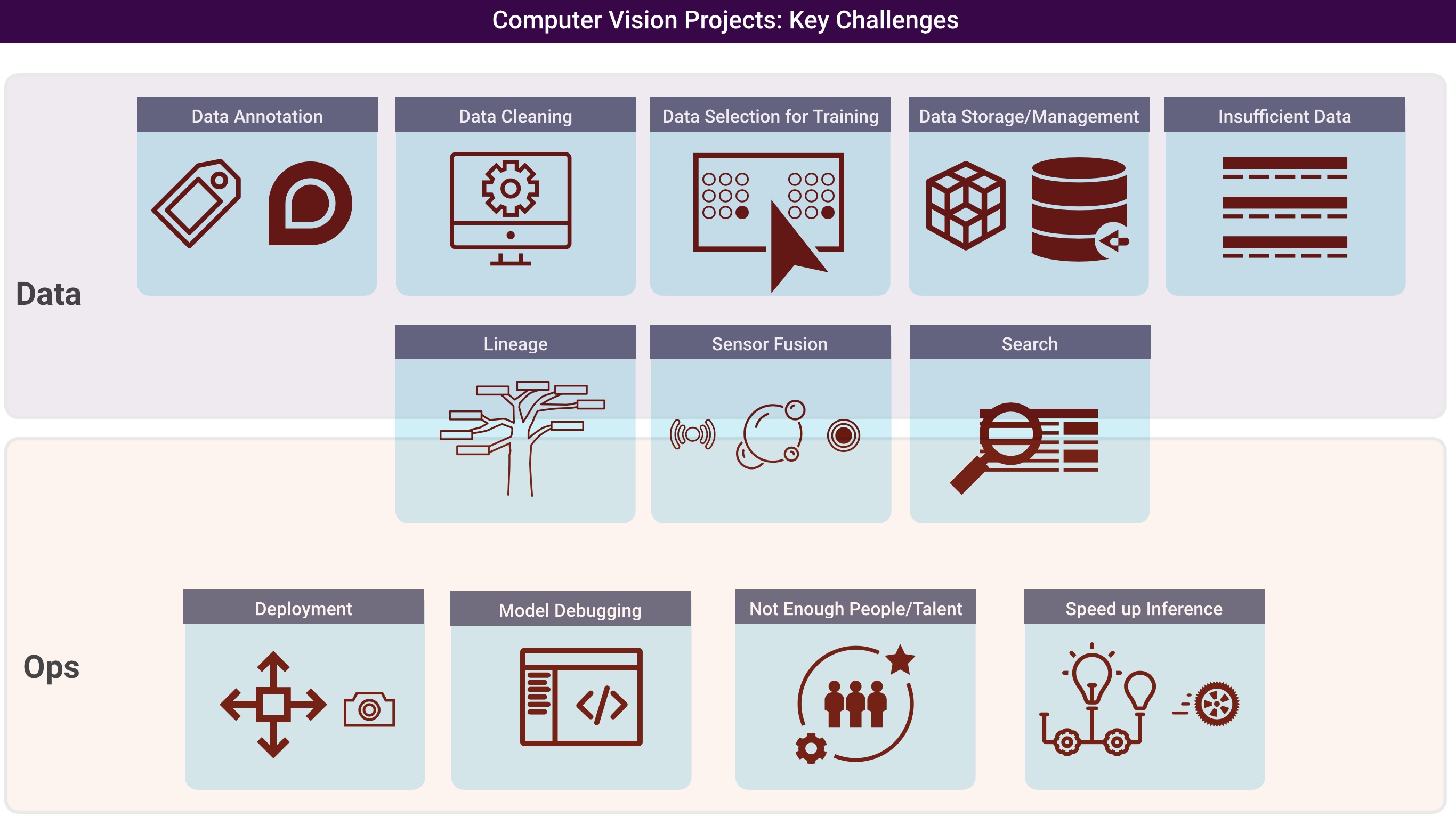

Why Computer Vision Datasets Demand Onchain Protection

Computer vision stands apart due to its voracious appetite for high-quality, domain-specific data. A single mislabeled bounding box can cascade errors through object detection pipelines, eroding model reliability. In 2026, decentralized marketplaces address this by incentivizing precision through royalties. Contributors to OpenLedger's Datanets, for instance, earn based on their data's downstream impact, measured via onchain metrics of model performance gains. This isn't mere speculation; it's a risk-managed approach where value accrues proportionally to utility.
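
To make "value accrues proportionally to utility" concrete, here is a minimal sketch of a pro-rata split. It assumes each contributor already has an integer impact score (for example, a measured metric gain expressed in basis points) and that the pool is denominated in the smallest token unit. The function name and the scoring scheme are illustrative assumptions, not OpenLedger's actual attribution method.

```python
def split_royalty_pool(pool: int, impact_scores: dict[str, int]) -> dict[str, int]:
    """Split an integer royalty pool in proportion to contributor impact scores.

    Hypothetical helper: scores are assumed to be precomputed by some
    attribution process. Integer math avoids rounding drift, and leftover
    units go to the largest remainders so the payouts always sum to the pool.
    """
    if pool < 0:
        raise ValueError("pool must be non-negative")
    payouts = {name: 0 for name in impact_scores}
    positive = {name: s for name, s in impact_scores.items() if s > 0}
    total = sum(positive.values())
    if total == 0:
        return payouts  # nothing measurable to reward

    remainders = []
    for name, score in positive.items():
        share, rem = divmod(pool * score, total)
        payouts[name] = share
        remainders.append((rem, name))

    leftover = pool - sum(payouts.values())
    # Largest remainder first; ties broken by name for determinism.
    for _, name in sorted(remainders, key=lambda t: (-t[0], t[1]))[:leftover]:
        payouts[name] += 1
    return payouts
```

A contributor with three times the measured impact receives three times the payout, and a contributor with zero impact receives nothing, which is the incentive for precise labeling described above.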

Key Onchain Royalty Advantages

- Transparency: Immutable onchain records of dataset contributions, as in OpenLedger's Datanets platform, ensure verifiable attribution for computer vision data sellers.

- Perpetual Income: Smart contracts enable ongoing royalties from data usage in AI training, exemplified by platforms like Codatta and OpenLedger.

- Quality Incentives: Royalties tied to data impact encourage high-quality computer vision datasets, improving model performance on platforms like OpenLedger.

- IP Enforcement: Blockchain smart contracts automate copyright protection and licensing for datasets, reducing disputes in AI marketplaces.

- Marketplace Liquidity: Onchain royalties boost trading of computer vision datasets on platforms like Bounding.ai, fostering efficient markets.

Consider the pitfalls of off-chain sales: opaque licensing leads to underpayment, while data scraping undermines exclusivity. Blockchain flips this script. Smart contracts automate royalty distribution whenever a dataset slice trains a vision transformer or powers inference in edge devices. From my vantage as a risk consultant, this programmable enforcement reduces counterparty default risk to near zero, a rarity in digital asset trades.

Mechanics of Perpetual Royalties in Practice

At the core of blockchain-based perpetual royalty systems is the fusion of NFTs and usage oracles. A computer vision dataset is tokenized as an NFT, with metadata linking to off-chain storage via IPFS for scalability. Usage triggers micropayments: fine-tune a YOLO model on a traffic sign dataset, and a fraction of the fee flows back to the seller automatically. Platforms like Story Protocol extend this with verifiable training traces, so that royalties are paid only on proven contributions.
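
As a rough illustration of the metadata side, the sketch below builds a JSON record that points at an IPFS location and carries a SHA-256 checksum of the dataset archive. The field names are assumptions chosen for readability, not a schema published by any of the platforms named here, and the CID is taken as an input because it is produced by the IPFS upload itself.

```python
import hashlib
import json


def build_dataset_metadata(name: str, archive_bytes: bytes,
                           ipfs_cid: str, royalty_bps: int) -> str:
    """Return token metadata as a JSON string (hypothetical schema).

    ipfs_cid is whatever identifier the IPFS upload returned; this function
    does not compute it. The sha256 field lets a buyer verify the download.
    """
    if not 0 <= royalty_bps <= 10_000:
        raise ValueError("royalty_bps must be between 0 and 10000")
    metadata = {
        "name": name,
        "dataset_uri": f"ipfs://{ipfs_cid}",
        "sha256": hashlib.sha256(archive_bytes).hexdigest(),
        "royalty_bps": royalty_bps,
    }
    # sort_keys keeps the output stable, so the same inputs hash identically.
    return json.dumps(metadata, sort_keys=True)
```

Keeping only the pointer and checksum in the token, and the images themselves off-chain, is what makes this approach workable for large datasets.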

Model training and quality checks occur with full onchain traceability. Contributors earn royalties. - EigenCloud on OpenLedger

This model echoes derivatives markets I advised on for decades: hedge against obsolescence by capturing tail value. Yet caution prevails; oracle reliability remains a vulnerability. Faulty impact attribution could inflate or deflate payouts, demanding rigorous auditing. Bounding.ai mitigates this by focusing on labeled image niches - think satellite imagery or defect detection - where verification is straightforward.
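
One common defense against a single faulty oracle is to take the median of several independent reports and reject outliers. The sketch below assumes each oracle reports an impact figure (say, an mAP gain) as a float; the quorum size and deviation band are hypothetical parameters, and none of this reflects how any specific platform aggregates its feeds.

```python
from statistics import median


def aggregate_impact_reports(reports: list[float],
                             max_deviation: float = 0.25,
                             min_quorum: int = 3) -> float:
    """Return a median impact figure after discarding outlier reports.

    A report is kept only if it lies within max_deviation (relative) of the
    median of all reports. If too few reports survive, refuse to settle
    rather than pay out on unreliable data.
    """
    if len(reports) < min_quorum:
        raise ValueError("not enough oracle reports")
    mid = median(reports)
    tolerance = abs(mid) * max_deviation
    accepted = [r for r in reports if abs(r - mid) <= tolerance]
    if len(accepted) < min_quorum:
        raise ValueError("oracle reports disagree too widely")
    return median(accepted)
```

Refusing to settle when oracles disagree trades some liveness for safety, which fits a capital-preservation stance: a delayed payout is cheaper than an inflated one that has to be clawed back.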

Smart contract templates from AIIP-Chain and similar protocols standardize terms, mapping datasets to unique tokens. Sellers set parameters: 5% royalty on resales, 2% on inference calls. Enforcement is passive, aligning with my mantra: protect capital first. Early adopters report 3x revenue longevity over fiat sales, per IEEE insights on NFT-linked AI assets.
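
The 5% and 2% figures above translate naturally into basis points, which keeps all arithmetic in integers. The following is a minimal off-chain sketch of that calculation; the class and event names are illustrative assumptions and do not come from AIIP-Chain or any other protocol.

```python
from dataclasses import dataclass

BPS_DENOMINATOR = 10_000  # 100% expressed in basis points


@dataclass(frozen=True)
class RoyaltyTerms:
    """Seller-configured rates (hypothetical structure)."""
    resale_bps: int = 500      # 5% on resales
    inference_bps: int = 200   # 2% on inference calls

    def royalty_due(self, event_type: str, amount: int) -> int:
        """Royalty owed on an amount given in the smallest currency unit."""
        rates = {"resale": self.resale_bps, "inference": self.inference_bps}
        if event_type not in rates:
            raise ValueError(f"unknown event type: {event_type}")
        if amount < 0:
            raise ValueError("amount must be non-negative")
        # Floor division: any sub-unit dust stays with the payer.
        return amount * rates[event_type] // BPS_DENOMINATOR


terms = RoyaltyTerms()
print(terms.royalty_due("resale", 1_000_000))     # 50000
print(terms.royalty_due("inference", 1_000_000))  # 20000
```

Freezing the dataclass mirrors the idea that terms are fixed once the token is minted; changing them would mean issuing new terms rather than mutating old ones.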

Navigating Risks in Fine-Tuning Dataset Marketplaces

Entering marketplaces for fine-tuning datasets requires discernment. Hype around crypto AI agents, as critiqued in arXiv's review of token projects, often masks centralization. True decentralization demands liquid secondary markets, which OpenLedger fosters via agent discoverability. Sellers must vet platforms for oracle decentralization and royalty vesting schedules to avoid illiquidity traps.

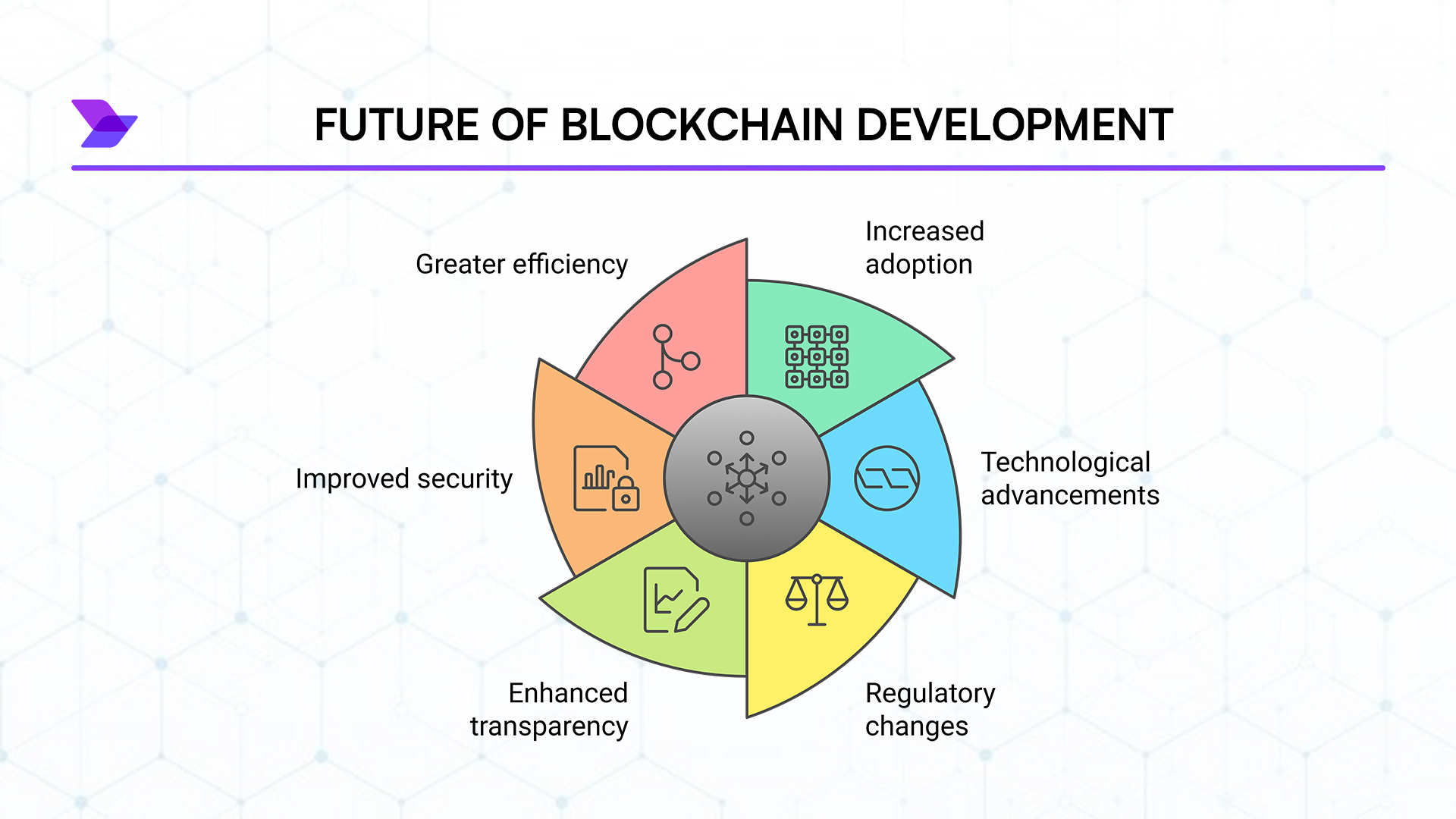

Regulatory headwinds loom; while blockchain ensures pseudonymity, KYC for high-value datasets may emerge. My advice: diversify across chains, starting with Ethereum L2s for cost efficiency. Codatta's vision of royalties on any AI use case underscores the upside, but execution varies. Bounding.ai's focus on computer vision carves a defensible niche, rewarding curators of rare edge cases like low-light pedestrian detection.

From a risk management perspective, the key is stress-testing these platforms against usage volatility. Computer vision datasets for niche applications - drone surveillance or retinal scans - command premiums precisely because scarcity drives demand. Sellers who embed onchain royalties position themselves for compounded returns, but only if the underlying oracle ecosystem matures.
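
A simple way to start stress-testing is to reprice expected royalty income under a handful of usage shocks. The sketch below is deliberately crude: the scenario multipliers, call volume, and per-call price are all made-up inputs for illustration, and a real analysis would model correlation and timing as well.

```python
def stress_test_income(monthly_calls: int, price_per_call: int,
                       royalty_bps: int,
                       shocks: dict[str, float]) -> dict[str, int]:
    """Monthly royalty income under each usage scenario.

    price_per_call is in the smallest currency unit; shocks maps a scenario
    label to a multiplier on baseline call volume (hypothetical values).
    """
    results = {}
    for label, multiplier in shocks.items():
        stressed_calls = round(monthly_calls * multiplier)
        results[label] = stressed_calls * price_per_call * royalty_bps // 10_000
    return results


scenarios = {"base": 1.0, "drawdown": 0.3, "spike": 2.5}
print(stress_test_income(100_000, 1_000, 200, scenarios))
# {'base': 2000000, 'drawdown': 600000, 'spike': 5000000}
```

If the drawdown figure does not cover curation and storage costs, the dataset depends on usage staying near its peak, which is the fragility this kind of test is meant to expose.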

Platforms Leading the Charge in 2026

OpenLedger's Datanets stands out for its onchain recording of every dataset contribution, tying royalties to tangible model improvements. Bounding.ai complements this by specializing in labeled image datasets, creating a vibrant marketplace where developers pay upfront for access while creators reap perpetual shares. These platforms, powered by smart contracts, enforce attribution transparently, a bulwark against the data commoditization that has long eroded margins in AI dataset sales.

Tokenizing your dataset begins with curation: ensure annotations meet industry standards for bounding boxes, segmentation masks, or keypoints. Upload to IPFS, mint as NFT, and configure royalty splits via standardized contracts. Test with a small cohort of fine-tuners to validate oracle feeds. This methodical onboarding minimizes exposure to smart contract exploits, a concern I've flagged in countless audits.
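
The curation step can be partly automated before anything is uploaded. As a sketch, the check below flags bounding boxes that are degenerate or fall outside their image, assuming COCO-style annotations where `bbox` is `[x, y, width, height]`. The function name and the shape of `image_sizes` are assumptions made for this example.

```python
def find_invalid_boxes(annotations: list[dict],
                       image_sizes: dict[int, tuple[int, int]]) -> list[int]:
    """Return ids of annotations with unusable bounding boxes.

    annotations: COCO-style dicts with "id", "image_id", and "bbox".
    image_sizes: maps image_id to (width, height) in pixels.
    """
    invalid = []
    for ann in annotations:
        size = image_sizes.get(ann["image_id"])
        x, y, w, h = ann["bbox"]
        if (
            size is None                 # annotation refers to a missing image
            or w <= 0 or h <= 0          # zero or negative area
            or x < 0 or y < 0            # starts outside the image
            or x + w > size[0]           # spills past the right edge
            or y + h > size[1]           # spills past the bottom edge
        ):
            invalid.append(ann["id"])
    return invalid
```

Running a check like this before minting matters more than usual here, because a token's record of a flawed dataset cannot be quietly corrected afterward.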

Story Protocol's verifiable training traces add another layer, proving your data's lift in metrics like mAP or IoU. Early metrics from these ecosystems show contributors capturing 15-20% more lifetime value than traditional sales, per insights from IEEE and Binance analyses. Yet, I urge caution: secondary market depth varies. Platforms with agent integration, like those supporting AGIX-paid fine-tuned models, offer liquidity edges.
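
For readers less familiar with the metrics mentioned, IoU (intersection over union) is the overlap measure underlying most detection scores, including mAP. A minimal implementation for axis-aligned boxes, assuming the `(x_min, y_min, x_max, y_max)` corner convention, looks like this:

```python
Box = tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def iou(box_a: Box, box_b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    # Corners of the overlapping rectangle, if one exists.
    ix_min = max(box_a[0], box_b[0])
    iy_min = max(box_a[1], box_b[1])
    ix_max = min(box_a[2], box_b[2])
    iy_max = min(box_a[3], box_b[3])

    # Clamp at zero so disjoint boxes yield no intersection.
    inter = max(0.0, ix_max - ix_min) * max(0.0, iy_max - iy_min)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0
```

Two 2x2 boxes offset by one unit in each direction overlap in a 1x1 square, giving an IoU of 1/7. An attribution scheme that pays on "lift" would compare figures like this before and after a dataset is added to training.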

Real-World Impact and Seller Strategies

Imagine curating a dataset of rare industrial defects for quality control vision systems. Sold once on Bounding.ai, it generates royalties each time a manufacturer's fine-tuned model detects flaws in real-time production lines. This tail revenue mirrors the long-dated options I structured for institutions - low upfront cost, asymmetric upside. Diversify holdings across verticals: medical imaging today, AR overlays tomorrow.

| Platform | Focus | Royalty Mechanism | Risk Profile |
|---|---|---|---|
| OpenLedger Datanets | Full traceability | Impact-based micropayments | Low (decentralized oracles) |
| Bounding.ai | Labeled images | Per-use splits | Medium (niche verification) |
| Story Protocol | Verifiable training | Proven contribution shares | Low (audit trails) |

Strategies for sellers evolve with the space. Start small, monitor onchain dashboards for usage patterns, and iterate on datasets based on buyer feedback. My experience with stress testing favors vesting royalties over flat fees; they cushion against model obsolescence as vision architectures advance toward multimodal paradigms.

Critics decry blockchain overhead, but L2 scaling has slashed fees below $0.01 per transaction by 2026. For enterprises, this democratizes access while rewarding independents. Codatta's broader vision hints at royalties spanning all AI uses, but computer vision's tangible outputs make it the proving ground.
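
Even a one-cent fee matters when individual royalties are fractions of a cent, so micropayments are typically accumulated and settled in batches. The sketch below assumes amounts in micro-dollars, treats $0.01 as an upper bound on the fee, and settles only once the fee is at most 1% of the batch. The threshold rule and function name are illustrative assumptions, not a description of any platform's settlement logic.

```python
def settle_in_batches(micropayments: list[int], fee: int,
                      max_fee_bps: int = 100) -> tuple[list[int], int]:
    """Group micropayments so the fee is at most max_fee_bps of each batch.

    Returns (settled batch amounts, unsettled remainder), all in the same
    integer unit as the inputs.
    """
    if max_fee_bps <= 0:
        raise ValueError("max_fee_bps must be positive")
    # Smallest batch for which fee / batch <= max_fee_bps / 10_000 (ceiling).
    threshold = (fee * 10_000 + max_fee_bps - 1) // max_fee_bps
    batches, pending = [], 0
    for amount in micropayments:
        pending += amount
        if pending >= threshold:
            batches.append(pending)
            pending = 0
    return batches, pending


# Seven royalties of $0.40 each, fee of $0.01, fee capped at 1% of a batch.
print(settle_in_batches([400_000] * 7, fee=10_000))
# ([1200000, 1200000], 400000)
```

The trade-off is latency: a tighter fee cap means larger batches and longer waits between payouts, which sellers of low-volume niche datasets should factor in.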

Providers who embrace the dynamics of fine-tuning dataset marketplaces today build moats around their IP. Perpetual royalties aren't a panacea - oracle centralization and regulatory flux demand vigilance - but they recalibrate power from aggregators to originators. In an industry where data fuels trillion-dollar valuations, securing your slice onchain is prudent capital preservation. Forward-thinking creators will thrive here, turning pixels into persistent prosperity.
