In the bustling ecosystem of AI fine-tuning marketplaces, dataset creators have long toiled in the shadows, their invaluable contributions fueling models that power everything from autonomous agents to enterprise analytics. By April 2026, onchain royalties for datasets have flipped this script, embedding perpetual revenue streams directly into blockchain ledgers. Platforms now ensure that every fine-tune and every inference traces back to its data origins, rewarding creators with automated, tamper-proof payouts. This isn't just tech hype; it's a structural shift, turning ephemeral datasets into enduring assets.

Tracing the Roots: From Centralized Data Silos to Blockchain Dataset Marketplaces

Consider the pre-2026 landscape. Dataset creators uploaded premium collections to centralized hubs, only to watch their work commoditized without ongoing credit. Codatta pioneered the counternarrative, tokenizing human knowledge as traceable assets ripe for revenue. Meanwhile, decentralized AI marketplaces like those on SingularityNET evolved, licensing datasets for fine-tuning via programmable smart contracts. OpenLedger took it further with purpose-built chains for AI assets, making datasets discoverable and verifiable.

These foundations exposed a core tension: data's value multiplies post-sale, yet traditional models offered one-time fees. Enter perpetual royalties for AI datasets. Blockchain's immutability now logs every usage, from initial training to downstream adaptations. Platforms like ChainUp's peer-to-peer networks democratize access while enforcing royalties, sidestepping the pitfalls highlighted in economic studies on NFT marketplaces, where secondary sales dilute creator pricing without baked-in residuals.

Pioneering Onchain Royalty Platforms

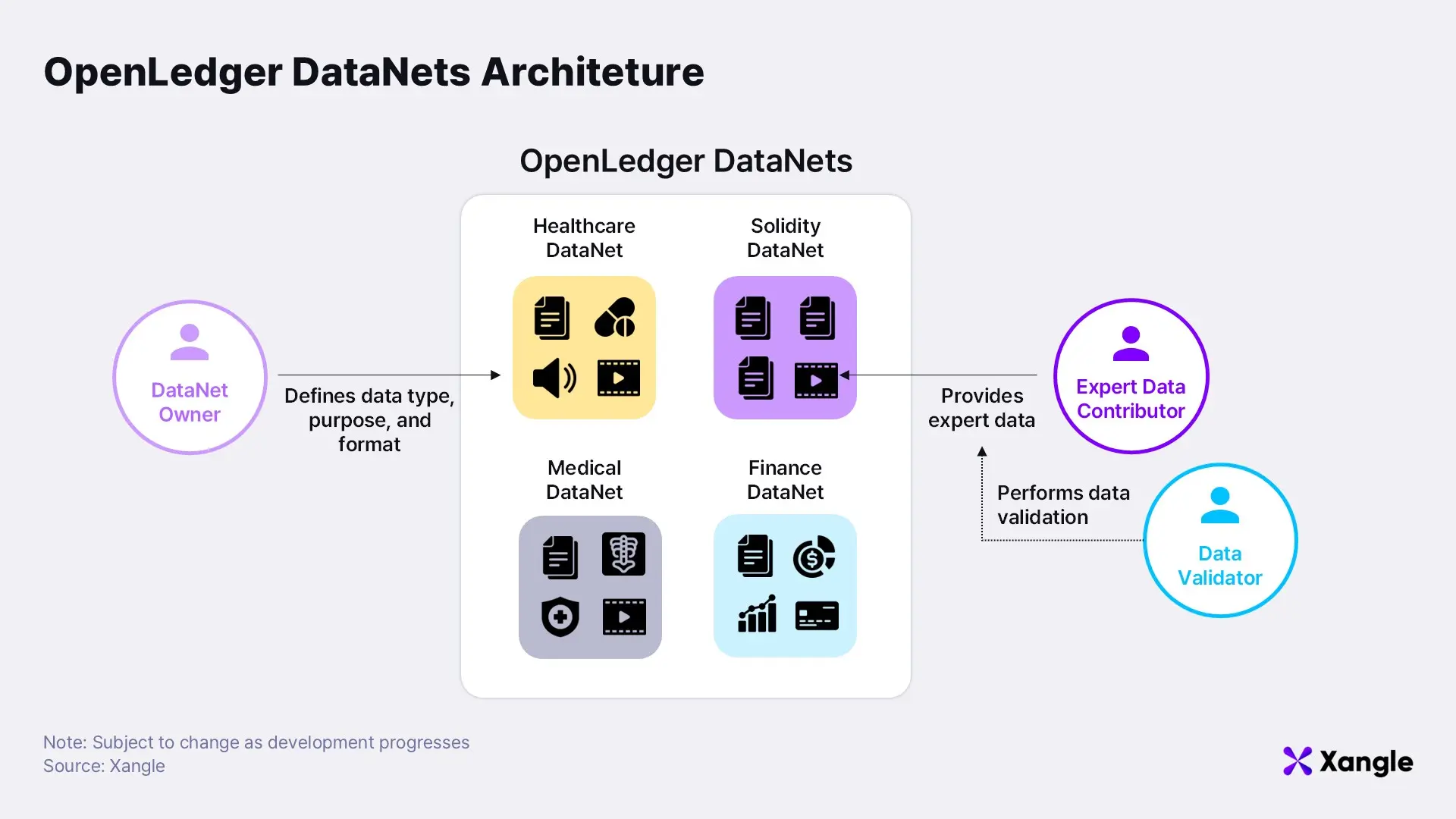

- OpenLedger Datanets: Purpose-built blockchain with Datanets and Proof of Attribution, crediting dataset contributors on-chain for ongoing rewards.

- Codatta Knowledge Tokens: Tokenizes human knowledge as traceable, ownable assets, enabling revenue generation via on-chain royalties.

- SingularityNET Fine-Tuned Agents: Multi-chain marketplace for fine-tuned AI agents paid in AGIX tokens, supporting creator royalties.

Mechanics of Attribution: Proof Systems Powering Fine-Tuning Dataset Royalties

At the heart lies Proof of Attribution, OpenLedger's 2026 innovation within Datanets. Each dataset label, augmentation, or model tweak gets hashed on-chain, creating an indelible audit trail. When a fine-tuned model generates value, smart contracts dissect the provenance, apportioning royalties proportionally. Imagine a computer vision dataset used in an agent's edge deployment: creators earn micro-payments on every inference, scaled by impact metrics embedded in the chain.
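The hashing and proportional split described above can be sketched in a few lines of Python. This is a minimal illustration, not OpenLedger's actual implementation: the record fields, the `contribution_hash` and `apportion` helpers, and the integer impact weights are all assumptions made for the example, and a real deployment would presumably run this logic in a smart contract rather than off-chain.

```python
import hashlib
import json


def contribution_hash(record: dict) -> str:
    """SHA-256 digest of a contribution record, serialized canonically.

    Sorting keys makes the digest independent of field order, so the same
    contribution always maps to the same audit-trail entry.
    """
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def apportion(pool_units: int, impact: dict[str, int]) -> dict[str, int]:
    """Split a royalty pool in proportion to (hypothetical) impact weights.

    Amounts are integers in the smallest payment unit, as on-chain balances
    usually are. Units lost to rounding are handed out one at a time,
    starting with the highest-weight contributor, so the pool is fully paid.
    """
    total = sum(impact.values())
    if pool_units < 0 or total <= 0 or any(w < 0 for w in impact.values()):
        raise ValueError("pool and weights must be non-negative, with a positive total")
    shares = {who: pool_units * w // total for who, w in impact.items()}
    leftover = pool_units - sum(shares.values())
    ranked = sorted(impact, key=lambda who: (-impact[who], who))
    for who in ranked[:leftover]:
        shares[who] += 1
    return shares


if __name__ == "__main__":
    # Hypothetical contribution record for a computer vision dataset.
    print(contribution_hash({"dataset": "cv-edge-v1", "action": "label", "by": "labeler"}))
    print(apportion(1000, {"labeler": 3, "augmenter": 1}))
```

With a pool of 1,000 units and weights of 3 and 1, the labeler receives 750 units and the augmenter 250. How impact weights are actually measured is the hard part and is not modeled here.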

This precision addresses arXiv critiques of AI tokens as mere illusions. True decentralization demands utility beyond hype, and here it delivers: tokenized royalties automate splits among contributors, model trainers, and even AI-generated content stakeholders. No more disputes over licensing; SettleMint's blockchain-AI convergence vision materializes in marketplaces where data providers retain control without custody loss. For dataset creators' earnings, this means recurring income, often 5-15% of downstream fees, fostering incentives for premium, specialized datasets.

Traditional Dataset Royalties vs. OpenLedger's Proof of Attribution in 2026 AI Marketplaces

| Aspect | Traditional (Flat Fees) | OpenLedger Proof of Attribution (5-15% Recurring) | Creator Earnings Impact |
|---|---|---|---|
| Payment Structure | One-time upfront fee per dataset | 5-15% fees on downstream usage (fine-tuning, inference) | Ongoing revenue stream 🚀 |
| Usage Tracking | No post-sale attribution | On-chain via Datanets & Proof of Attribution | Automatic, fair payouts based on impact |
| Revenue Duration | Single payment | Lifetime as models are used & traded | Significantly boosted total earnings |
| Transparency | Opaque downstream value | Blockchain-verified provenance | Trustless, incentivizes quality data |
| Incentives for Creators | Quality rewarded only at sale | High-impact data rewarded long-term | Encourages superior datasets with higher returns |
| Example Model | Flat fee: $1,000/dataset | 5-15% of model revenue (e.g., viral fine-tunes) | 10x+ potential from widespread adoption |
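To put the table's example row in concrete terms, the short Python sketch below computes how much downstream model revenue a recurring royalty needs before it matches, and then exceeds tenfold, the $1,000 flat fee. The function names are illustrative, and the calculation assumes the royalty is a simple percentage of model revenue with no platform fees or gas costs deducted.

```python
from fractions import Fraction

FLAT_FEE = Fraction(1000)  # the table's example flat fee, in dollars


def royalty_earnings(model_revenue: Fraction, rate: Fraction) -> Fraction:
    """Creator income from a recurring royalty at the given rate."""
    return model_revenue * rate


def break_even_revenue(target_income: Fraction, rate: Fraction) -> Fraction:
    """Model revenue needed for royalties to reach a target income."""
    if rate <= 0:
        raise ValueError("royalty rate must be positive")
    return target_income / rate


for pct in (5, 15):
    rate = Fraction(pct, 100)
    match_flat = break_even_revenue(FLAT_FEE, rate)
    ten_times = break_even_revenue(10 * FLAT_FEE, rate)
    print(f"{pct}% royalty: matches flat fee at ${float(match_flat):,.2f}, "
          f"reaches 10x at ${float(ten_times):,.2f} of model revenue")
```

At a 5% rate the royalty matches the flat fee once the model has earned $20,000 and reaches 10x at $200,000; at 15% the thresholds fall to roughly $6,667 and $66,667. Below those revenue levels, the flat fee pays the creator more.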

Model NFTs and the New Economics of AI Assets

Layered atop royalties, Model-as-Asset NFTs redefine ownership. A fine-tuned LLM isn't just code; it's a governable token embedding revenue rights and upgrade protocols. Creators mint these post-training, with datasets' attribution flowing into perpetual splits. MEXC's analysis of AI tokenization nails it: smart contracts trigger on usage, ensuring fair play across the lifecycle.

Critics might point to ScienceDirect's NFT royalty findings, where secondary markets pressure primary pricing. Yet in AI, blockchain dataset marketplaces invert this dynamic. High-quality data commands premiums because royalties compound value over time. The Licensing Executives Society underscores data's primacy in ML; now, marketplaces like FineTuneMarket.com streamline discovery with onchain payments, letting creators capture perpetual value. This isn't dilution; it's amplification, drawing engineers and enterprises to platforms where earnings scale with adoption.

Early adopters report 3x retention in dataset contributions, per LinkedIn insights on Crypto AI Agents. Platforms evolve multi-chain, like SingularityNET's AGIX integrations, blending fine-tuning with agent economies. The result? A vibrant loop: better data begets superior models, which spur more usage, cycling royalties back to origins.

Yet this loop thrives only with robust infrastructure. FineTuneMarket.com exemplifies the blueprint, fusing royalties on fine-tuning datasets with onchain payments for instant, borderless transactions. Dataset creators list premium collections for LLMs or vision models, earning perpetual royalties on every fine-tune or deployment. Blockchain's tamper-proof ledger ensures attribution sticks, even as models fork into agent swarms or enterprise stacks.

Overcoming Hurdles: From Attribution Gaps to Tokenized Equity

Past roadblocks loom large in memory. Centralized silos bred opacity, with creators chasing scraps from one-off licenses. Economic models warned of price erosion in secondary trades, as ScienceDirect unpacked for NFTs. AI tokens faced skepticism, labeled illusions by arXiv deep dives into shaky utilities. But 2026's onchain dataset royalties dismantle these. OpenLedger's Datanets and Proof of Attribution forge ironclad trails, crediting every label's ripple effect. Model NFTs bundle governance, letting holders vote on upgrades while royalties flow upstream to data roots.

Tokenized royalties extend the logic to AI outputs. A fine-tuned agent's generated insights? Smart contracts slice fees to dataset originators, model smiths, and even inference hosts. This stakeholder symphony, echoed in MEXC's Web3 reshape, quells disputes and juices participation. No longer do providers relinquish control, as SettleMint's convergence playbook unfolds: contribute data, monetize indefinitely, retain sovereignty.
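The fee slicing described above can be sketched as a fixed split expressed in basis points. The roles, the 10/60/30 split, and the `split_fee` helper are assumptions chosen for illustration (the 10% dataset share sits inside the 5-15% range cited earlier); the source does not specify how any platform actually divides fees.

```python
BPS_DENOMINATOR = 10_000  # 100% expressed in basis points

# Hypothetical split: 10% to dataset originators, 60% to the model
# trainer, 30% to the inference host.
EXAMPLE_SPLIT = {
    "dataset_originators": 1_000,
    "model_trainer": 6_000,
    "inference_host": 3_000,
}


def split_fee(fee_units: int, split_bps: dict[str, int], dust_to: str) -> dict[str, int]:
    """Divide a usage fee among stakeholder roles by basis points.

    Integer division can leave a few units unassigned; that rounding
    dust is credited to the role named in dust_to so the payouts always
    sum to the fee.
    """
    if fee_units < 0:
        raise ValueError("fee must be non-negative")
    if sum(split_bps.values()) != BPS_DENOMINATOR:
        raise ValueError("split must total 10,000 basis points")
    if dust_to not in split_bps:
        raise ValueError("dust_to must be one of the roles in the split")
    payouts = {role: fee_units * bps // BPS_DENOMINATOR for role, bps in split_bps.items()}
    payouts[dust_to] += fee_units - sum(payouts.values())
    return payouts


if __name__ == "__main__":
    print(split_fee(10_000, EXAMPLE_SPLIT, dust_to="dataset_originators"))
```

Working in integer units and basis points mirrors how token amounts are typically handled in contracts, where floating-point arithmetic is unavailable and every unit must be accounted for.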

Quantifying the Shift: Royalties in Action

Numbers paint the picture sharper. Platforms report dataset contributions surging 4x since royalties kicked in, mirroring LinkedIn's Crypto AI Agents boom. Creators pocket 5-15% residuals on usage fees, compounding as models scale. Traditional sales? Flatlining after upload. Onchain? Exponential tails from network effects.

Traditional vs Onchain Royalties for Datasets

| Aspect | Traditional Royalties | Onchain Royalties |
|---|---|---|
| Revenue Model | One-time fee | Perpetual % splits |
| Attribution | Manual tracking | Proof of Attribution |
| Earnings Potential | Fixed | Scales with usage |
| Creator Retention | Low | 3-4x higher |
| Examples | Centralized hubs | OpenLedger/FineTuneMarket |

Blockchain Council spotlights programmable licenses that tailor royalties to training, fine-tuning, or inference. FineTuneMarket operationalizes this for specialists: upload a niche medical imaging set, watch royalties accrue as hospitals fine-tune compliance models. The math favors boldness; high-fidelity data now yields returns that can rival a trading portfolio's, but predictable and perpetual.
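A programmable license of the kind described above might attach a different royalty rate to each usage type. The following Python sketch shows one way to model that; the `Usage` enum, the `License` class, the dataset identifier, and the specific rates are hypothetical and are not taken from FineTuneMarket or any other platform.

```python
from dataclasses import dataclass
from enum import Enum


class Usage(Enum):
    TRAINING = "training"
    FINE_TUNING = "fine_tuning"
    INFERENCE = "inference"


@dataclass
class License:
    """A dataset license with a per-usage royalty rate in basis points."""
    dataset_id: str
    rate_bps: dict[Usage, int]

    def royalty_due(self, usage: Usage, fee_units: int) -> int:
        """Royalty owed on a usage fee, in the smallest payment unit."""
        if usage not in self.rate_bps:
            raise ValueError(f"{usage.value} is not covered by this license")
        return fee_units * self.rate_bps[usage] // 10_000


# Hypothetical license for a niche medical imaging dataset: 5% on
# training, 10% on fine-tuning, 15% on inference fees.
medical_license = License(
    dataset_id="medical-imaging-v1",
    rate_bps={Usage.TRAINING: 500, Usage.FINE_TUNING: 1_000, Usage.INFERENCE: 1_500},
)

if __name__ == "__main__":
    print(medical_license.royalty_due(Usage.FINE_TUNING, 20_000))
```

Under these assumed rates, a hospital paying a 20,000-unit fine-tuning fee would owe the dataset creator 2,000 units. Leaving a usage type out of `rate_bps` makes that usage unlicensed rather than free, which is a deliberate choice in this sketch.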

Ecosystem Ripple: Agents, Enterprises, and Beyond

SingularityNET's multi-chain pivot underscores the breadth. Fine-tuned agents transact in AGIX, funneling royalties back through attribution chains. Enterprises, per the Licensing Executives Society, crave quality data pipelines; now they tap the speed of blockchain dataset marketplaces without legal quagmires. Crypto AI Agents redefine autonomy, but only with incentivized data flows.

Challenges persist, sure. Scalability strains layer-1s, and oracle feeds must harden for off-chain impact metrics. Yet solutions stack fast: zero-knowledge proofs slim attribution overhead, and cross-chain bridges unify silos. FineTuneMarket's onchain payments sidestep fiat friction, with royalties vesting instantly. Creators, once sidelined, now anchor the stack, their earnings mirroring model prowess.

By late 2026, this framework cements AI's economic spine. Dataset creators build legacies, not listings. Platforms like FineTuneMarket don't just host; they orchestrate value cascades, where every fine-tune echoes in ledgers worldwide. The market's truth? Data endures; royalties eternalize it.
