Picture a digital bazaar where streams of data flow like rivers of gold, each dataset a shimmering token pulsing with the lifeblood of artificial intelligence. Developers no longer scour shadowy forums or beg centralized gatekeepers for fine-tuning datasets. Instead, they trade them on onchain dataset marketplaces as fluidly as swapping Ethereum for Bitcoin, while smart contracts orchestrate instant ownership transfer. This is no distant utopia; it's unfolding now, a symphony in which data providers compose perpetual royalties and machine learning engineers conduct their models toward precision.

In this emergent ecosystem, fine-tuning datasets for language models or computer vision tasks become liquid assets. Platforms like FineTuneMarket.com pioneer instant onchain payments for fine-tuning dataset purchases, giving ML teams immediate access to specialized troves. Blockchain's immutable ledger records an auditable provenance trail, cutting the fraud risks that plague traditional data trades. Creators embed royalties into every token and keep earning as their datasets fuel iteration after iteration across the AI cosmos.
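Provenance of this kind rests on content hashing and hash-linking. The following is a minimal, local sketch that illustrates the idea under stated assumptions. A Python list stands in for the chain. `ProvenanceLedger`, `record`, and `sha256_hex` are hypothetical names, not FineTuneMarket's API. Each entry commits to the dataset's SHA-256 fingerprint and to the previous entry, so editing or reordering any past record breaks verification.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def sha256_hex(data: bytes) -> str:
    """Content fingerprint of a dataset (or of a ledger entry)."""
    return hashlib.sha256(data).hexdigest()


def _entry_hash(dataset_hash: str, owner: str, prev: str) -> str:
    # Canonical JSON (sorted keys) so identical fields always hash identically.
    body = json.dumps({"dataset_hash": dataset_hash, "owner": owner, "prev": prev}, sort_keys=True)
    return sha256_hex(body.encode())


@dataclass
class ProvenanceLedger:
    """Append-only, hash-linked log of ownership records; a toy stand-in for a chain."""
    entries: list = field(default_factory=list)

    def record(self, dataset_hash: str, owner: str) -> str:
        prev = self.entries[-1]["entry_hash"] if self.entries else GENESIS
        entry_hash = _entry_hash(dataset_hash, owner, prev)
        self.entries.append({"dataset_hash": dataset_hash, "owner": owner,
                             "prev": prev, "entry_hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute every link; an edited field or reordered entry fails."""
        prev = GENESIS
        for e in self.entries:
            if e["prev"] != prev:
                return False
            if _entry_hash(e["dataset_hash"], e["owner"], e["prev"]) != e["entry_hash"]:
                return False
            prev = e["entry_hash"]
        return True


if __name__ == "__main__":
    ledger = ProvenanceLedger()
    fingerprint = sha256_hex(b"drone-vision-v1 archive bytes")
    ledger.record(fingerprint, "alice")  # original upload
    ledger.record(fingerprint, "bob")    # later sale
    print(ledger.verify())               # True
    ledger.entries[0]["owner"] = "mallory"
    print(ledger.verify())               # False
```

A real chain adds consensus on top of the hashing, so no single party can rewrite the log and recompute every hash. This sketch shows only the tamper-evidence part.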

The Blockchain-AI Convergence Ignites Dataset Liquidity

Markets crave liquidity, and data has long been the bottleneck. Enter decentralized AI marketplaces, peer-to-peer arenas democratizing AI construction. ChainUp envisions them as fortresses future-proofing innovation, where datasets morph into tradable commodities. The Onchain Foundation heralds this as the 2025 killer combo for businesses: blockchain securing AI's voracious data appetite.

Consider Bittensor, the open-source protocol weaving decentralized machine learning networks. Its SN13 subnet pulses with incentives for dataset contributions, turning raw data into tokenized value. Traders now eye opportunities to buy Bittensor SN13 datasets, speculating on the data veins that power superior models. Meanwhile, Reppo's data broker agents automate discovery, bridging supply and demand in this nascent data-trading DEX for machine learning.

Pioneering Onchain AI Platforms

- FineTuneMarket: Delivers instant onchain payments, giving ML teams seamless access to specialized datasets for language- and vision-model fine-tuning.

- Bittensor: Fuels decentralized ML networks where models collaborate blockchain-style, monetizing datasets like crypto assets for a democratized AI future.

- OpenxAI: Tokenizes datasets via Ethereum's ERC-721/1155, unlocking transparent, secure trading of AI assets in an open, ownership-driven ecosystem.

- PredictChain: Builds decentralized marketplaces for collaborative data sharing, slashing centralization and igniting open AI innovation worldwide.

Pioneers Tokenizing the Data Frontier

Finetuning.AI stands as a beacon, a marketplace for sharing, selling, and snapping up datasets sculpted for model mastery. Here, a vision dataset for autonomous drones or a niche corpus for medical diagnostics changes hands via blockchain, birthing hyper-specialized AIs. OpenxAI elevates this with Ethereum's ERC-721 and ERC-1155 standards, minting non-fungible and semi-fungible tokens that encode ownership and access. Transparency reigns; every transaction is etched into the chain, verifiable by all.
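ERC-1155 lets a single contract track balances for many token ids. A dataset could therefore have a one-of-one ownership token and a fungible pool of access passes side by side. The Python model below mimics only that balance bookkeeping. It is not OpenxAI's contract, and it omits ERC-1155's receiver hooks, batch calls, operator approvals, and events. The id-to-meaning mapping is a hypothetical convention.

```python
from collections import defaultdict


class MultiTokenLedger:
    """Toy model of ERC-1155-style bookkeeping: one contract, many token ids.

    Hypothetical convention for this sketch: id 1 is a one-of-one ownership
    token for a dataset, id 2 is a pool of fungible access passes for it.
    """

    def __init__(self) -> None:
        # balances[token_id][account] -> amount held
        self._balances = defaultdict(lambda: defaultdict(int))

    def mint(self, to: str, token_id: int, amount: int) -> None:
        if amount <= 0:
            raise ValueError("amount must be positive")
        self._balances[token_id][to] += amount

    def balance_of(self, account: str, token_id: int) -> int:
        return self._balances[token_id][account]

    def transfer(self, sender: str, to: str, token_id: int, amount: int) -> None:
        # Like an ERC-1155 transfer, refuse to move more than the sender holds.
        if amount < 0:
            raise ValueError("amount must be non-negative")
        if self._balances[token_id][sender] < amount:
            raise ValueError("insufficient balance")
        self._balances[token_id][sender] -= amount
        self._balances[token_id][to] += amount


if __name__ == "__main__":
    ledger = MultiTokenLedger()
    ledger.mint("creator", 1, 1)    # ownership token
    ledger.mint("creator", 2, 100)  # access passes
    ledger.transfer("creator", "ml_team", 2, 5)
    print(ledger.balance_of("creator", 2), ledger.balance_of("ml_team", 2))  # 95 5
```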

PredictChain pushes further, crafting decentralized bazaars to shatter centralized cloud monopolies. Collaborative data pools emerge, where researchers contribute slices and reap shared bounties. This isn't mere trading; it's ecosystem genesis, fostering open AI unbound by Silicon Valley silos. Nansen's AI-driven onchain tools already dissect these flows, revealing patterns in dataset velocity akin to crypto whale movements.
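Splitting a shared bounty is mostly arithmetic. One common approach is assumed here; it is not taken from PredictChain. Contributors are paid pro rata to the rows they supplied. The largest-remainder method keeps integer payouts, in a token's smallest unit, summing exactly to the bounty. `split_bounty` is a hypothetical helper.

```python
from __future__ import annotations


def split_bounty(bounty: int, contributions: dict[str, int]) -> dict[str, int]:
    """Pay `bounty` (integer token units) pro rata to contributed rows.

    Largest-remainder rounding: everyone gets floor(bounty * rows / total),
    then leftover units go to the largest fractional remainders, so the
    payouts always sum exactly to the bounty.
    """
    total = sum(contributions.values())
    if bounty < 0 or total <= 0 or any(r < 0 for r in contributions.values()):
        raise ValueError("need a non-negative bounty and a positive total contribution")
    payouts: dict[str, int] = {}
    remainders: list[tuple[int, str]] = []
    for who, rows in contributions.items():
        base, rem = divmod(bounty * rows, total)
        payouts[who] = base
        remainders.append((rem, who))
    leftover = bounty - sum(payouts.values())
    # Biggest remainder first; ties broken by name so results are deterministic.
    for _, who in sorted(remainders, key=lambda t: (-t[0], t[1]))[:leftover]:
        payouts[who] += 1
    return payouts


if __name__ == "__main__":
    print(split_bounty(100, {"lab_a": 1, "lab_b": 1, "lab_c": 1}))
    # {'lab_a': 34, 'lab_b': 33, 'lab_c': 33}
```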

Royalties and Incentives: The Economic Engine

At the heart beat perpetual royalties, the composer's cut in our data symphony. Upload a dataset to an onchain marketplace, and smart contracts automate micropayments on every fine-tune. A language-model dataset used in a viral chatbot? Residuals keep flowing. This mirrors music NFTs but for ML fuel, inverting the extractive model in which big tech hoards data windfalls.
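ERC-2981, the Ethereum royalty standard, exposes `royaltyInfo(tokenId, salePrice)`. It returns a receiver and an amount. OpenZeppelin's implementation expresses the rate over a denominator of 10,000, that is, in basis points. The Python sketch below mirrors that integer math. Applying it to per-fine-tune usage fees is an assumption of this example. ERC-2981 only reports a royalty for sales and does not enforce payment, so the `settle_usage_fee` flow and its platform split are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass

FEE_DENOMINATOR = 10_000  # basis points, the default denominator in OpenZeppelin's ERC2981


@dataclass(frozen=True)
class RoyaltyConfig:
    receiver: str  # creator's payout address (a plain string here)
    fee_bps: int   # royalty rate in basis points, e.g. 500 = 5%


def royalty_info(cfg: RoyaltyConfig, sale_price: int) -> tuple[str, int]:
    """Mirror of ERC-2981 royaltyInfo math: price * rate / 10_000, rounded down
    like Solidity integer division. Prices are in the token's smallest unit."""
    if not 0 <= cfg.fee_bps <= FEE_DENOMINATOR:
        raise ValueError("fee_bps out of range")
    return cfg.receiver, sale_price * cfg.fee_bps // FEE_DENOMINATOR


def settle_usage_fee(cfg: RoyaltyConfig, fee: int, platform: str) -> dict[str, int]:
    """Hypothetical per-fine-tune settlement: creator gets the royalty, platform the rest."""
    receiver, cut = royalty_info(cfg, fee)
    return {receiver: cut, platform: fee - cut}


if __name__ == "__main__":
    cfg = RoyaltyConfig(receiver="0xCreator", fee_bps=500)
    print(royalty_info(cfg, 1_000_000))            # ('0xCreator', 50000)
    print(settle_usage_fee(cfg, 999, "platform"))  # {'0xCreator': 49, 'platform': 950}
```

Rounding down means the creator, not the payer, absorbs the fractional unit. That matches how Solidity integer division behaves onchain.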

Bittensor incentivizes quality through subnet competitions, where superior datasets climb leaderboards and command premiums. Token Metrics streams onchain metrics, grading these assets like blue-chip cryptos. Developers wield autonomous agents, as Moralis tutorials demonstrate, to snipe undervalued datasets mid-chain. Gate.com spotlights AI agents reshaping DeFi; extend that to data DEXes, and personalization surges: your model queries the chain for the perfect fit.

Amberdata underscores onchain data's role in algorithmic trading; now apply it to datasets themselves, anticipating surges when a hot sector such as vision booms and draws speculators. Ethical guardrails emerge too, Custodin Systems-style, flagging the privacy pitfalls of relying on LLMs. Yet the momentum builds, a tidal wave cresting toward ubiquitous adoption of onchain dataset marketplaces.

Speculators sharpen their tools, charting dataset trajectories like seasoned crypto traders eyeing altcoin pumps. But beneath the hype swirls complexity: data quality varies wildly, and provenance proofs demand rigorous verification. Platforms counter with AI graders, akin to Token Metrics' proprietary scores, that scan for noise, bias, and staleness before minting tokens. Reppo's data broker agents act as vigilant scouts, negotiating deals across chains so that only prime cuts reach data-trading DEXes for machine learning.
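A grader like this can be sketched with three crude signals:

- duplicate rate, as a proxy for noise;
- label balance, measured as normalized entropy, as one proxy for bias;
- exponential age decay, as a measure of freshness.

The weights, the 180-day half-life, and the `quality_score` function are illustrative assumptions. They are not Token Metrics' scoring or any platform's.

```python
import math
from collections import Counter


def quality_score(texts, labels, age_days, half_life_days=180.0):
    """Toy dataset grader returning a score in [0, 1].

    uniqueness: share of distinct examples (duplicates count as noise)
    balance:    label entropy / max entropy (1.0 = perfectly even classes)
    freshness:  halves every `half_life_days`
    Weights (0.4 / 0.3 / 0.3) are arbitrary choices for illustration.
    """
    n = len(texts)
    if n == 0 or n != len(labels):
        raise ValueError("texts and labels must be non-empty and of equal length")
    uniqueness = len(set(texts)) / n
    counts = Counter(labels)
    k = len(counts)
    if k > 1:
        entropy = -sum((c / n) * math.log(c / n) for c in counts.values())
        balance = entropy / math.log(k)
    else:
        balance = 0.0  # a single class gives no balance signal
    freshness = 0.5 ** (age_days / half_life_days)
    return round(0.4 * uniqueness + 0.3 * balance + 0.3 * freshness, 4)


if __name__ == "__main__":
    print(quality_score(["a cat", "a dog"], ["cat", "dog"], age_days=0))    # 1.0
    print(quality_score(["a cat", "a cat"], ["cat", "cat"], age_days=180))  # 0.35
```

Real graders would use near-duplicate detection and task-specific bias audits rather than exact-match uniqueness and raw label entropy.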

Navigating the Data DEX: Strategies for Builders and Traders

Imagine your ML pipeline as a high-frequency trading desk. Onchain feeds from Nansen or Amberdata light up screens with real-time dataset metrics: usage velocity, fine-tune success rates, royalty yields. Builders deploy autonomous agents, Moralis-style, to bid on undervalued gems in vision or NLP niches. A Bittensor SN13 dataset caught in a buying frenzy signals breakout potential, fueling models that outpace centralized rivals.
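A screening agent of this kind boils down to filtering and ranking. The sketch assumes hypothetical listing fields that an onchain feed might expose: price, weekly uses, fee per use, and fine-tune success rate. It is not a Nansen, Amberdata, or Moralis API. "Yield" here simply means estimated weekly fee flow divided by ask price.

```python
from dataclasses import dataclass


@dataclass
class Listing:
    dataset_id: str
    price: float         # ask price in a settlement token (hypothetical units)
    weekly_uses: int     # usage velocity: fine-tunes per week
    fee_per_use: float   # fee paid per fine-tune
    success_rate: float  # share of fine-tunes that improved the buyer's eval metric


def weekly_yield(x: Listing) -> float:
    """Estimated weekly fee flow per unit of price paid."""
    return x.weekly_uses * x.fee_per_use / x.price


def shortlist(listings, min_success=0.6, top_k=3):
    """Drop listings below a success-rate floor, then rank the rest by weekly yield."""
    eligible = [x for x in listings if x.price > 0 and x.success_rate >= min_success]
    ranked = sorted(eligible, key=weekly_yield, reverse=True)
    return [x.dataset_id for x in ranked[:top_k]]


if __name__ == "__main__":
    market = [
        Listing("nlp-legal", price=100, weekly_uses=10, fee_per_use=1.0, success_rate=0.9),
        Listing("vision-drone", price=50, weekly_uses=10, fee_per_use=1.0, success_rate=0.7),
        Listing("noisy-scrape", price=10, weekly_uses=100, fee_per_use=1.0, success_rate=0.3),
    ]
    print(shortlist(market))  # ['vision-drone', 'nlp-legal']
```

The success-rate floor runs before ranking so that a cheap but noisy dataset cannot top the list on yield alone.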

Comparison of Top Onchain Dataset Platforms

| Platform | Features | Token Standards | Key Benefits |
|---|---|---|---|
| FineTuneMarket | Instant payments, Royalties | Custom (Onchain payments) | Immediate dataset access, Ongoing revenue for providers 💰 |
| Bittensor | Decentralized ML incentives | Native (TAO token) | Democratized ML training, Incentive-aligned contributions 🧠 |
| OpenxAI | Tokenized datasets and models | ERC-721, ERC-1155 | Transparent ownership, Secure Ethereum transactions 🔒 |
| PredictChain | Collaborative pools | Not specified | Decentralized collaboration, Open AI ecosystem 🌐 |

Traders stack positions in dataset baskets, hedging across modalities. The boom in DeFi AI agents that Gate.com chronicles spills into data markets: personalized feeds curate datasets matching your model's DNA. Gateways like ChainUp fortify these DEXes, blending liquidity pools with oracle-verified quality scores. The result? Frictionless discovery, where a drone engineer's query yields tokenized payloads in seconds.
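Liquidity pools on many DEXes price swaps with a constant-product rule, x * y = k. Assume a hypothetical pool that pairs a dataset's access tokens with a settlement token. The quote below follows the same integer formula as Uniswap v2's `getAmountOut`, whose 0.3% fee is expressed here as 30 basis points. Oracle-fed quality scores are not modelled, and `get_amount_out` is a local re-implementation, not a library call.

```python
def get_amount_out(amount_in: int, reserve_in: int, reserve_out: int, fee_bps: int = 30) -> int:
    """Constant-product quote: tokens received for `amount_in`, after a swap fee.

    Same integer shape as Uniswap v2's getAmountOut (997/1000 there, i.e. a
    0.3% fee); here the fee is in basis points. Rounds down, favouring the pool.
    """
    if amount_in <= 0 or reserve_in <= 0 or reserve_out <= 0:
        raise ValueError("amount and reserves must be positive")
    if not 0 <= fee_bps < 10_000:
        raise ValueError("fee_bps out of range")
    amount_in_with_fee = amount_in * (10_000 - fee_bps)
    numerator = amount_in_with_fee * reserve_out
    denominator = reserve_in * 10_000 + amount_in_with_fee
    return numerator // denominator


if __name__ == "__main__":
    # Hypothetical pool: 1,000,000 settlement-token units vs 1,000,000 access-token units.
    reserve_stable, reserve_access = 1_000_000, 1_000_000
    out = get_amount_out(1_000, reserve_stable, reserve_access)
    print(out)  # 996
    # The product of reserves never falls after a swap (the fee makes it grow).
    assert (reserve_stable + 1_000) * (reserve_access - out) >= reserve_stable * reserve_access
```

Larger trades against the same reserves receive progressively worse prices. That slippage is what keeps a thin dataset pool from being drained in one swap.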

Ethical Currents and Privacy Tides

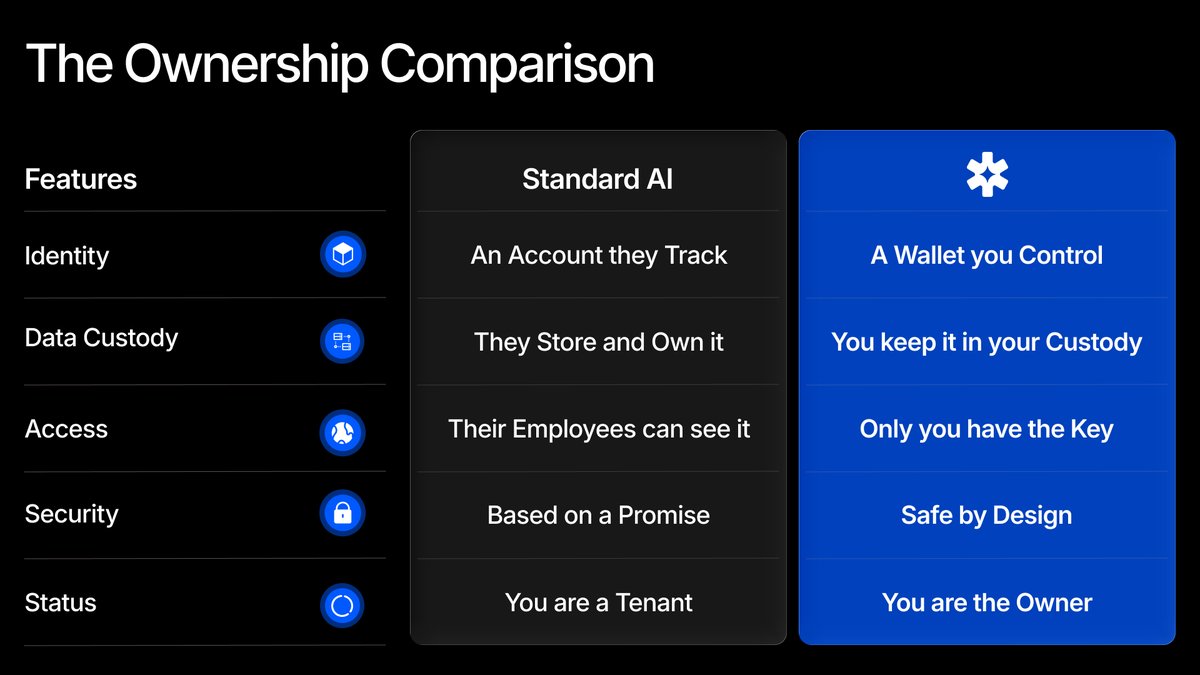

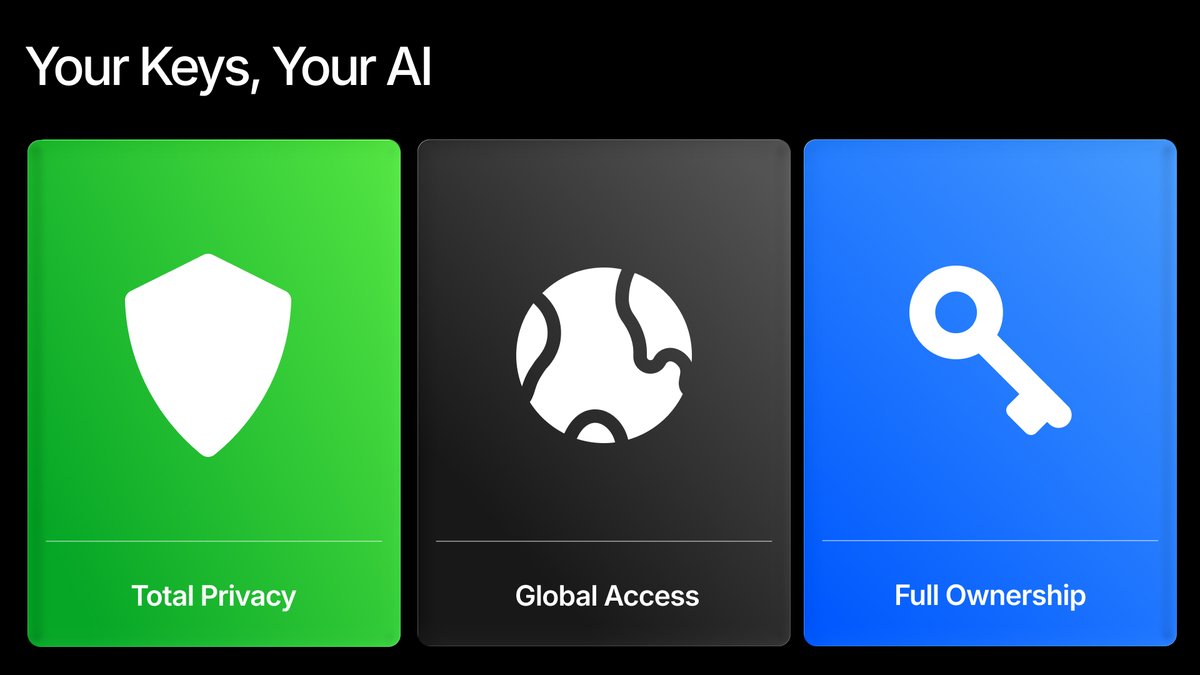

Custodin Systems reminds us: unchecked LLMs breed shadows. Onchain marketplaces illuminate them by baking privacy into protocols. Zero-knowledge proofs let sellers prove properties of sensitive data without revealing it during trades, while differential privacy adds calibrated noise so that no individual record can be singled out. Creators opt in to audits, earning premium badges that boost token floors. This isn't afterthought compliance; it's woven into the economic fabric, rewarding stewards over scrapers.
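Differential privacy adds calibrated noise to query results rather than editing records. The textbook Laplace mechanism, sketched below, answers a count query with noise of scale sensitivity / ε. This is a generic illustration, not any marketplace's implementation, and the function names are hypothetical. Naive floating-point sampling like this is known to leak information in edge cases. Production systems therefore rely on hardened samplers from vetted DP libraries.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Laplace(0, scale) sample: the difference of two i.i.d. exponentials with mean `scale`."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(true_count: int, epsilon: float, rng: random.Random,
                  sensitivity: float = 1.0) -> float:
    """Laplace mechanism for a counting query.

    Adding or removing one record changes a count by at most `sensitivity` (1),
    so noise with scale sensitivity / epsilon gives epsilon-differential privacy.
    Smaller epsilon means more noise and stronger privacy.
    """
    if epsilon <= 0 or sensitivity <= 0:
        raise ValueError("epsilon and sensitivity must be positive")
    return true_count + laplace_noise(sensitivity / epsilon, rng)


if __name__ == "__main__":
    rng = random.Random(42)
    # e.g. "how many records in this medical corpus mention condition X?"
    print(round(private_count(1_234, epsilon=0.5, rng=rng), 2))
```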

Yet sovereignty beckons. PredictChain's vision dismantles cloud fiefdoms, empowering global contributors. A farmer in Kenya tokenizes crop imagery, fueling precision-agriculture models; residuals fund the next harvest. Bittensor subnets democratize validation, crowd-sourcing quality sans gatekeepers. The Onchain Foundation's 2025 playbook unfolds: businesses thrive by tapping this confluence, where AI's hunger meets blockchain's feast.

Visionaries glimpse the horizon: datasets as foundational primitives, composable like Lego in the AI stack. Autonomous agents orchestrate fine-tunes, querying DEXes for optimal blends, iterating models in perpetual evolution. Royalties cascade through DAOs, funding open bounties for frontier data like quantum simulations or exascale climate sets.
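One way such a cascade could be wired is assumed here for illustration. Each dataset lists the upstream datasets it derives from, each with a basis-point share. Payments are routed recursively up that graph. Any recipient, including a DAO treasury, simply appears as a creator. `cascade_royalties` and the graph format are hypothetical. The graph must be acyclic, or the recursion never terminates.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Hypothetical format: dataset -> [(upstream dataset, share in basis points), ...]
Upstream = Dict[str, List[Tuple[str, int]]]


def cascade_royalties(amount: int, dataset: str, upstream: Upstream,
                      creator: Dict[str, str]) -> Dict[str, int]:
    """Route a payment for `dataset` up its derivation graph.

    Each upstream dataset receives its basis-point share of what reached the
    child, and that share is split again further upstream; whatever is left
    goes to the dataset's own creator. The graph must be acyclic.
    """
    payouts: Dict[str, int] = defaultdict(int)

    def route(amt: int, ds: str) -> None:
        parents = upstream.get(ds, [])
        if sum(bps for _, bps in parents) > 10_000:
            raise ValueError(f"upstream shares of {ds} exceed 100%")
        passed = 0
        for parent, bps in parents:
            share = amt * bps // 10_000  # rounds down; dust stays with this dataset's creator
            passed += share
            route(share, parent)
        payouts[creator[ds]] += amt - passed

    route(amount, dataset)
    return dict(payouts)


if __name__ == "__main__":
    upstream = {
        "chatbot-ft": [("legal-corpus", 2_000)],      # 20% flows to the corpus it was built from
        "legal-corpus": [("public-filings", 5_000)],  # half of that flows further upstream
    }
    creator = {"chatbot-ft": "carol", "legal-corpus": "bob", "public-filings": "open-data-dao"}
    print(cascade_royalties(10_000, "chatbot-ft", upstream, creator))
    # {'open-data-dao': 1000, 'bob': 1000, 'carol': 8000}
```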

In this bazaar, value accrues to originators, not intermediaries. ML engineers ascend from data beggars to asset allocators, with portfolios diversified across onchain vaults of fine-tuning datasets. Speculative froth tempers into mature markets, with index funds tracking sector surges. Reppo agents evolve into full-fledged quants, alpha-hunting across chains.

The symphony swells. Platforms like FineTuneMarket conduct the opening movement, Bittensor improvises the crescendo, OpenxAI harmonizes standards. As onchain analysis tools sharpen, from Nansen's ML probes to Token Metrics' grades, traders decode the signals. Gateways to DeFi intelligence pave the path, where data fuels not just models, but self-sustaining economies.

Stake your claim in this unfolding epic. Mint your dataset, fine-tune your edge, trade the future. The blockchain-AI convergence isn't a trend; it's the new bedrock, where datasets dance as eternal assets in the grand marketplace of minds.
