In the fast-paced world of AI development, fine-tuning large language models with LoRA has become the smart choice for developers chasing efficiency without sacrificing performance. Costs hover between $50-$300 per training run, making it accessible even for smaller teams, yet the real edge comes from premium LoRA fine-tuning datasets. Blockchain marketplaces like FineTuneMarket.com are transforming how we buy, sell, and monetize these assets, with onchain payments ensuring instant, secure deals and perpetual royalties for creators. As we hit 2026, let's unpack the datasets fueling this boom.

LoRA's Edge in LLM Fine-Tuning Efficiency

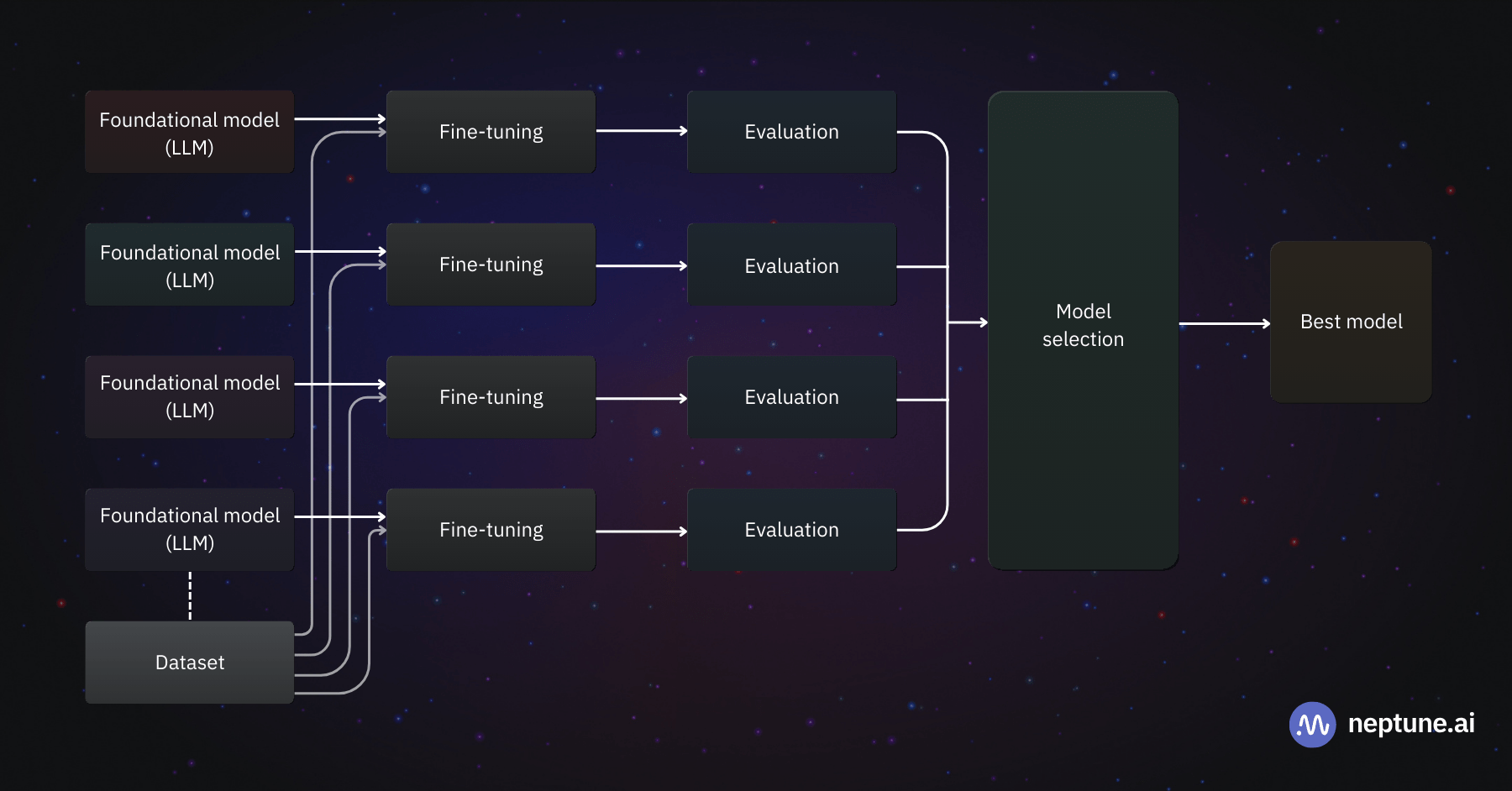

Low-Rank Adaptation, or LoRA, slashes the compute demands of fine-tuning by injecting lightweight adapter modules into pre-trained models. Instead of updating billions of parameters, you train a pair of small low-rank matrices for each targeted layer and leave the base model frozen. This approach shines in specialized tasks, from financial analysis to code generation. Recent benchmarks, like those from FinLoRA, back it up: 46 rounds of fine-tuning across 19 datasets show LoRA outperforming traditional methods on professional workloads. And with costs locked at $50-$300 per run, ROI calculators peg custom AI builds at $4800-$12500, a steal compared to full fine-tuning marathons.
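To make the adapter idea concrete, here is a minimal pure-Python sketch of a single LoRA-style layer. It is an illustration only: the class name `LoRALinear`, the helper `matvec`, and the toy 8x8 weight are assumptions for this example, not code from any library or from the FinLoRA project. The frozen weight `W` is left untouched, and only the two small matrices `A` and `B` would be trained.

```python
import random


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


class LoRALinear:
    """Frozen weight W (d_out x d_in) plus a low-rank update scale * B @ A."""

    def __init__(self, weight, rank, alpha, seed=0):
        rng = random.Random(seed)
        d_out, d_in = len(weight), len(weight[0])
        self.weight = weight          # frozen base weights, never updated
        self.scale = alpha / rank     # scaling applied to the adapter output
        # A starts small and random, B starts at zero,
        # so the adapter is a no-op before any training happens.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(d_out)]

    def forward(self, x):
        base = matvec(self.weight, x)
        update = matvec(self.B, matvec(self.A, x))
        return [b + self.scale * u for b, u in zip(base, update)]

    def trainable_params(self):
        return len(self.A) * len(self.A[0]) + len(self.B) * len(self.B[0])


# Toy example: an 8x8 identity matrix stands in for a pre-trained weight.
W = [[1.0 if i == j else 0.0 for j in range(8)] for i in range(8)]
layer = LoRALinear(W, rank=2, alpha=4)
x = [float(i) for i in range(8)]

print(layer.forward(x) == matvec(W, x))  # True: B is zero, so nothing changes yet
print(layer.trainable_params(), "trainable vs", 8 * 8, "frozen")
```

At this toy size the savings look modest, but the adapter grows with rank times (d_in + d_out) while the frozen weight grows with d_in times d_out, which is where the efficiency comes from at real model widths.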

Practically speaking, LoRA lets you adapt models like Mistral-7B for niche dominance. Projects such as LoRA Land demonstrate this, with 25+ fine-tuned variants beating GPT-4 in targeted apps. For machine learning engineers, it's a no-brainer: prepare your dataset, configure adapters, train, and deploy faster.

Premium Datasets Driving LoRA Success Stories

Premium LLM datasets are the secret sauce here, curated for quality over quantity. Take the FinLoRA project: 19 diverse financial datasets, including novel XBRL ones from 150 SEC filings. These benchmark general and pro-level tasks, perfect for LoRA adapters in trading bots or compliance tools. Then there's DataXID, a blockchain-native platform generating synthetic data that matches real stats while dodging privacy pitfalls, ideal for regulated industries.

Don't sleep on the 2025 Trend LLM Knowledge Base 10K either, packing 10,000 chunks on crypto, DeFi, SaaS, and e-commerce. Its metadata screams RAG compatibility, streamlining LoRA workflows. Hugging Face's FineWeb, a 15-trillion-token beast from CommonCrawl, and its educational sibling FineWeb-Edu, deliver filtered gold for broad or niche tuning. BigCode's The Stack v2 rounds it out with 67TB of code across 600+ languages, turbocharging developer-focused LLMs.

Key Premium Datasets for LoRA Fine-Tuning

| Dataset | Domain | Size | LoRA Fit |
|---|---|---|---|
| FinLoRA | Finance | 19 datasets | High |
| DataXID | Synthetic/Privacy | Variable | Medium-High |
| 2025 Trend 10K | Crypto/DeFi/SaaS | 10K chunks | Excellent |
| FineWeb-Edu | Education | Trillions of tokens | Strong |
| The Stack v2 | Code | 67TB | Developer Gold |

Blockchain Marketplaces Revolutionize LoRA Dataset Trading

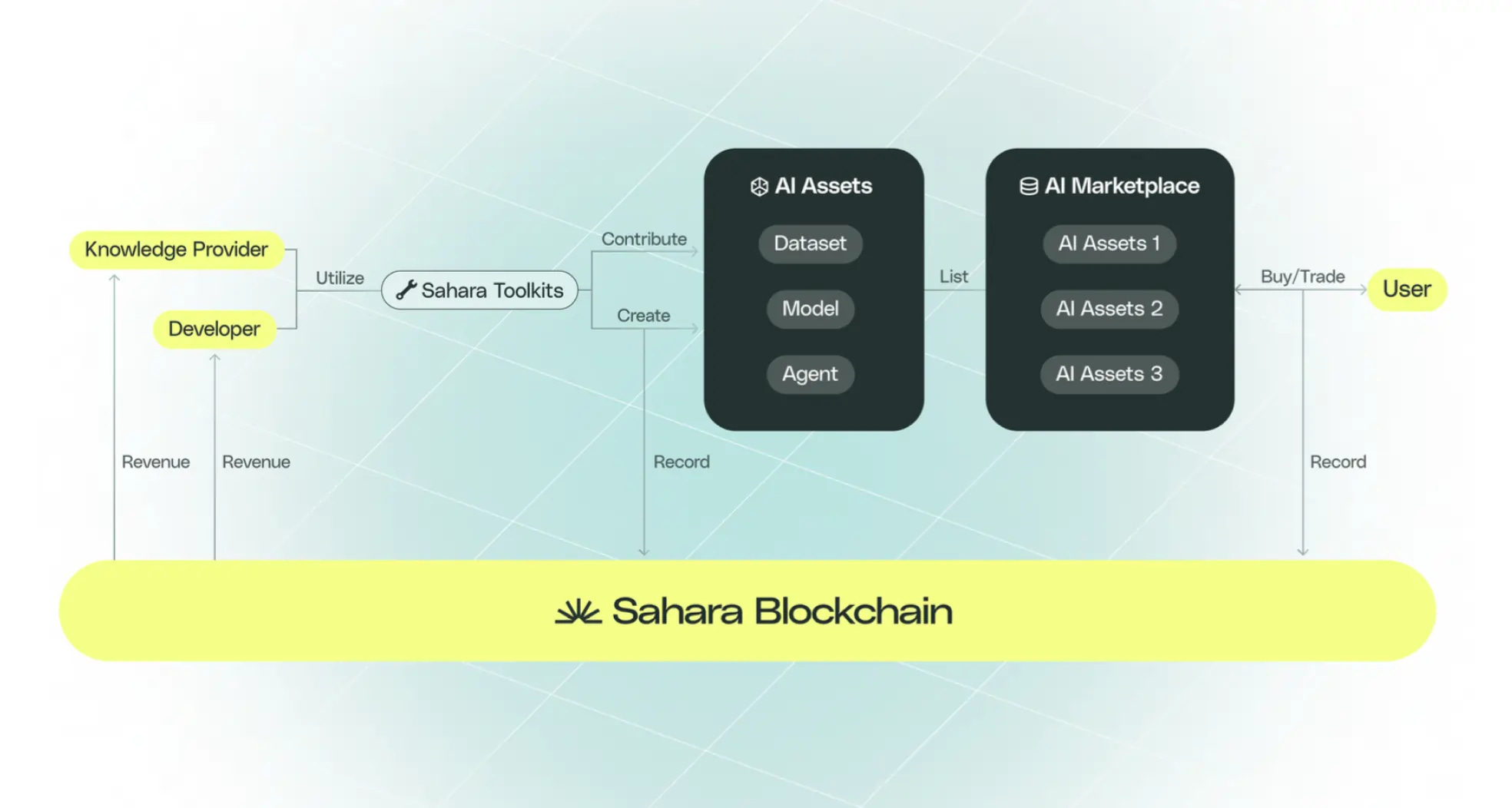

Enter onchain dataset marketplaces, where blockchain AI datasets trade seamlessly. These platforms let creators and developers buy and sell LoRA datasets, with smart contracts handling royalties in perpetuity. FineTuneMarket.com leads, optimized for AI devs and enterprises looking for datasets to fine-tune LLMs in 2026. Imagine discovering a FinLoRA-style pack, paying via crypto, and fine-tuning instantly, all while contributors earn passively.

This ecosystem fosters innovation: buyers get verified, high-fidelity data; sellers tap global reach. In 2026, as LoRA matures, these marketplaces cut discovery friction, boosting model performance across domains. Swing into action with these resources, and watch your LLMs swing ahead of the pack.

Security layers like zero-knowledge proofs on these onchain dataset marketplaces keep data provenance crystal clear, letting you verify dataset integrity before committing tokens. No more shady downloads or IP disputes; everything's auditable on-chain. For swing traders like me, who've built tools to spot momentum in crypto and equities, this is game-changing. Pair a FinLoRA dataset with LoRA adapters, and your LLM starts predicting market swings with precision, all while costs stay pinned at $50-$300 per training run.
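A far simpler check than a zero-knowledge proof, but one that captures the "verify before you train" idea, is comparing a file's SHA-256 digest with the digest the seller published. The sketch below assumes the marketplace exposes such a digest as a hex string; that assumption, along with the function names `sha256_of_file` and `matches_published_digest`, is hypothetical and not a documented feature of any platform named here.

```python
import hashlib
import hmac


def sha256_of_file(path, chunk_size=1 << 20):
    """Stream a file through SHA-256 so large datasets never sit fully in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published_digest(path, published_hex):
    """Return True when the local file hashes to the published digest.

    `published_hex` is assumed to be a hex string copied from the listing
    or its on-chain record (a hypothetical field for this sketch).
    """
    return hmac.compare_digest(sha256_of_file(path), published_hex.strip().lower())
```

If the digests differ, the download was corrupted or is not the file that was listed, and it is worth stopping before spending a training run on it.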

Hands-On: Swing into LoRA Fine-Tuning with Blockchain Datasets

Enough theory; let's get practical. I've swung through seven years of markets, from quant desks to crypto volatility, and the workflow boils down to speed and edge. Start by scouting premium LLM datasets on platforms like FineTuneMarket.com. Filter for LoRA-ready packs: high-quality, domain-specific, with metadata for seamless integration. Grab something like the 2025 Trend LLM Knowledge Base 10K for DeFi insights, pay on-chain, and download instantly.

5 Steps to LoRA Fine-Tuning

1. Search datasets by domain: finance (FinLoRA) or code (The Stack v2) on blockchain marketplaces like DataXID.
2. Review on-chain provenance & benchmarks from FinLoRA GitHub and arXiv papers.
3. Buy with crypto for instant access & royalties on platforms like DataXID.
4. Load into Hugging Face, config LoRA adapters for efficient training.
5. Train at $50-$300/run, deploy your edge model with ROI gains.
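Between buying a dataset and loading it for training, there is usually a small cleanup pass. The following is a rough sketch of that step, assuming the purchased pack arrives as a list of chunks with `text` and `topic` fields. Those field names, the `min_chars` threshold, and the output layout are all hypothetical; a real pack will document its own schema.

```python
import json


def to_training_records(chunks, min_chars=40):
    """Turn raw chunks into de-duplicated {"text": ...} training records."""
    seen = set()
    records = []
    for chunk in chunks:
        body = " ".join(chunk.get("text", "").split())  # collapse stray whitespace
        if len(body) < min_chars or body in seen:
            continue  # skip fragments and exact duplicates
        seen.add(body)
        topic = chunk.get("topic", "general")
        records.append({"text": f"Topic: {topic}\n\n{body}"})
    return records


def write_jsonl(records, path):
    """Write one JSON object per line (JSON Lines)."""
    with open(path, "w", encoding="utf-8") as handle:
        for record in records:
            handle.write(json.dumps(record, ensure_ascii=False) + "\n")
```

A JSON Lines file like this can then be read by the Hugging Face `datasets` library with `load_dataset("json", data_files=...)`, which is one common way to cover the "load into Hugging Face" step.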

Once acquired, prep is straightforward. Load Mistral-7B or Llama variants, slap on a LoRA config with rank=16, alpha=32, and target key modules like q_proj and v_proj. Datasets like The Stack v2 shine here, offering 67TB of code to draw on for LLMs that debug smarter, much like the GPT-4-beating variants from LoRA Land. FineWeb-Edu adds educational depth for explanatory models, while DataXID's synthetic privacy magic suits enterprise compliance without the legal sting.
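To see what rank=16 on q_proj and v_proj actually buys you, the sketch below counts the trainable adapter parameters using only the standard library. The layer shapes and layer count are assumptions taken from the publicly released Mistral-7B-v0.1 configuration (hidden size 4096, 32 layers, grouped-query attention with 1024-wide key/value projections), and `LoraSettings` is a hypothetical stand-in for this example. In Hugging Face PEFT the same three values would go into `LoraConfig` as `r`, `lora_alpha`, and `target_modules`.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LoraSettings:
    """Hypothetical container mirroring the settings named in the text."""
    rank: int = 16
    alpha: int = 32
    target_modules: tuple = ("q_proj", "v_proj")


# Assumed (in_features, out_features) per attention projection,
# based on the public Mistral-7B-v0.1 config.
MISTRAL_7B_SHAPES = {
    "q_proj": (4096, 4096),
    "k_proj": (4096, 1024),
    "v_proj": (4096, 1024),
    "o_proj": (4096, 4096),
}
NUM_LAYERS = 32  # assumed number of transformer blocks


def lora_trainable_params(settings, shapes, num_layers):
    """Each adapted projection adds rank * (in_features + out_features) weights."""
    per_layer = sum(
        settings.rank * (shapes[name][0] + shapes[name][1])
        for name in settings.target_modules
    )
    return per_layer * num_layers


settings = LoraSettings()
print(lora_trainable_params(settings, MISTRAL_7B_SHAPES, NUM_LAYERS))  # 6815744
print(settings.alpha / settings.rank)  # 2.0, the scaling applied to the adapter output
```

Under these assumptions the adapters come to roughly 6.8 million trainable weights against a base model of about 7 billion, which is why a run can fit in the budget quoted above.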

My take? Skip bloated general crawls; premium picks like these deliver outsized swings in performance. FinLoRA's XBRL sets, pulled from real SEC filings, turned a basic model into a filing analyzer rivaling pro tools. ROI? Stratagem's calculator nails it: full custom AI lands at $4800-$12500, but LoRA slashes that timeline from weeks to hours. Enterprises scaling this report 3x faster deployment, perfect for riding 2026's AI market momentum.

Real-World Wins and Trader Edges

Picture this: a DeFi trader fine-tunes on 2025 Trend 10K data, blending RAG with LoRA for real-time yield predictions. Or devs leveraging The Stack v2 to code-gen audited smart contracts. FinLoRA benchmarks back it up, with 194 evaluation rounds showing LoRA crushing baselines on pro finance tasks. These aren't hypotheticals; they're deployable edges in a crowded field.

Trader ROI Scenarios

| Dataset | Use Case | Training Cost | Perf Gain |
|---|---|---|---|
| FinLoRA | XBRL Trading Signals | $150 | 28% accuracy |
| 2025 Trend 10K | DeFi Yield Opt | $200 | 35% precision |
| The Stack v2 | Smart Contract Audit | $100 | 42% code pass rate |
| DataXID | Privacy-Compliant Chat | $75 | 22% compliance score |

Blockchain marketplaces amplify this by democratizing access. Creators embed royalties in smart contracts, earning on every resale or derivative use. Buyers? You get perpetual updates, community-voted refinements, and tokenized ownership. In crypto's wild swings, this stability is gold. I've seen similar dynamics in momentum tools: capture the data swing, manage the adaptation sting.
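The royalty mechanics can be illustrated with a little integer arithmetic. This is only a sketch of how a per-sale split might be computed, assuming rates are expressed in basis points (1 bps = 0.01%) and amounts in a token's smallest unit. The function name `split_sale` and the example rates are hypothetical, and no marketplace mentioned here is confirmed to use this exact scheme.

```python
def split_sale(price_units, royalty_bps, marketplace_fee_bps=0):
    """Split a sale price into creator royalty, marketplace fee, and seller proceeds.

    Integer math mirrors how on-chain code avoids floating point;
    any rounding remainder stays with the seller.
    """
    if price_units < 0:
        raise ValueError("price must be non-negative")
    if royalty_bps < 0 or marketplace_fee_bps < 0:
        raise ValueError("rates must be non-negative")
    if royalty_bps + marketplace_fee_bps > 10_000:
        raise ValueError("rates cannot exceed 100% combined")
    royalty = price_units * royalty_bps // 10_000
    fee = price_units * marketplace_fee_bps // 10_000
    return {
        "creator_royalty": royalty,
        "marketplace_fee": fee,
        "seller_proceeds": price_units - royalty - fee,
    }


# Example: a resale of 1,000,000 units with a 5% royalty and a 2.5% fee.
print(split_sale(1_000_000, royalty_bps=500, marketplace_fee_bps=250))
```

Because the same split runs on every resale, the creator keeps earning without taking part in later trades, which is the "earning on every resale" dynamic described above.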

Looking ahead to late 2026, expect QLoRA hybrids and on-chain training proofs exploding usage. Platforms will integrate one-click LoRA pipelines, ROI dashboards, and cross-chain dataset swaps. For devs, researchers, enterprises: stock up on blockchain AI datasets now. Fine-tune ruthlessly, trade the edges they unlock, and stay ahead of the AI herd. Your models will thank you with sharper predictions and fatter returns.
