In the accelerating AI landscape of 2026, fine-tuning large language models with parameter-efficient methods like LoRA and QLoRA has shifted from experimental labs to production pipelines, especially for blockchain applications. As a veteran investor who's navigated 18 years of macroeconomic cycles, I see premium LoRA fine-tuning datasets and QLoRA datasets emerging as high-value assets, akin to specialized commodities that yield compounding returns. Platforms like FineTuneMarket.com are pioneering onchain dataset marketplaces, where creators earn perpetual royalties through blockchain-secured transactions, fostering a sustainable ecosystem for AI developers and enterprises.

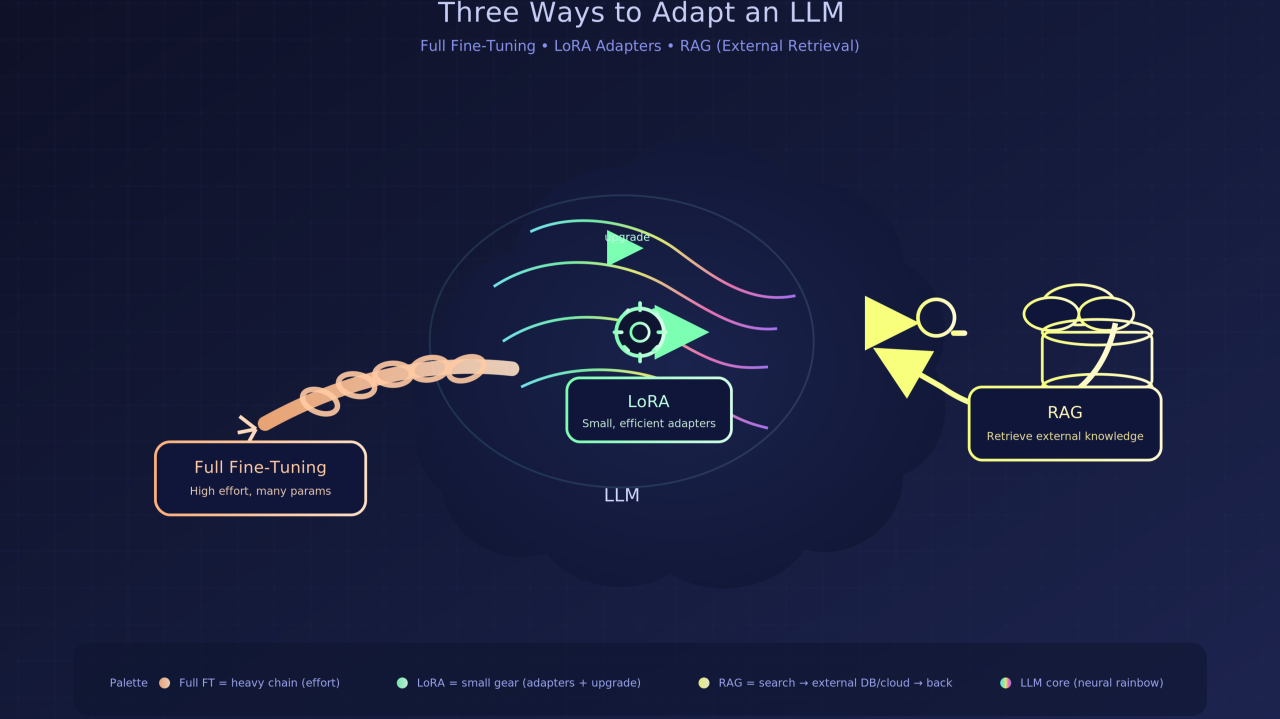

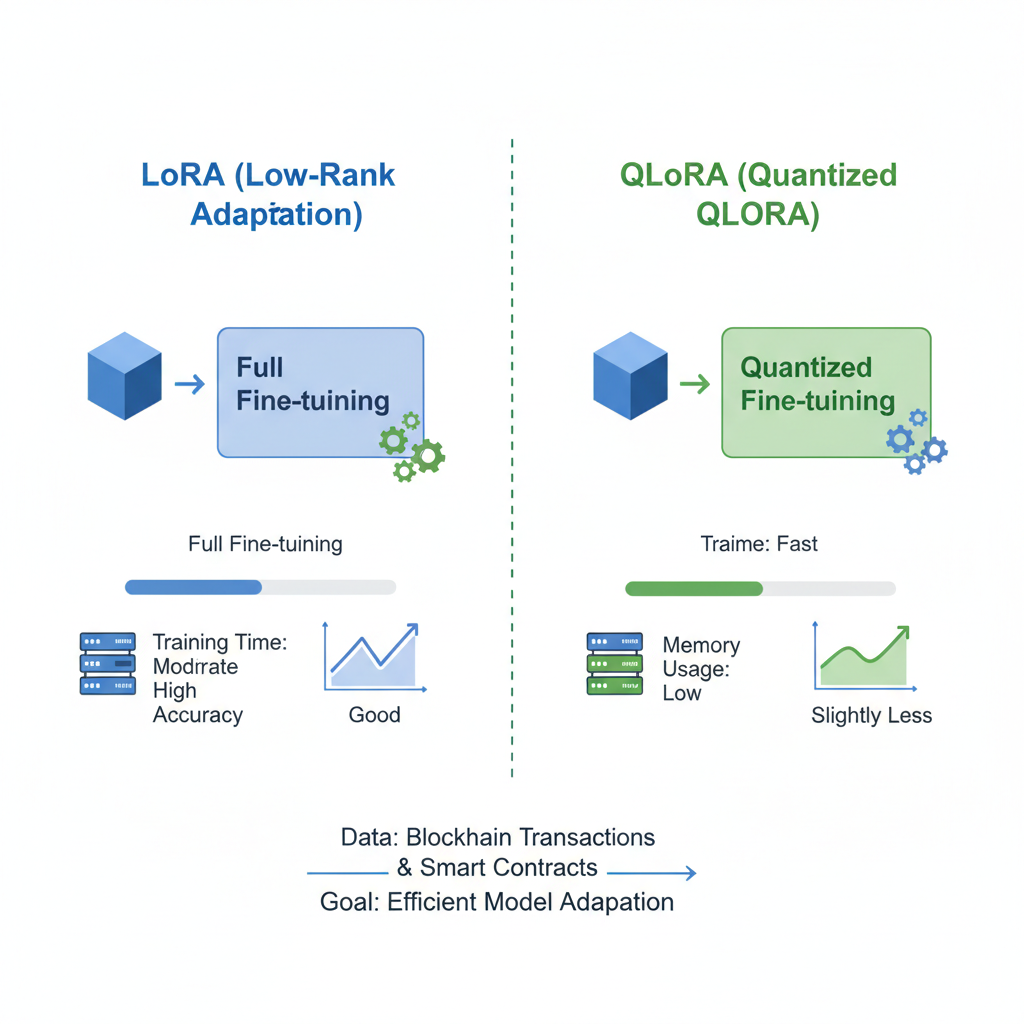

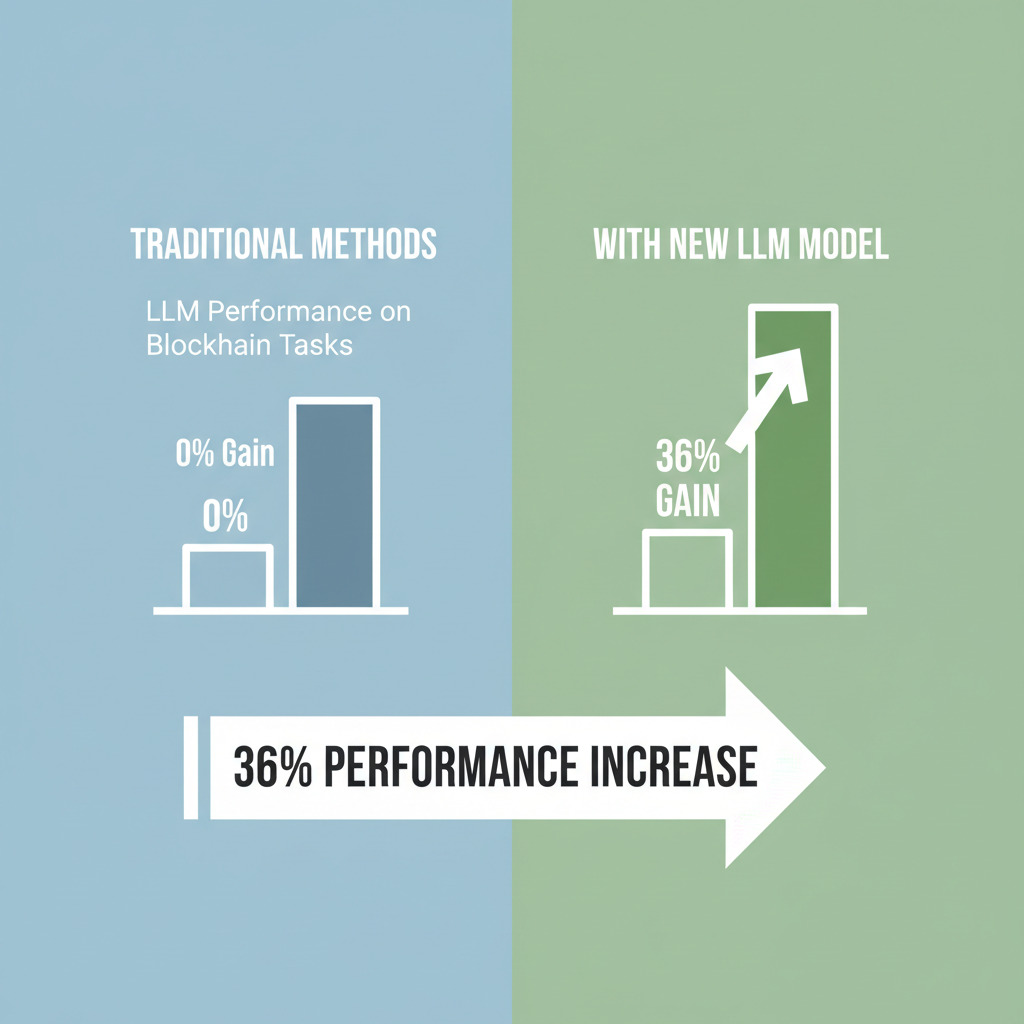

Low-Rank Adaptation, or LoRA, freezes the base model's weights and injects small trainable low-rank matrices into transformer layers, so only a tiny fraction of parameters is updated. This typically cuts the number of trainable parameters by 99% or more, shrinking gradient and optimizer memory enough to make fine-tuning feasible on consumer hardware. QLoRA builds on this by quantizing the frozen base model to 4 bits, further compressing the memory footprint without crippling performance. Recent benchmarks, such as those from FinLoRA, show 36% average gains over base models on financial tasks using 19 curated datasets, including novel XBRL analysis datasets built from 150 SEC filings. These premium LLM datasets aren't generic scrapes; they're meticulously prepared for domain-specific precision.
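To make the mechanism concrete, here is a minimal pure-Python sketch of the LoRA update from the original LoRA paper, h = W0·x + (alpha/r)·B·A·x, using tiny hypothetical dimensions. It shows two things: with B initialized to zero, the adapted layer starts out identical to the frozen base layer; and for a 4096×4096 weight, a rank-8 adapter trains about 0.4% of that matrix's parameters. Real libraries such as Hugging Face PEFT do this with tensors; the helper names and list-based matrices here are illustrative only.

```python
import random


def matvec(m, v):
    """Multiply a matrix (list of rows) by a vector (list)."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def lora_forward(W0, A, B, x, alpha, r):
    """h = W0 x + (alpha / r) * B (A x); W0 is frozen, only A (r x k) and B (d x r) train."""
    base = matvec(W0, x)              # frozen pretrained path
    delta = matvec(B, matvec(A, x))   # low-rank update path
    scale = alpha / r
    return [b + scale * dl for b, dl in zip(base, delta)]


def lora_param_counts(d, k, r):
    """(full matrix params, LoRA adapter params, adapter fraction) for one d x k weight."""
    full = d * k
    lora = r * (d + k)
    return full, lora, lora / full


d, k, r, alpha = 4, 6, 2, 4
random.seed(0)
W0 = [[random.gauss(0, 1) for _ in range(k)] for _ in range(d)]
A = [[random.gauss(0, 0.01) for _ in range(k)] for _ in range(r)]  # small random init
B = [[0.0] * r for _ in range(d)]  # zero init, so the update starts at exactly zero
x = [1.0] * k

print(lora_forward(W0, A, B, x, alpha, r) == matvec(W0, x))  # adapter is a no-op at init
print(lora_param_counts(4096, 4096, 8))
```

Once training moves B away from zero, the update is nonzero, but the optimizer only ever touches A and B.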

Why LoRA and QLoRA Dominate Specialized Fine-Tuning Workflows

Strategically deployed, LoRA beats full fine-tuning on speed and cost, particularly for blockchain AI datasets. Practical guides report strong results from hyperparameter choices such as rank r=256 with alpha=128. QLoRA shines on limited-VRAM setups, making it ideal for solo developers prototyping DeFi analyzers or NFT valuation models. The FinLoRA project underscores this: its open-source benchmarks show LoRA variants excelling at professional financial tasks, from sentiment analysis to regulatory compliance. LowRA pushes further, enabling sub-2-bit fine-tuning with up to 50% memory savings and lowering the hardware bar for anyone fine-tuning on specialized data.
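In standard LoRA the adapter's update B·A is multiplied by alpha / r before it is added to the frozen weights, so the r and alpha values quoted above interact: r=256 with alpha=128 scales the update by 0.5. A minimal sketch; the pairs other than (256, 128) are hypothetical comparisons, not recommendations from the cited guides.

```python
def lora_scaling(alpha: float, r: int) -> float:
    """Factor applied to the low-rank update B @ A in standard LoRA."""
    return alpha / r


# (r, alpha) pairs: the first is the one quoted in the text, the rest are for comparison.
for r, alpha in [(256, 128), (64, 128), (16, 32)]:
    print(f"r={r:>3}, alpha={alpha:>3} -> scale {lora_scaling(alpha, r)}")
```

Holding alpha fixed while raising r therefore shrinks each adapter direction's contribution, which is one reason r and alpha are usually tuned together rather than independently.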

Consider the workflow: load a base model like Mistral, attach LoRA adapters via Hugging Face's PEFT library, and train on high-quality datasets. The trade-off is memory versus simplicity: QLoRA uses less memory, while plain LoRA is simpler and avoids quantization overhead. Lightning AI experiments confirm QLoRA's memory savings, typically at the cost of longer training time, and both methods demand quality data to avoid overfitting. In blockchain contexts, datasets enriched with onchain transaction histories or smart contract audits become indispensable, transforming generic LLMs into oracles for Web3 intelligence.
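The workflow above can be sketched with Hugging Face `transformers`, `bitsandbytes`, and `peft`. This is a minimal setup under stated assumptions, not a tuned recipe. The model id, the target module names (typical for Mistral-style attention), and the dropout value are assumptions. The r/alpha values are the ones quoted in this article. The heavy imports sit inside the function, so you can inspect the settings without a GPU.

```python
"""Minimal QLoRA setup sketch (assumes transformers, peft, bitsandbytes, torch installed)."""

MODEL_ID = "mistralai/Mistral-7B-v0.1"  # assumption: any causal LM on the Hub could go here

# LoRA adapter settings. r/alpha follow the values quoted in the article (r=64 is the
# lighter option it mentions for consumer GPUs); dropout and target_modules are assumptions.
LORA_KWARGS = {
    "r": 256,
    "lora_alpha": 128,
    "lora_dropout": 0.05,
    "target_modules": ["q_proj", "k_proj", "v_proj", "o_proj"],
    "bias": "none",
    "task_type": "CAUSAL_LM",
}


def load_qlora_model(model_id: str = MODEL_ID):
    """Load a 4-bit quantized base model and wrap it with trainable LoRA adapters."""
    import torch
    from peft import LoraConfig, get_peft_model, prepare_model_for_kbit_training
    from transformers import AutoModelForCausalLM, BitsAndBytesConfig

    bnb_config = BitsAndBytesConfig(
        load_in_4bit=True,                      # frozen base weights stored in 4 bits
        bnb_4bit_quant_type="nf4",              # NormalFloat4, the data type QLoRA introduced
        bnb_4bit_use_double_quant=True,         # also quantize the quantization constants
        bnb_4bit_compute_dtype=torch.bfloat16,  # matmuls run in bf16 after dequantization
    )
    model = AutoModelForCausalLM.from_pretrained(
        model_id, quantization_config=bnb_config, device_map="auto"
    )
    model = prepare_model_for_kbit_training(model)
    model = get_peft_model(model, LoraConfig(**LORA_KWARGS))
    model.print_trainable_parameters()  # only the adapter weights are trainable
    return model
```

The returned model can be passed to a standard training loop such as `transformers`' `Trainer`. Dropping `quantization_config` (and the k-bit preparation step) turns this into plain LoRA on an unquantized base model.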

The Imperative for Premium Datasets in Onchain Ecosystems

Raw data floods the market, but premium datasets for LoRA and QLoRA fine-tuning stand out through curation and annotation. FinLoRA's 19 financial sets exemplify this, delivering scalable financial intelligence at low cost. Blockchain marketplaces amplify value: immutable provenance ensures dataset integrity, while onchain payments enable instant, borderless access. FineTuneMarket.com leads here, catering to machine learning engineers who want LoRA-compatible dataset packs. Creators earn royalties on every downstream use, mirroring the inflation-hedging assets in my fixed income playbook: perpetual yields over volatile hype.
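The royalty mechanics can be illustrated with a toy ledger. This is a hypothetical, off-chain Python model, not FineTuneMarket.com's actual contract. Each paid use credits the creator a fixed share expressed in basis points. The arithmetic uses integers in the currency's smallest unit, as onchain contracts typically do to avoid floating-point rounding. The 5% rate and the prices are made up for the example.

```python
from dataclasses import dataclass, field


@dataclass
class RoyaltyLedger:
    """Toy model of per-use royalties (hypothetical; not any platform's real contract)."""

    royalty_bps: int  # creator share in basis points: 500 bps = 5%
    balances: dict = field(default_factory=dict)

    def record_use(self, creator: str, price_units: int) -> int:
        """Credit the creator's share of one paid use; amounts are integer smallest units."""
        royalty = price_units * self.royalty_bps // 10_000  # floor division, no floats
        self.balances[creator] = self.balances.get(creator, 0) + royalty
        return royalty


ledger = RoyaltyLedger(royalty_bps=500)
for _ in range(3):  # three hypothetical downstream purchases at 2,000 units each
    ledger.record_use("dataset_creator", 2_000)
print(ledger.balances)
```

Rounding down per use is a deliberate simplification; a real contract would also need rules for dust amounts and for who receives the remainder.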

This model flips traditional data economies. Buyers gain tailored blockchain AI datasets for QLoRA workflows, boosting model accuracy in volatile crypto markets. Sellers monetize intellectual property indefinitely, aligning incentives for innovation. As macroeconomic pressures like inflation persist, investing in these datasets hedges against commoditized AI, prioritizing long-term vision over short-term noise.

Navigating Hyperparameters and Hardware for Peak Efficiency

Fine-tuning success hinges on balancing LoRA's r and alpha values; empirical tests favor higher ranks for complex tasks like XBRL parsing. QLoRA limits quantization error, preserving fidelity on GPUs with 24GB of VRAM or less. Production pipelines now pair these techniques with serving frameworks, deploying fine-tuned models for real-time blockchain analytics. The strategic edge? Buying QLoRA-ready datasets from vetted onchain dataset marketplaces accelerates this cycle and minimizes trial-and-error costs.
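A rough weight-only estimate shows why 4-bit QLoRA fits comfortably on a 24GB GPU. The sketch assumes a model of roughly 7B parameters (Mistral 7B is in this class) and counts only the frozen base weights. Activations, the LoRA adapters and their optimizer states, and the small per-block quantization constants all come on top, so treat the results as lower bounds.

```python
def weight_memory_gb(n_params: float, bits_per_param: float) -> float:
    """Memory for model weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_param / 8 / 1e9


N_PARAMS = 7e9  # assumption: a ~7B-parameter base model

for label, bits in [("16-bit base (LoRA)", 16), ("4-bit base (QLoRA)", 4)]:
    print(f"{label}: {weight_memory_gb(N_PARAMS, bits):.1f} GB")
```

At 16 bits the weights alone take about 14 GB, leaving little headroom on a 24GB card once activations are added. At 4 bits they take about 3.5 GB.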

Yet the real leverage comes from pairing these techniques with premium datasets for LoRA and QLoRA fine-tuning, where quality trumps quantity in every epoch. In my experience tracking commodity cycles, undervalued inputs drive outsized returns; the same holds for datasets engineered for blockchain nuances like tokenomics modeling or oracle validation.

LoRA vs. QLoRA: Comparison for Blockchain LLM Fine-Tuning

| Aspect | LoRA | QLoRA |
|---|---|---|
| 💾 Memory Usage | Higher (unquantized 16- or 32-bit base weights) | Lower (4-bit quantization, up to 50% reduction with advancements like LowRA) |
| 📈 Performance Gains | Strong, e.g., 36% avg over base models (FinLoRA benchmarks on financial tasks) | Comparable to LoRA with minimal loss despite quantization |
| ⚙️ Hardware Requirements | High-end GPUs with ample VRAM (e.g., 40GB+) | Consumer-grade GPUs (e.g., single 24GB RTX) |
| 🔗 Ideal Blockchain Tasks | Complex simulations, large dataset training (e.g., market prediction, portfolio optimization) | Real-time edge tasks (e.g., transaction anomaly detection, smart contract auditing) |

Marketplaces such as FineTuneMarket.com transform this equation. Imagine sourcing blockchain AI datasets with verified onchain provenance: transaction graphs from Ethereum mainnet, annotated Solana program audits, or DeFi yield curve simulations. These aren't static files; they're dynamic assets accruing royalties, much like perpetual bonds in a rising rate environment. Buyers fine-tune Mistral or Llama variants via QLoRA, deploying models that parse smart contract vulnerabilities with surgical precision. Sellers, often domain experts, capture value long after the initial upload, hedging against AI commoditization.
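What a record in such a pack might look like is easier to show than to describe. The sketch assumes a simple instruction/input/output JSONL schema. That layout is a common convention for instruction tuning, not a documented FineTuneMarket.com format. The example is a hypothetical smart contract audit: a Solidity `withdraw` that sends funds before updating the balance, the classic reentrancy pattern. Each record is flattened into one training string with an Alpaca-style prompt template.

```python
import json

# Hypothetical record schema; real dataset packs may use different field names.
records = [
    {
        "instruction": "Identify the vulnerability in this Solidity function.",
        "input": 'function withdraw(uint amt) public { (bool ok, ) = msg.sender.call{value: amt}(""); require(ok); balances[msg.sender] -= amt; }',
        "output": "Reentrancy: the external call happens before the balance is updated.",
    },
]


def to_prompt(rec: dict) -> str:
    """Flatten one record into a single training string (Alpaca-style layout)."""
    return (
        f"### Instruction:\n{rec['instruction']}\n\n"
        f"### Input:\n{rec['input']}\n\n"
        f"### Response:\n{rec['output']}"
    )


jsonl = "\n".join(json.dumps(r) for r in records)  # one JSON object per line
print(to_prompt(records[0]))
```

JSONL keeps each example independent, so packs can be streamed, sharded, or hashed line by line without loading the whole file.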

Case Studies: FinLoRA and Beyond in Production

FinLoRA's benchmarks provide a blueprint. Across 19 datasets, including the XBRL-derived sets built from 150 SEC filings, LoRA methods delivered average lifts of 36% on metrics such as ROUGE for summarization and F1 for classification. LowRA's sub-2-bit approach cut memory by up to 50%, bringing fine-tuning within reach of laptops, a game-changer for indie developers building Web3 agents. One practitioner fine-tuned a QLoRA model on custom data for NFT rarity scoring, reaching 92% accuracy versus a 68% baseline. Production examples abound: Union.ai demos serve LoRA-tuned LLMs in apps that analyze real-time chain data, while Kaggle notebooks adapt Mistral for oracle predictions.
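The figures above mix two kinds of improvement, so it helps to compute them explicitly. The 92% vs. 68% NFT result is a 24-point absolute gain but roughly a 35% relative lift. When reading headline percentages, it is worth checking which one is meant. The sketch also shows how the F1 score used for classification tasks is computed from confusion counts. Function names are illustrative.

```python
def f1_score(tp: int, fp: int, fn: int) -> float:
    """Harmonic mean of precision and recall from raw confusion counts."""
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def relative_lift(new: float, base: float) -> float:
    """Improvement expressed as a fraction of the baseline score."""
    return (new - base) / base


absolute_gain = 0.92 - 0.68                 # 24 percentage points
relative_gain = relative_lift(0.92, 0.68)   # about 0.353, i.e. roughly 35% relative
print(f"absolute: {absolute_gain:.2f}, relative: {relative_gain:.1%}")
```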

This isn't hype; it's strategic positioning. As inflation erodes generic compute, premium inputs preserve an edge. Platforms anchor dataset hashes onchain, so any tampering after upload is detectable, a safeguard against the poisoned datasets that plague open repositories. FineTuneMarket's LoRA packs streamline integration with PEFT libraries, offering pre-tokenized formats that cut prep time by 70%.
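The integrity check can be sketched with a plain SHA-256 digest. This assumes a flow in which the platform records the dataset's hash (for example, onchain) at upload, and buyers recompute it after download. Changing any byte produces a different digest. A matching hash proves the file is unchanged since registration, not that it was clean when registered, so curation still matters. The sample bytes are hypothetical.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Hex SHA-256 digest, the kind of fingerprint a platform could anchor onchain."""
    return hashlib.sha256(data).hexdigest()


def verify_dataset(data: bytes, recorded_digest: str) -> bool:
    """True only if the bytes are identical to what was hashed at registration time."""
    return sha256_hex(data) == recorded_digest.strip().lower()


original = b'{"instruction": "Flag reentrancy", "output": "yes"}\n'
digest = sha256_hex(original)  # recorded at upload (hypothetical flow)
tampered = original.replace(b"yes", b"no")
print(verify_dataset(original, digest), verify_dataset(tampered, digest))
```

For multi-gigabyte packs, the same digest can be computed incrementally by feeding chunks to `hashlib.sha256().update()` instead of reading the whole file into memory.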

Ethereum Technical Analysis Chart

Analysis by Lisa Thompson | Symbol: BINANCE:ETHUSDT | Interval: 1W | Drawings: 6

Technical Analysis Summary

The chart shows a primary uptrend line from the January 2026 low (around 2026-01-15, at $2,500) to the recent high (2026-02-05, at $4,500), with strong horizontal support at $2,800 and strong resistance at $4,500. A consolidation range spans 2026-01-20 to 2026-02-10 between $3,000 and $3,800. Callouts mark the volume spike on 2026-02-01 and the MACD bullish crossover. The long entry zone is $3,200, with a stop below $2,800. Conservative style: focus on macro support levels and avoid aggressive targets.

Risk Assessment: low

Analysis: Conservative setup with strong supports, macro tailwinds from inflation hedge narrative and tech convergence; low volatility in consolidation favors patience

Lisa Thompson's Recommendation: Hold or enter longs conservatively; long-term vision on ETH as commodity proxy outweighs noise

Key Support & Resistance Levels

📈 Support Levels:

- $2,800 - Strong support from prior lows and the macro floor; aligns with the commodity cycle bottom (strength: strong)
- $3,200 - Moderate support from the recent consolidation (strength: moderate)

📉 Resistance Levels:

- $4,500 - Key resistance from recent highs; watch for a macro breakout (strength: strong)
- $5,000 - Psychological and historical-high resistance (strength: moderate)

Trading Zones (low risk tolerance)

🎯 Entry Zones:

- $3,200 - Bounce from moderate support in the uptrend; low-risk long aligned with the macro hedge thesis (low risk)
- $2,800 - Retest of strong support; conservative entry for a cycle low (low risk)

🚪 Exit Zones:

- $4,500 - Conservative take-profit at resistance (💰 profit target)
- $2,600 - Stop loss below strong support to protect capital (🛡️ stop loss)
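As a worked example of these trading zones, here is the reward-to-risk arithmetic for a long position, using the $4,500 profit target and $2,600 stop listed above. From the $3,200 entry the ratio is about 2.17 (risk $600, reward $1,300). From the $2,800 entry it is 8.5 (risk $200, reward $1,700). This is plain arithmetic on the stated levels, not a forecast.

```python
def risk_reward(entry: float, stop: float, target: float) -> float:
    """Reward-to-risk ratio for a long position: (target - entry) / (entry - stop)."""
    risk = entry - stop
    if risk <= 0:
        raise ValueError("stop must be below entry for a long position")
    return (target - entry) / risk


for entry in (3200, 2800):
    print(entry, round(risk_reward(entry, stop=2600, target=4500), 2))
```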

Technical Indicators Analysis

📊 Volume Analysis:

Pattern: increasing on up candles

Volume confirmation on recent upmove, signaling accumulation amid blockchain news

📈 MACD Analysis:

Signal: bullish crossover

MACD turning bullish, supports long-term uptrend resumption
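The MACD signal referenced above can be reproduced with a short sketch. Standard MACD takes the difference between 12- and 26-period EMAs of closing prices and uses a 9-period EMA of that difference as the signal line. A bullish crossover is the bar where MACD moves from at or below the signal line to above it. This version seeds each EMA with the first value; charting platforms may seed differently, so early values can differ. The price series is synthetic, not ETH data.

```python
def ema(values, span):
    """Exponential moving average with alpha = 2 / (span + 1), seeded with the first value."""
    alpha = 2 / (span + 1)
    out = [values[0]]
    for v in values[1:]:
        out.append(alpha * v + (1 - alpha) * out[-1])
    return out


def macd(closes, fast=12, slow=26, signal=9):
    """Return (MACD line, signal line) for a list of closing prices."""
    macd_line = [f - s for f, s in zip(ema(closes, fast), ema(closes, slow))]
    return macd_line, ema(macd_line, signal)


def bullish_crossovers(macd_line, signal_line):
    """Indices where MACD moves from at/below the signal line to above it."""
    return [
        i
        for i in range(1, len(macd_line))
        if macd_line[i - 1] <= signal_line[i - 1] and macd_line[i] > signal_line[i]
    ]


# Synthetic prices: 30 bars drifting down, then 30 bars climbing (not real ETH data).
closes = [100 - i for i in range(30)] + [70 + 3 * i for i in range(30)]
signals = bullish_crossovers(*macd(closes))
print(signals)
```

During the steady decline MACD keeps falling and stays below its lagging signal line. The crossover only appears after prices turn up, which is the pattern the analysis above calls bullish.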


Disclaimer: This technical analysis by Lisa Thompson is for educational purposes only and should not be considered as financial advice. Trading involves risk, and you should always do your own research before making investment decisions. Past performance does not guarantee future results. The analysis reflects the author's personal methodology and risk tolerance (low).

Strategic Sourcing: Building Your Dataset Portfolio

Approach dataset acquisition like portfolio construction: diversify across domains while prioritizing depth. Start with LoRA fine-tuning datasets for broad coverage, then layer in QLoRA-ready datasets for edge cases. Evaluate candidates via provenance logs and benchmark scores; shun unverified scrapes prone to drift. Onchain marketplaces excel here, with instant stablecoin settlements and smart contract royalties that automate payouts. A developer stacking premium LLM datasets for multi-chain analysis might allocate 40% to transaction flows, 30% to governance tokens, and 30% to risk models, mirroring my fixed income tilts toward inflation-linked securities.

Hyperparameter wisdom from Raschka's guides reinforces this: r=256 with alpha=128 for intricate blockchain parsing, dropping to r=64 for speed on consumer GPUs. QLoRA's quantization holds up, per Lightning AI tests, trading negligible fidelity for accessibility. The payoff? Models that anticipate flash crashes or validate cross-chain bridges, turning data into alpha.
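Why a lower rank is faster is visible in the trainable-parameter count, which grows linearly with r. Each adapted d_out×d_in matrix adds r·(d_out + d_in) parameters. The sketch assumes a hypothetical 32-layer model with four 4096×4096 attention projections adapted per layer. Many real models use smaller key/value projections, so actual counts will differ. Under these assumptions, r=64 gives about 67M trainable parameters and r=256 about 268M.

```python
def adapter_params(r: int, layers: int, shapes) -> int:
    """Trainable LoRA parameters: r * (d_out + d_in) per adapted matrix, summed over layers."""
    return layers * sum(r * (d_out + d_in) for d_out, d_in in shapes)


# Hypothetical model: 32 layers, four 4096x4096 attention projections adapted per layer.
LAYERS = 32
SHAPES = [(4096, 4096)] * 4

for r in (64, 256):
    print(f"r={r}: {adapter_params(r, LAYERS, SHAPES):,} trainable parameters")
```

Quadrupling the rank quadruples the adapter's parameters, gradients, and optimizer state, which is where the speed difference on consumer GPUs comes from.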

Looking ahead, as LLMs scale to trillions of parameters, parameter-efficient methods cement their reign. Blockchain marketplaces will evolve into full fine-tuning hubs, with composable adapters traded like options. Investors take note: back the creators producing these assets early, as perpetual royalties compound amid macro uncertainty. Platforms like FineTuneMarket.com aren't just marketplaces; they're the infrastructure for AI's next commodity supercycle, rewarding those who bet on quality over quantity.
