In the tactical precision of LLM fine-tuning, dataset volume acts as your position sizing - too small, and volatility wipes you out. Industry benchmarks consistently flag 1000 and high-quality dataset examples as the minimum threshold for reliable performance gains, particularly on onchain marketplaces like FineTuneMarket. com. Here, creators monetize premium datasets via blockchain royalties, turning data into perpetual leverage without the drag of intermediaries.

Dataset Scarcity: The Overlooked Risk in LLM Optimization

Lean datasets tempt with speed but deliver fragility. Sources like Dialzara underscore the pitfalls: models trained on fewer than 1000 examples per task suffer overfitting, brittle generalization, and inflated parameter hallucinations. ArXiv guides echo this, advocating at least 1000 samples to manage scale while curbing noise. Even Meta's fine-tuning playbook, while nodding to 50-100 examples for tweaks, concedes deeper adaptation demands volume. On onchain AI datasets, where transactions demand auditability, low-volume sets amplify risks - think poisoned inputs exploiting smart contract blind spots.

This isn't theory; GitHub's mlabonne/llm-datasets repo catalogs code-heavy collections, proving diverse examples sharpen code generation without syntax drift. Sapien's strategies for small sets? Mere band-aids - PEFT tricks and distillation buy time, but can't substitute raw high quality datasets for fine-tuning.

Top 6 Onchain LLM Dataset Platforms

- DataXID: Blockchain-based synthetic data platform for privacy-compliant, domain-specific LLM fine-tuning datasets. Key features: Ensures accuracy and compliance; supports onchain data generation. Visit

- OpenDataBay: AI and LLM data marketplace for buying, selling, or exchanging fine-tuning datasets. Key features: Categories include financial and consumer data; onchain exchange capabilities. Visit

- Amazon Bedrock: Cloud platform generating synthetic data to address scarcity in LLM fine-tuning. Key features: Context-based Q&A datasets; integrates with onchain workflows. Visit

- Xenoss: Enterprise-grade platform for LLM fine-tuning, sourcing high-quality datasets. Key features: Mitigates forgetting and drift; production-ready data handling. Visit

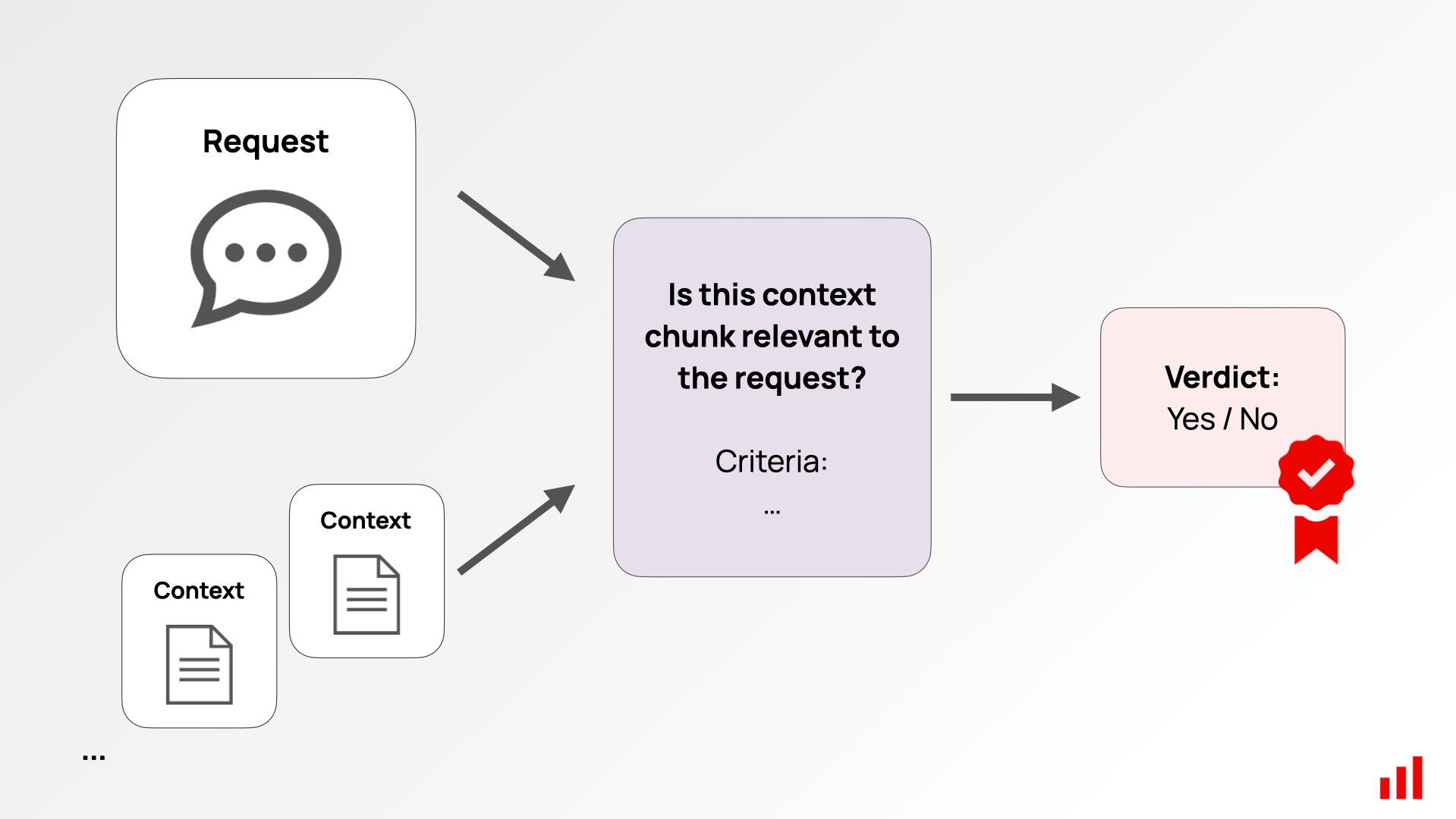

- Refine-n-Judge: Iterative LLM-driven framework to refine and judge datasets for premium quality. Key features: Automated enhancement; suitable for onchain dataset curation. Read

- PIXIU: Comprehensive financial LLM framework with instruction datasets and benchmarks. Key features: Domain-specific financial data; supports fine-tuning for onchain apps. Read

Emerging Onchain Ecosystems Fueling Dataset Abundance

2026's landscape shifts decisively toward blockchain-native solutions, resolving data silos with tokenized access. FineTuneMarket. com leads by streamlining discovery of premium datasets LLM specialists crave, from computer vision to instruction-tuned text. DataXID's synthetic generators ensure privacy-compliant volumes for domain-specific tuning; OpenDataBay trades financial datasets ripe for PIXIU-style financial LLMs. Amazon Bedrock's synthetic Q and A data bridges scarcity, while Refine-n-Judge automates quality iteration via LLM self-critique - churning 1000 and refined pairs from raw inputs.

FlowerTune's federated benchmarks reveal cross-domain needs, demanding 1000 and examples to adapt models sans centralization risks. Xenoss tackles enterprise hurdles like forgetting and drift, provisioning GPU-optimized volumes. DigitalOcean's curation playbook stresses authoritative sourcing via Hugging Face, aligning perfectly with onchain provenance tracking. These tools collectively arm traders of intelligence - you - with the scale to fine-tune large language models examples needed for production edges.

Quality Over Quantity: Tactical Vetting for Blockchain Marketplaces

Volume alone falters without rigor. ODSC's 10-dataset spotlight - from sentiment corpora to translation pairs - illustrates variance: general-purpose sets like those in LocalLLaMA repos excel in breadth, but blockchain marketplace datasets prioritize verifiability. DataCamp examples show translation tasks blooming post-1000 examples, sentiment stabilizing against adversarial noise. Holden Karau's multi-source synthesis via embeddings boosts diversity, countering homogeneity traps. AWS warns of preprocessing pitfalls; unclean data cascades into model bias, nullifying onchain immutability perks.

Enter the vetting playbook: score datasets on diversity, recency, and domain fidelity before committing tokens. Onchain ledgers like FineTuneMarket. com etch provenance into the blockchain, letting you audit lineage without trust assumptions. This tactical edge separates alpha-generating fine-tunes from noise trades.

Benchmarking Thresholds: 1000 and Examples as the Inflection Point

Empirical edges emerge post-1000: Dialzara's analysis pins minimum thresholds there for task robustness, where parameter counts align without catastrophic overfitting. ArXiv's ultimate guide reinforces this for scalable processing, noting smaller sets fracture under volatility - akin to naked options in a gamma squeeze. OpenAI's lighter touch works for nudges, but LLM fine-tuning dataset size scales rewards exponentially beyond four figures. Code repos like mlabonne's prove it; Python-heavy collections yield syntax-coherent outputs only after volume saturation.

Comparison of LLM Fine-Tuning Outcomes by Dataset Size

| Dataset Size (Examples) | Overfitting Risk | Generalization Score | Onchain Suitability |

|---|---|---|---|

| 100 | High | Poor (20-40%) | Not Recommended ❌ |

| 500 | Medium-High | Fair (40-60%) | Marginal ⚠️ |

| 1000 | Medium | Good (60-80%) | Recommended ✅ |

| 5000 | Low | Excellent (80-95%) | Optimal 🚀 |

Synthetic boosters like DataXID and Bedrock accelerate to these levels, generating compliant volumes for financial or Q and A niches. Refine-n-Judge iterates ruthlessly, distilling gold from base metals via self-judgment loops. FlowerTune's federated tests demand this scale for domain hops, exposing weak links in sparse regimes.

Tactical Deployment on Blockchain Marketplaces

Picture this: you scout premium datasets LLM on FineTuneMarket. com, filter for 1000 and verified examples, and deploy via onchain purchase. Royalties flow perpetually as your tuned model trades intelligence in DeFi protocols or NFT analytics. OpenDataBay's financial troves pair with PIXIU benchmarks, fueling LLMs that parse SEC filings with street-level acuity. Xenoss mitigates drift in live deployments, ensuring your edge persists through market regimes.

DigitalOcean's sourcing rituals - blending Hugging Face with custom scrapes - mesh seamlessly here, provenance tokenized for resale. Holden Karau's embedding fusions create hybrid sets, amplifying fine-tune large language models examples needed without redundancy bloat. The result? Models that don't just parrot; they anticipate, adapting to onchain flux like volatility surfaces in a straddle setup.

Onchain Dataset Checklist

- Diversity: Confirm varied examples across tasks, domains, and formats, as in mlabonne/llm-datasets code sets spanning languages.

- Size Threshold: Target 1,000+ examples per task minimum, per arXiv guides and Dialzara insights, avoiding small-dataset pitfalls.

- Provenance: Trace origins via blockchain or platforms like Hugging Face, ensuring authenticity as in DigitalOcean curation best practices.

- Royalty Structure: Evaluate smart contract royalties on platforms like OpenDataBay for sustainable creator incentives.

- Domain Fit: Align with onchain needs (e.g., DeFi via PIXIU datasets), matching marketplace applications like financial Q&A.

Skeptics cling to small-set hacks, but markets punish fragility. Sapien's PEFT playbook concedes: it's scaffolding, not structure. YouTube syntheses and DataCamp demos converge on the 1000 and sweet spot for translation fidelity and sentiment nuance. AWS's prep manifesto hammers cleanliness; one tainted batch erodes blockchain's purity pledge.

Onchain marketplaces rewrite the game, commoditizing abundance. FineTuneMarket. com's ecosystem - with its instant settlements and royalty rails - positions dataset creators as options writers, collecting premium on every downstream fine-tune. Traders of tomorrow source here, stacking 1000 and slabs of premium granite to sculpt LLMs that dominate onchain AI datasets arenas. The leverage is real, the risks hedged, the upside uncapped.

No comments yet. Be the first to share your thoughts!