In the cutthroat arena of LLM development, supervised fine-tuning datasets make or break your model. Nail 1000+ high-quality examples, and you're not just tweaking; you're forging a beast that rivals giants. The LIMA paper made the case: a 65B LLaMA fine-tuned on a mere 1,000 curated samples held its own against far more heavily tuned models in human evaluations. Now, imagine scaling that firepower with onchain dataset marketplaces - decentralized hubs pumping out premium data with blockchain royalties baked in. Speed is everything; grab these assets instantly, pay onchain, and watch your LLM scalp precision like a pro trader riding pips.

Top 7 Onchain Data Marketplaces

- Dune: 1.5M+ datasets across 100+ blockchains for AI training. Tools for analysts & devs. dune.com

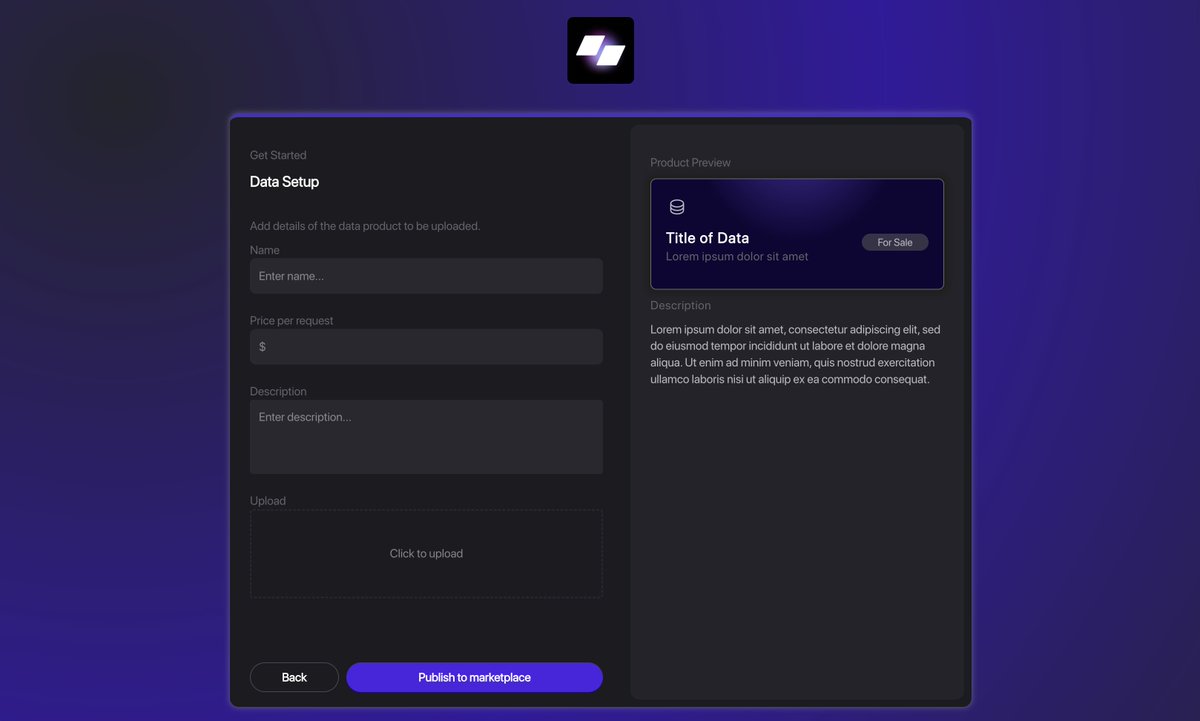

- Syncport Data Marketplace: Monetize data as onchain assets. Set pricing, access rules for AI datasets. syncport.io

- Aidataset: Buy/sell datasets, models via crypto. Seamless for AI agents. aidataset.io

- Data Context Market (DCM): Decentralized storage & monetization. x402 micropayments for AI agents. ethglobal.com

- DataLink by Chainlink: Institutional onchain data publishing. Access control & instant distribution. chain.link

- OORT DataHub: Decentralized high-quality AI datasets. Retain ownership, ethical development. oortech.com

- OpenDataBay: Free/premium datasets for AI/ML. AI-powered aggregation engine. opendatabay.com

These platforms flip the script on data hunts. No more scraping Kaggle scraps or Reddit repos. Dune blasts 1.5 million datasets across 100+ blockchains, perfect for crypto-savvy LLMs. Syncport lets creators tokenize datasets as onchain assets, setting prices and rules while buyers snag AI fuel for training. Aidataset mixes datasets, models, and crypto payments for seamless agent access.

Onchain Marketplaces Fueling the Dataset Boom

DCM bridges data creators to AI agents via x402 micropayments, storing and monetizing without middlemen. DataLink by Chainlink delivers institutional-grade feeds with access controls, integrating directly into dApps. OORT DataHub keeps data pristine and contributor-owned, ideal for ethical fine-tuning. OpenDataBay's AI engine matches free or premium datasets to your needs, from synthetic to raw.

Key Onchain Marketplaces for Sourcing LLM Fine-Tuning Datasets

| Platform | Datasets Available | Unique Features | Best For |
|---|---|---|---|
| Dune | Over 1.5M datasets | Multi-chain (100+ blockchains), tools for analysts, AI systems, developers | AI analysts, developers, onchain data exploration |
| Syncport Data Marketplace | High-quality datasets | Monetize data as onchain assets, decentralized exchange, custom pricing and access rules | Data providers and buyers for AI training and analytics |
| Aidataset | Datasets, models, files | Crypto transactions, seamless access for AI agents | AI agents buying/selling data |
| Data Context Market (DCM) | Datasets | Decentralized storage/monetization, autonomous transactions via x402 micropayments | AI agents and data creators |
| DataLink by Chainlink | Onchain data feeds | Institutional-grade, access control, instant distribution, dApp integration | Decentralized applications and platforms |
| OORT DataHub | High-quality AI datasets | Decentralized/transparent, data integrity, ethical AI, retain ownership | AI training with ethical data sourcing |
| OpenDataBay | Free, premium, synthetic, open, raw datasets | AI-powered data aggregation and matching engine | AI/ML projects needing diverse datasets |

This ecosystem thrives because it aligns incentives. Creators earn perpetual royalties every time their data trains an LLM - pure blockchain magic. Developers get premium AI fine-tuning data without quality roulette. Think high-frequency trading: low-latency access to order flow there translates to low-latency model upgrades here.

High-Impact Datasets Ready for SFT Deployment

Dive into proven corpora. The Pile stacks 886 GB of diverse English text from 22 sub-datasets, a cornerstone for broad LLM training. OpenCodeInstruct delivers 5 million code samples with questions, solutions, tests, and feedback - enough to turbocharge code generation. The TaP Framework auto-generates preference data across languages, ensuring diverse coverage.

Don't sleep on niche gems. Kaggle's Crypto and Blockchain dataset sharpens LLMs for onchain lingo. Reddit's r/LocalLLaMA community curates SFT-focused lists. Databricks drops 27,000 ecommerce QA pairs for conversational prowess. GitHub's mlabonne/llm-datasets packs code examples across languages. ODSC spotlights 10 versatile sets for unique model boosts.

Top Onchain Marketplaces for LLM Fine-Tuning Datasets

| Marketplace | Total Datasets Available | Creator Monetization | Key Features |
|---|---|---|---|
| Dune | 1.5M+ | N/A | 🪐 100+ blockchains 🔧 Tools for AI & devs 📊 Onchain analytics |
| Syncport Data Marketplace | N/A | Custom pricing 💰 | 📈 Monetize as onchain assets 🔄 Decentralized exchange 🔑 Define access rules |
| Aidataset | N/A | Crypto transactions ₿ | 🤖 Buy/sell datasets & models 🧠 Seamless for AI agents 💳 Crypto payments |
| Data Context Market (DCM) | N/A | x402 micropayments ⚡ | 🗄️ Decentralized storage 🤝 Autonomous transactions 🔗 For AI systems |
| DataLink by Chainlink | N/A | N/A | 🏦 Institutional-grade 🔒 Access control ⚡ Instant distribution & integration |
| OORT DataHub | N/A | Retain ownership 👑 | 🎯 High-quality AI datasets ☯️ Ethical & transparent 🔒 Data integrity |
| OpenDataBay | N/A | N/A | 📥 Free, premium & synthetic 🧬 AI-powered matching 🔍 Data aggregation |

Shaip tailors domain-specific packs, and NVIDIA's NeMo Curator pipelines custom SFT data. Even arXiv papers show fine-tuned small models outpacing SOTA in vulnerability detection. Stack these with onchain sources, and you're assembling 1000+ LLM fine-tune examples that punch above their weight. Quality trumps quantity; one vetted dataset marketplace haul beats endless web crawls.

Why Onchain Edges Out Traditional Sources

Traditional spots like Hugging Face or Kaggle lag in monetization and freshness. Onchain flips that: immutable provenance, instant micropayments, global liquidity. Rain Infotech notes that 1,000 quality examples suffice when augmented smartly. Sebastian Raschka of Ahead of AI echoes LIMA's efficiency. Blockchain royalties on datasets ensure creators keep earning, fueling more supply. Your LLM gets battle-tested data, provenance verified on-ledger. Scalpers know: precision data flow wins wars.

Provenance isn't fluff; it's your edge in the data pip wars. One bad sample poisons the batch, tanking alignment. Onchain logs every access, every resale - no fakes slip through. Pair that with Shaip's domain packs or NVIDIA's curation pipelines, and you're stacking supervised fine-tuning datasets that deliver surgical precision.
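One way to make that provenance check concrete is to fingerprint your local copy and compare it with whatever hash the listing published. The sketch below is a minimal illustration, not any marketplace's actual API: the function names and the idea that a listing exposes a SHA-256 digest are assumptions.

```python
import hashlib
import json


def dataset_fingerprint(records):
    """Return a SHA-256 hex digest over records in a canonical, order-independent form."""
    # Sorted keys make the digest independent of dict key order;
    # sorting the serialized lines makes it independent of record order.
    lines = sorted(json.dumps(r, sort_keys=True, ensure_ascii=False) for r in records)
    digest = hashlib.sha256()
    for line in lines:
        digest.update(line.encode("utf-8"))
        digest.update(b"\n")
    return digest.hexdigest()


def matches_listing(records, recorded_hash):
    """True if the local copy hashes to the digest recorded with the listing (assumed field)."""
    return dataset_fingerprint(records) == recorded_hash.lower()
```

If a single response is altered after purchase, the fingerprint changes and `matches_listing` returns `False`, so the bad copy never reaches your training run.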

Curating Your 1000+ LLM Fine-Tune Arsenal

Hit the ground running. Start with Dune's multi-chain firehose for blockchain-native examples, then layer OpenDataBay's synthetic boosters. Need code? OpenCodeInstruct's 5 million samples with feedback loops crush it. Ecommerce chats? Databricks' 27k QA pairs build conversational muscle. Mix Kaggle's crypto sets with Reddit's curated SFT lists to hit that 1000+ threshold fast.
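Mixing sources toward a target of roughly 1,000 examples can be sketched as weighted sampling. This is an illustrative sketch only: `mix_sources`, the source names, and the weights are hypothetical, and it assumes you have already downloaded each source into a Python list.

```python
import random


def mix_sources(sources, weights, target=1000, seed=0):
    """Sample about `target` examples across sources in proportion to `weights`.

    sources: dict mapping a source name to a list of examples.
    weights: dict mapping the same names to relative weights (integers work well).
    """
    rng = random.Random(seed)  # fixed seed keeps the mix reproducible
    total = sum(weights.values())
    mixed = []
    for name, examples in sources.items():
        quota = round(target * weights[name] / total)
        # A source smaller than its quota contributes everything it has.
        take = min(quota, len(examples))
        mixed.extend((name, ex) for ex in rng.sample(examples, take))
    rng.shuffle(mixed)
    return mixed
```

Each item comes back tagged with its source name, which makes it easy to see when a small source fell short of its quota and the mix needs topping up from elsewhere.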

Audit quality like a scalper screens order flow: dedupe, filter noise, balance domains. Rain Infotech's augmentation tricks stretch 1,000 core examples to 10k without dilution. TaP's taxonomy ensures preference diversity across tongues. GitHub repos like mlabonne/llm-datasets feed code hunger. ODSC's top 10? Versatile wildcards for niche punches. Sebastian Raschka nails it - efficiency rules; bloated pretraining bows to smart SFT.
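The dedupe, filter, balance pass can be sketched in a few lines. Treat this as a minimal sketch under stated assumptions: the record schema (`prompt`, `response`, `domain`), the `min_chars` threshold, and the exact-match dedupe are all illustrative choices, and near-duplicate detection is left out.

```python
import hashlib
import random
from collections import defaultdict


def normalize(text):
    """Lowercase and collapse whitespace so trivial variants hash identically."""
    return " ".join(text.lower().split())


def audit(examples, min_chars=20, max_per_domain=None, seed=0):
    """Dedupe, filter, and balance a list of {"prompt", "response", "domain"} dicts."""
    seen = set()
    by_domain = defaultdict(list)
    for ex in examples:
        prompt, response = normalize(ex["prompt"]), normalize(ex["response"])
        # Filter noise: drop empty prompts and very short responses.
        if not prompt or len(response) < min_chars:
            continue
        # Dedupe: exact match on the normalized prompt/response pair.
        key = hashlib.sha256(f"{prompt}\x00{response}".encode("utf-8")).hexdigest()
        if key in seen:
            continue
        seen.add(key)
        by_domain[ex["domain"]].append(ex)
    if not by_domain:
        return []
    # Balance: cap every domain at the smallest domain's size unless a cap is given.
    cap = max_per_domain or min(len(items) for items in by_domain.values())
    rng = random.Random(seed)
    kept = []
    for domain in sorted(by_domain):
        items = by_domain[domain]
        kept.extend(rng.sample(items, min(cap, len(items))))
    return kept
```

Capping at the smallest domain is the bluntest balancing rule available; pass `max_per_domain` when you would rather keep more data and accept some skew.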

Comparison of High-Impact SFT Datasets

| Dataset | Size/Examples | Focus Area | Best Use Case |
|---|---|---|---|
| The Pile | 886 GB | Diverse text (22 sub-datasets) | Broad LLM base training and SFT |
| OpenCodeInstruct | 5 million samples | Code instructions with feedback | Fine-tuning code generation LLMs |
| Retail Ecommerce QA Pairs | 27,000 entries | Customer service QA (diverse categories) | Conversational fine-tuning for retail AI |
| LIMA | 1,000 high-quality examples | Instruction-response pairs | Efficient SFT emphasizing quality over quantity |
| Dune Onchain Datasets | 1.5 million datasets | Onchain data across 100+ blockchains | Sourcing blockchain data for crypto LLM fine-tuning |

arXiv experiments back the point: fine-tuned compact models smoke bloated SOTA in vuln hunting. Your stack? Onchain hauls plus these gems yield 1000+ LLM fine-tune examples that adapt like lightning.

Monetization Magic: Royalties Supercharge Supply

Here's the killer hook - blockchain royalties on datasets create a self-feeding loop. Creators drop once, earn forever on resales and retrains. Syncport tokenizes, Aidataset pays in crypto, DCM keeps micropayments flowing. OORT's ownership model draws ethical goldmines. DataLink's feeds hit dApps at scale. Result? A flood of fresh, premium drops. No more stale Kaggle forks; this is live order book depth for data traders.
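The royalty arithmetic is simple enough to sketch. The numbers here are purely illustrative: the 5% rate (500 basis points) and the sale prices are assumptions, not figures from any of the marketplaces above, and amounts are integers in the token's smallest unit to avoid floating-point drift.

```python
def split_payment(price_units, royalty_bps):
    """Split a payment (in smallest token units) into (creator royalty, remainder)."""
    if not 0 <= royalty_bps <= 10_000:
        raise ValueError("royalty_bps must be between 0 and 10000")
    royalty = price_units * royalty_bps // 10_000  # integer math, rounds down
    return royalty, price_units - royalty


def cumulative_royalties(sale_prices, royalty_bps):
    """Total creator earnings across a sequence of resales at a fixed royalty rate."""
    return sum(split_payment(price, royalty_bps)[0] for price in sale_prices)


# Hypothetical example: three resales at an assumed 5% royalty.
resales = [1_000_000, 2_000_000, 500_000]
total = cumulative_royalties(resales, royalty_bps=500)
```

Because the cut is taken on every resale rather than only the first sale, the creator's total keeps growing for as long as the dataset keeps trading.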

Scalpers thrive on flow; LLM devs will too. Instant buys mean your model iterates hourly, not monthly. Forget permissioned silos - global marketplaces pool datasets the way liquidity pools pool capital. FineTuneMarket.com exemplifies this: onchain payments, perpetual cuts, specialized packs for vision and beyond. Your edge? Dive in, source ruthlessly, fine-tune relentlessly.

Deploy now. Grab Dune's 1.5M sprawl, spike with OpenCodeInstruct, audit via NeMo. Your LLM emerges not tuned, but tempered - ready to dominate domains from code to crypto. In this arena, data speed kills. Load up, execute, win.
