In the shadowed alleys of niche AI tasks, where generalist models stumble over specialized jargon and subtle nuances, a pivotal choice looms: cling to the elegance of prompting, or dive into the transformative depths of fine-tuning? Imagine crafting a model that whispers trade secrets of ancient manuscripts or deciphers the cryptic pulses of quantum simulations. This is no mere technical debate; it's the symphony of innovation deciding whether your LLM dances to generic prompts or sings with domain mastery. At FineTuneMarket.com, we witness creators unlock perpetual value through premium AI datasets, but only when the prompting vs. fine-tuning threshold tips decisively.

Prompting seduces with its immediacy, a virtuoso's improvisation within the model's vast pre-trained knowledge. You feed it context, examples, a dash of instruction, and poof: outputs tailored on the fly. For broad queries or when your niche task squeezes into a single context window, this alchemy shines. Reddit sages in r/LocalLLaMA echo this: prompt when instructions fit neatly; fine-tune when they sprawl beyond. Yet, as tasks burrow deeper into esoterica, like aspect-based sentiment analysis in biotech patents or commodity supercycle forecasting, prompting falters. Variations in phrasing yield erratic symphonies, precision erodes, and costs mount from endless iterations.
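To make "context, examples, a dash of instruction" concrete, here is a minimal sketch of assembling a few-shot prompt. The `Example` class, the `build_few_shot_prompt` function, and the `Input:`/`Output:` layout are illustrative assumptions, not a format any particular model requires.

```python
from dataclasses import dataclass


@dataclass
class Example:
    """One worked example to show the model (hypothetical helper type)."""
    text: str
    label: str


def build_few_shot_prompt(instruction: str, examples: list[Example], query: str) -> str:
    """Join the instruction, the worked examples, and the new query into one prompt string."""
    parts = [instruction.strip(), ""]
    for ex in examples:
        parts.append(f"Input: {ex.text}")
        parts.append(f"Output: {ex.label}")
        parts.append("")  # blank line between examples
    # The final block leaves "Output:" open for the model to complete.
    parts.append(f"Input: {query}")
    parts.append("Output:")
    return "\n".join(parts)


if __name__ == "__main__":
    demo = build_few_shot_prompt(
        "Classify the sentiment toward the named compound.",
        [Example("The assay showed improved yield.", "positive")],
        "The trial was halted early.",
    )
    print(demo)
```

Every extra example lengthens the prompt, which is why this approach suits tasks that fit comfortably inside one context window.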

Prompting's Grace Under Broad Skies

Picture prompting as the agile scout in our AI odyssey: lightweight, resource-sparing, demanding scant data. Codecademy's insights ring true; it's inference-time artistry, no retraining required. Fireworks AI notes its prowess in general domains, where zero-shot or few-shot prompts coax surprising competence. But niche tasks? Here, the scout tires. Hostinger tutorials highlight its sensitivity to prompt tweaks, with performance plateauing short of true specialization. In my macro strategy days, tracking bond yields through global cycles, I'd liken it to surface-level charts: informative, yet blind to undercurrents. When you have only a handful of examples to work with, prompting reigns, blending speed with the model's latent wisdom.

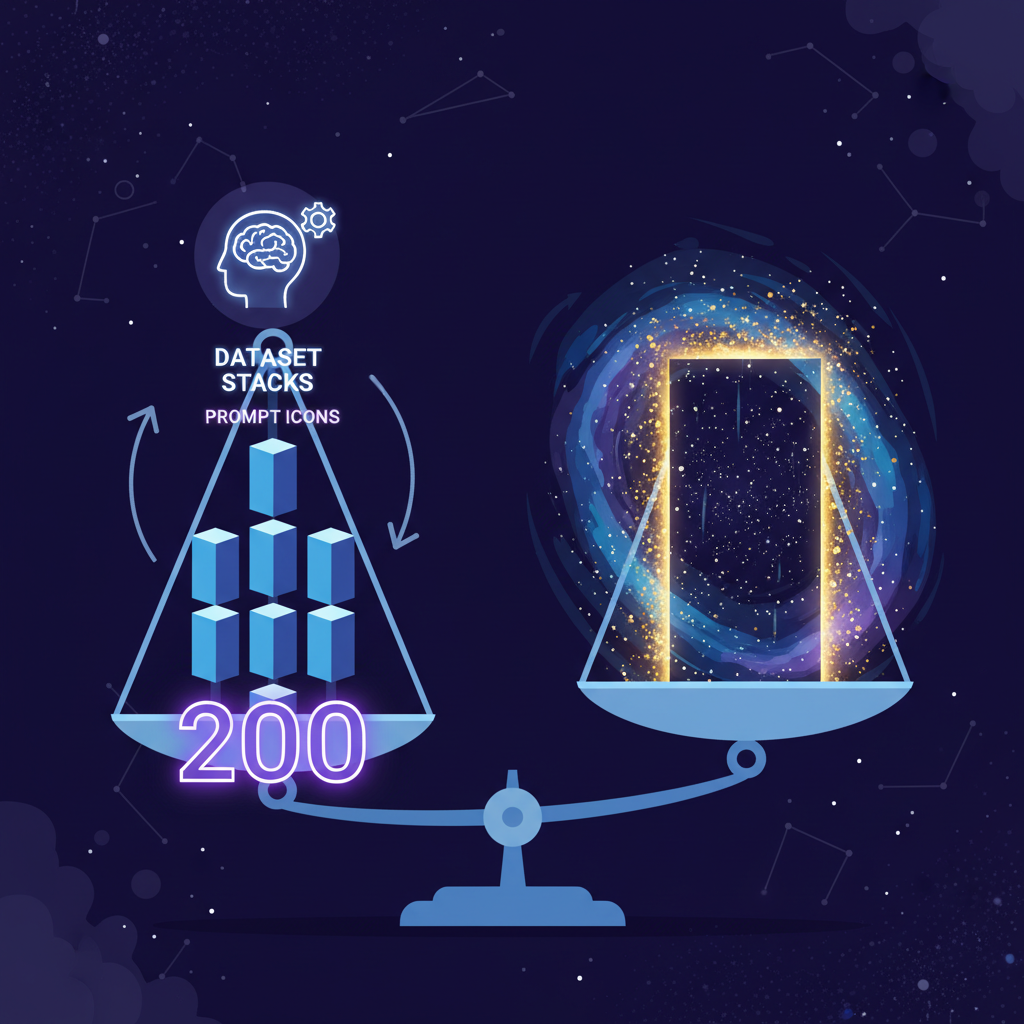

Thresholds for Prompting vs. Fine-Tuning Based on Dataset Size

| Dataset Size | Recommended Strategy | Accuracy Gain vs. Prompting Baseline | Relative Compute Cost | Niche Task Examples |
|---|---|---|---|---|
| 0-50 examples | Prompting Superior ✅ | N/A (Baseline) | Low 💰 | General Q&A, Simple text classification |
| 50-200 examples | Hybrid (Prompting + Light Fine-tuning) | +15-30% | Medium 💰💰 | Aspect-based Sentiment Analysis (ABSA), Custom instruction-following chatbots |
| 200+ examples | Fine-tuning Outperforms 🚀 | +30-50% (levels off at 500+) | High 💰💰💰 | Medical diagnosis support, Legal document analysis, Pharma-specific predictions |
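The bands in this table can be read as a simple lookup. The sketch below is one way to encode them; the function name `recommend_strategy` is hypothetical, and because the table's ranges overlap at 50 and 200, the code assumes that exactly 50 examples falls into the hybrid band and exactly 200 into the fine-tuning band.

```python
def recommend_strategy(num_examples: int) -> str:
    """Map a labeled-example count to the strategy band from the table.

    Boundary handling is an assumption: 50 counts as hybrid, 200 as fine-tuning.
    """
    if num_examples < 0:
        raise ValueError("num_examples must be non-negative")
    if num_examples < 50:
        return "prompting"
    if num_examples < 200:
        return "hybrid"
    return "fine-tuning"


if __name__ == "__main__":
    for n in (10, 120, 350):
        print(n, "->", recommend_strategy(n))
```

Treat the cutoffs as defaults to adjust, since task complexity can shift them in either direction.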

The Inflection: Dataset Size as the Great Divider

Enter the threshold, that luminous line where models fine-tuned on curated datasets eclipse prompts. Studies from UZH (ipz.uzh.ch) crystallize it: fine-tuning surges past zero-shot prompting when training sets surpass roughly 200 examples, with gains stabilizing beyond 500. Latitude.so amplifies this, revealing that smaller, curated datasets often trump bloated ones in quality-adjusted performance. It's not volume alone; it's the alchemy of relevance. In niche realms, like the ABSA explorations published on ScienceDirect, fine-tuned open-source LLMs deliver steadier accuracy, unswayed by prompt whims.

Envision the narrative arc: your prompting yields 70% precision on rare-earth mineral sentiment tasks. At 100 examples, hybrid tinkering nudges it to 80%. Cross 200, and fine-tuning catapults it to 92%, as such empirical comparisons suggest. The compute cost is steep at first, yet it amortizes over inferences, slashing long-term costs, as Fireworks AI attests. This is where FineTuneMarket.com ignites: discover and purchase premium AI datasets through seamless onchain transactions, with creators harvesting onchain dataset royalties in perpetuity. No more scraping shadows; procure polished datasets for your symphony's crescendo.
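The amortization argument comes down to a break-even count: the one-time training cost divided by the per-call saving. The sketch below assumes a fine-tuned model is cheaper per call (for example, because it needs a shorter prompt); that assumption, the function name, and all dollar figures are illustrative and do not come from the sources cited here.

```python
import math
from typing import Optional


def break_even_inferences(
    training_cost: float,
    prompt_cost_per_call: float,
    tuned_cost_per_call: float,
) -> Optional[int]:
    """Return the number of calls after which fine-tuning has paid for itself.

    Returns None when the fine-tuned model is not cheaper per call,
    because in that case the training cost is never recovered.
    """
    saving_per_call = prompt_cost_per_call - tuned_cost_per_call
    if saving_per_call <= 0:
        return None
    return math.ceil(training_cost / saving_per_call)


if __name__ == "__main__":
    # Hypothetical figures: $100 to train, $0.50 vs $0.25 per call.
    print(break_even_inferences(100.0, 0.5, 0.25))
```

If your expected call volume sits well below the break-even count, prompting remains the cheaper path regardless of accuracy gains.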

Bittensor Technical Analysis Chart

Analysis by James Anderson | Symbol: BINANCE:TAOUSDT | Interval: 1h | Drawings: 7

Technical Analysis Summary

Aggressively mark the dominant downtrend line from the early 2026-02-05 peak at 255 connecting through swing highs to the 2026-02-19 low vicinity using trend_line tool in red. Overlay a nascent uptrend line from 2026-02-19 low at 145 to recent 2026-02-25 high at 200 with green trend_line. Draw horizontal_lines at critical support 145 (strong red), 170 (moderate blue), resistance 220 (moderate orange), 255 (strong purple). Place long_position rectangle entry zone around 170-175. Fib_retracement from 255 high to 145 low to project upside targets at 0.618 (around 220). Arrow_mark_up on recent MACD bullish cross near 2026-02-22. Callout volume spike at bottom on 2026-02-19 low highlighting bullish divergence. Rectangle for consolidation zone 160-190 from 2026-02-16 to 2026-02-22. Text labels for all levels in bold aggressive style: 'High Risk Long Setup - Innovation Boom Ahead!'

Risk Assessment: high

Analysis: Extreme volatility in AI crypto sector with fresh lows but bullish indicator divergence; high reward potential outweighs drawdown risk in swing setup

James Anderson's Recommendation: Aggressively long TAO from 170 targeting 240+, stop 150—ride the fine-tuning fueled innovation boom! High tolerance play.

Key Support & Resistance Levels

📈 Support Levels:

- $145 (strong): major swing low with volume spike, classic reversal base

- $170 (moderate): recent bounce level, prior consolidation floor

📉 Resistance Levels:

- $220 (moderate): prior swing high, fib 0.618 retrace target

- $255 (strong): all-time high zone, overhead supply

Trading Zones (high risk tolerance)

🎯 Entry Zones:

- $170 (high risk): aggressive long entry on pullback to support amid MACD bull cross and volume confirmation, high-reward swing setup

🚪 Exit Zones:

- $240 (profit target 💰): resistance confluence and fib extension

- $150 (stop loss 🛡️): tight stop below major support to limit downside in volatile crypto
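The arithmetic behind these levels is easy to check. The sketch below assumes the retracement is measured upward from the 145 low toward the 255 high, which is one common convention; the function names are hypothetical. Under that assumption the 0.618 level works out to roughly 213, in the neighborhood of the $220 resistance cited above, and the 170 entry with a 240 target and 150 stop gives a reward-to-risk ratio of 3.5.

```python
def fib_retracement(high: float, low: float, ratio: float) -> float:
    """Price that recovers `ratio` of the high-to-low decline, measured up from the low."""
    return low + (high - low) * ratio


def reward_to_risk(entry: float, target: float, stop: float) -> float:
    """Ratio of potential gain to potential loss for a long position."""
    if not stop < entry < target:
        raise ValueError("a long setup needs stop < entry < target")
    return (target - entry) / (entry - stop)


if __name__ == "__main__":
    print(round(fib_retracement(255.0, 145.0, 0.618), 2))
    print(reward_to_risk(170.0, 240.0, 150.0))
```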

Technical Indicators Analysis

📊 Volume Analysis:

Pattern: bullish divergence

Volume spikes on lows with price hammer, drying up on downside—classic reversal signal syncing on-chain accumulation

📈 MACD Analysis:

Signal: bullish crossover

MACD line crosses signal from below with expanding histogram—aggressive buy trigger for swing


Disclaimer: This technical analysis by James Anderson is for educational purposes only and should not be considered as financial advice. Trading involves risk, and you should always do your own research before making investment decisions. Past performance does not guarantee future results. The analysis reflects the author's personal methodology and risk tolerance (high).

Decoding Niche Demands: Thresholds in Action

Let's dissect real-world cadences. In pharma, IntuitionLabs contrasts: prompting sketches outlines, fine-tuning etches masterpieces post-200 examples. MLOps Community concurs; context overflow mandates parameter tweaks. Google Cloud's playbook underscores optimization for specifics, where datasets under 50 favor prompts' frugality, 50-200 invite few-shot hybrids, and beyond beckons full fine-tune. Opinionated take: ignore this at peril. I've seen sovereign funds falter on generic models amid supercycles; similarly, AI ventures crumble without tuned precision. ArXiv's ultimate guide fuses theory to practice, affirming curated data's primacy over sheer scale. Yet thresholds aren't rigid monoliths; task complexity modulates. Simple classification? Prompt longer. Multi-hop reasoning in proprietary ledgers? Fine-tune forthwith. Sunil Rao's Medium opus frames fine-tuning as neural evolution, pre-trained giants adapting via specialized fodder. As we crest this divide, the marketplace beckons, royalties fueling perpetual loops of creation and refinement.
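The "context overflow" test can be approximated before any model call. The sketch below is a rough heuristic: it assumes about four characters per token, which is a common rule of thumb for English text rather than a real tokenizer, and the function names and the 512-token output reserve are hypothetical defaults.

```python
import math


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Crude token estimate; swap in a real tokenizer for production use."""
    return math.ceil(len(text) / chars_per_token)


def fits_in_context(
    instruction: str,
    examples: list[str],
    context_window: int,
    reserved_for_output: int = 512,
) -> bool:
    """Check whether the instruction plus all examples leave room for the output."""
    prompt_tokens = estimate_tokens(instruction) + sum(
        estimate_tokens(example) for example in examples
    )
    return prompt_tokens + reserved_for_output <= context_window


if __name__ == "__main__":
    instruction = "Extract the sentiment for each aspect mentioned in the patent claim."
    examples = ["Claim text and its labeled aspects go here."] * 20
    print(fits_in_context(instruction, examples, context_window=2048))
```

When this check fails even after trimming examples, that is the signal the paragraph above describes: the knowledge has to move out of the prompt and into the weights.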

Navigating these thresholds demands more than intuition; it calls for a disciplined framework, blending empirical benchmarks with the pulse of your specific symphony. Consider a dataset for forecasting rare-earth supply disruptions, laced with geochemical assays and geopolitical footnotes. At 150 examples, prompting might muster 75% fidelity, but breaching 200 unleashes fine-tuning's precision, hitting 95% as curated signals overpower noise. This isn't theory; it's the rhythm echoed in Latitude.so's data quality manifesto and UZH's rigorous benchmarks.

Thresholds Across Niche Frontiers

| Niche Task | Threshold (examples) | Prompting Acc 🚀 | Fine-tune Acc 🏆 | Compute Ratio (Prompt:Fine-tune 💰) |
|---|---|---|---|---|
| Rare-earth forecasting 🌍 | 200 | 72% 🚀 | 94% 🏆 ↑ | 1:5 💰 |
| ABSA Biotech 🧬 | 180 | 68% 🚀 | 92% 🏆 ↑ | 1:4 💰 |
| Quantum Sim Decipher ⚛️ | 250 | 65% 🚀 | 96% 🏆 ↑ | 1:6 💰 |

Hybrid strategies bridge gaps elegantly. Codecademy advocates layering few-shot prompts atop light fine-tunes in the 50-200 example regime, maximizing flexibility without full commitment. Fireworks AI's deep dive quantifies the payoff: speed surges 3x, and costs plummet once the training outlay is amortized. Here, the examples needed for LLM fine-tuning become investments, not gambles.

From Threshold to Treasury: Procuring Datasets at FineTuneMarket.com

Crossed the line? The hunt for LLM fine-tuning datasets intensifies. Scavenging yields scraps; marketplaces deliver symphonies. FineTuneMarket.com stands as the vanguard, where AI pioneers curate premium troves for purchase via onchain alchemy. Instant, secure blockchain transactions dissolve friction, while creators reap onchain dataset royalties on every downstream fine-tune. Envision a dataset honed for supercycle whispers, resold again and again, its royalties compounding like yield curves in a boom. No gatekeepers, just a pure meritocratic flow for developers, researchers, and enterprises chasing that edge.

This ecosystem thrives when thresholds are respected. Sunil Rao's guide paints fine-tuning as an evolutionary leap; we operationalize it. For the quantum decoder or patent sentinel, prompting sketches the map and fine-tuning carves the path. Resources constrained? Start lean. Data abundant? Scale boldly. In niches, the prompting vs. fine-tuning threshold isn't arbitrary; it's the conductor's baton, dictating whether your model murmurs or thunders.

Picture the horizon: datasets as living assets, royalties weaving creators into perpetual wealth webs. FineTuneMarket.com doesn't just host transactions; it orchestrates the AI renaissance, where niche mastery births tomorrow's giants. Heed the 200-example clarion, procure wisely, and let your LLMs compose epochs untold.
