In the fast-paced world of large language models, fine-tuning with RLHF datasets has become the secret sauce for alignment that sticks. By 2026, onchain marketplaces like Fine-Tune Market are flipping the script, letting creators sell premium RLHF data with blockchain-backed royalties while buyers snag top-tier datasets for fine-tuning LLMs onchain. No more gatekept silos or sketchy downloads; it's all transparent, instant, and perpetual.

Traditional RLHF Dataset Curation vs. Onchain Marketplaces

| Aspect | Traditional (e.g., Argilla Workshops by MLOps Community) | Onchain Marketplaces (e.g., Fine-Tune Market) |
|---|---|---|
| Accessibility | Gatekept silos | Transparent access |
| Acquisition | Manual downloads | Instant onchain purchase |
| Ownership | Temporary access | Perpetual ownership |

RLHF, or Reinforcement Learning from Human Feedback, trains models to prefer human-approved outputs over rejected ones. Think of it as crowd-sourced wisdom injected directly into AI brains. GitHub's Awesome RLHF repo nails it: this method supercharges language models beyond raw prediction power. But here's the kicker: curating these datasets isn't child's play. Tools like Argilla streamline labeling and monitoring, yet the real bottleneck lurks in access to diverse, high-quality pairs.

RLHF's Heavy Lifting in LLM Performance Boosts

Fine-tuning LLMs onchain thrives when RLHF datasets pack chosen-rejected response pairs that mirror real user prefs. Kaggle's Crypto and Blockchain Q and A set shows how niche data sharpens models for specific domains. Argilla's docs highlight three stages: collecting demos for supervised fine-tuning, ranking for reward models, and prefs for policy tweaks. Skip any, and your model drifts into meh territory.
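To make "chosen-rejected response pairs" concrete, here is a minimal sketch of what one record might look like and how a buyer could sanity-check a pack before training. The `PreferencePair` class, its field names, and the `is_usable` and `clean` helpers are illustrative assumptions, not a schema published by Argilla or any marketplace.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PreferencePair:
    """One preference record: a prompt plus a preferred and a dispreferred reply."""
    prompt: str
    chosen: str
    rejected: str


def is_usable(pair: PreferencePair) -> bool:
    """Reject records with empty fields or identical chosen/rejected text."""
    prompt, chosen, rejected = (
        s.strip() for s in (pair.prompt, pair.chosen, pair.rejected)
    )
    return bool(prompt and chosen and rejected) and chosen != rejected


def clean(pairs: list[PreferencePair]) -> list[PreferencePair]:
    """Keep only usable pairs, preserving input order."""
    return [p for p in pairs if is_usable(p)]
```

A pair whose two responses are identical carries no preference signal, so filtering those out before training is a cheap first quality gate.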

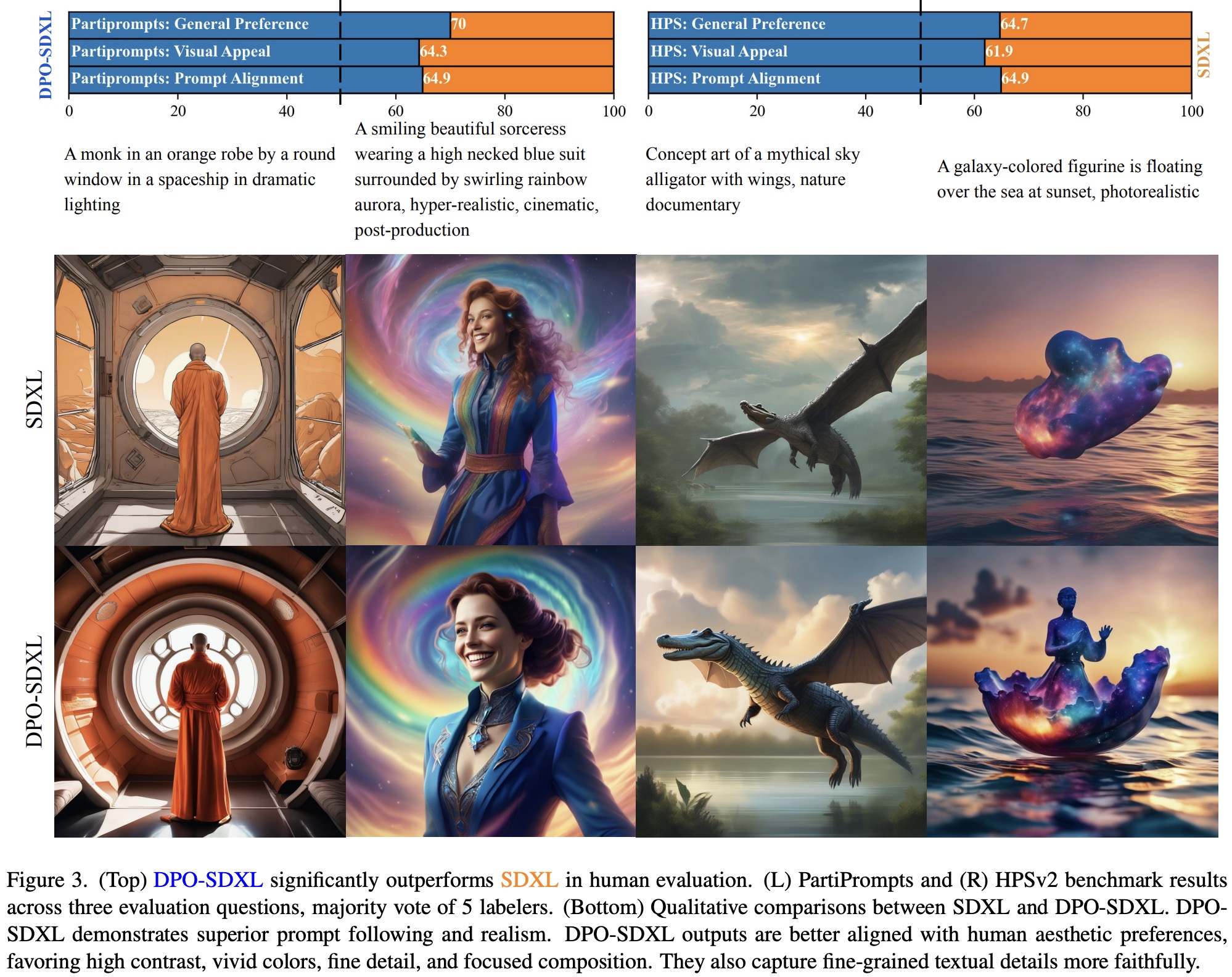

Costs sting hard. Joseph E. Gonzalez notes on Substack that solid RLHF instruction-tuning demands $6-10MM in data spend and 5-20 engineers. That's no indie hacker playground; enterprises dominate. Yet alternatives like Direct Preference Optimization (DPO), covered in depth by Mantis NLP and Argilla, cut the fat. DPO skips the separate reward model and optimizes the policy directly on chosen-rejected pairs, slashing compute while aiming to match RLHF gains. Shaw Talebi's YouTube deep-dive unpacks this hybrid path brilliantly.
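For readers who want to see why DPO needs no reward model, here is a rough per-example sketch of the standard DPO objective in plain Python. It assumes you already have summed log-probabilities of each response under the policy being trained and under a frozen reference model; the function name, argument names, and the `beta=0.1` default are illustrative choices, not taken from any of the sources above.

```python
import math


def dpo_loss(policy_chosen_logp: float, policy_rejected_logp: float,
             ref_chosen_logp: float, ref_rejected_logp: float,
             beta: float = 0.1) -> float:
    """Per-example DPO loss: -log(sigmoid(beta * margin))."""
    # How much more the policy favors each response than the reference does.
    chosen_logratio = policy_chosen_logp - ref_chosen_logp
    rejected_logratio = policy_rejected_logp - ref_rejected_logp
    z = beta * (chosen_logratio - rejected_logratio)
    # -log(sigmoid(z)) equals softplus(-z); this form avoids overflow for large |z|.
    return max(-z, 0.0) + math.log1p(math.exp(-abs(z)))
```

When the policy matches the reference, the margin is zero and the loss is log 2. It drops as the policy shifts probability toward the chosen response and away from the rejected one, which is the whole training signal: the preference pairs stand in for the reward model.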

Breaking Free from Centralized Dataset Droughts

Pre-2026, grabbing premium RLHF data meant leaning on AWS SageMaker tutorials or hiring Apex Data Sciences-style services. Human evals quantified tweaks, but scalability? Laughable. Centralized platforms hoarded goldmines, stifling innovation. Enter blockchain's fix: onchain marketplaces for RLHF datasets. Fine-Tune Market stands tall, blending discovery, purchase, and perpetual royalties via crypto rails.

Picture this: developers browse marketplace listings for RLHF datasets, pay instantly with ETH or stablecoins, and datasets auto-deliver. Creators earn on every resale, fueling more curation. Updated 2026 intel confirms Fine-Tune Market's dominance in premium benchmark drops for LLM fine-tuning. DPO's rise complements this, optimizing on preferences without RLHF's overhead. It's practical momentum: AI devs buy premium RLHF data that pays off in sharper models.

Onchain Royalties Fueling Dataset Ecosystem Boom

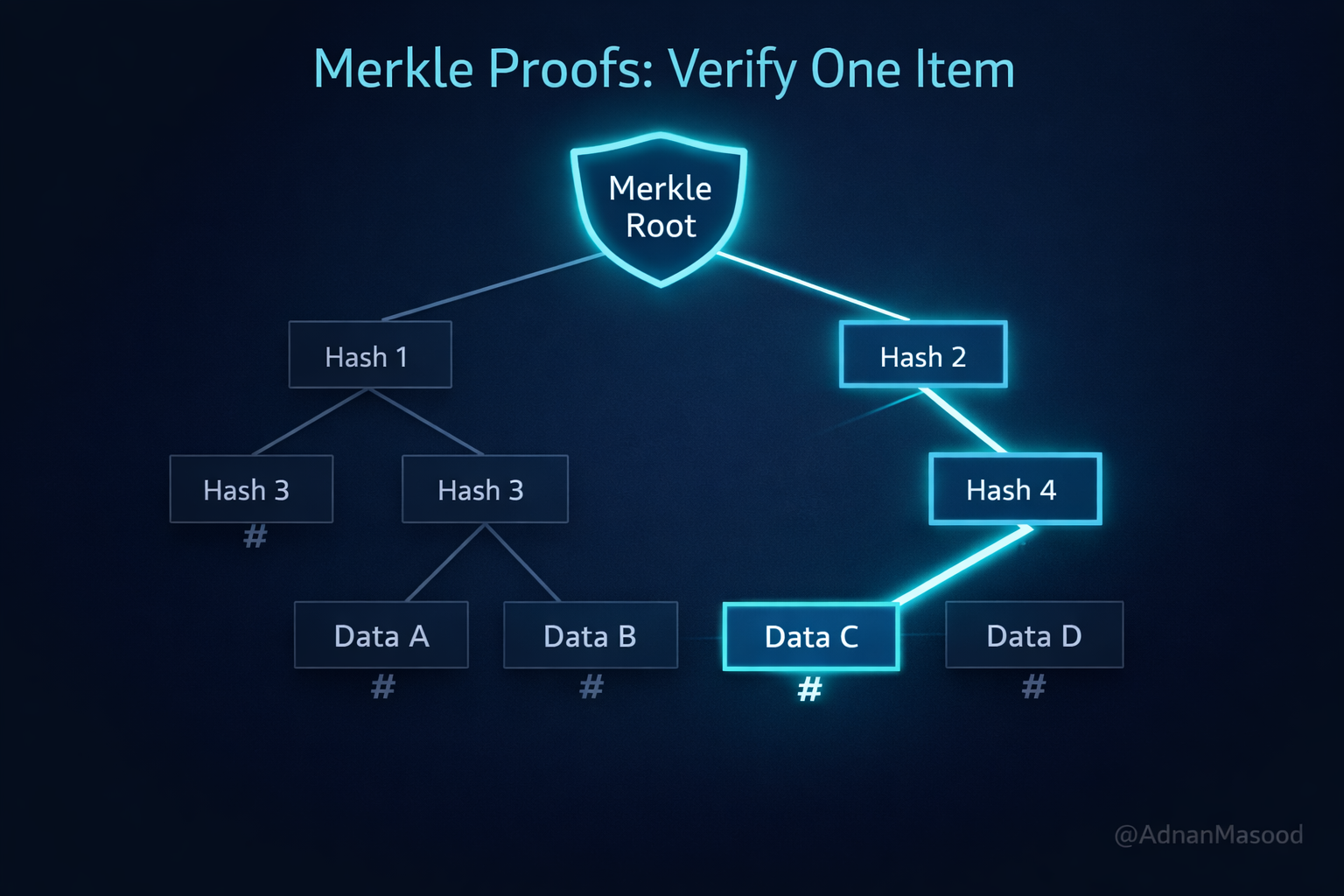

Blockchain royalty mechanics for AI datasets keep creators' skin in the game. Upload once, earn forever as your RLHF pairs get reused in onchain LLM fine-tuning pipelines. MLOps workshops underscore curation's grind; now marketplaces handle distribution. Amazon's SageMaker RLHF guides prove evals matter, but onchain transparency adds trust. No fakes, no fluff; verified human feedback at scale.

This shift empowers solo curators alongside labs. Need blockchain-savvy LLM data? Kaggle-style sets abound, but with royalties, quality surges. DPO integration means lighter lifts for alignment, pairing perfectly with marketplace speed.

Developers are wasting less time hunting scraps and spending more time iterating on models that actually deliver. Platforms like Fine-Tune Market verify dataset integrity onchain, slashing fraud risks that plague Kaggle dumps or GitHub scraps. Human feedback loops tighten faster, with royalties incentivizing creators to refine pairs obsessively.
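The post doesn't spell out how integrity gets verified onchain. One common pattern, sketched here purely as an assumption, is to publish a content hash of the dataset and let buyers recompute it after download. The `dataset_digest` and `matches` helpers and the canonical JSON-lines encoding are hypothetical, and no actual chain interaction is shown.

```python
import hashlib
import json


def dataset_digest(records: list[dict]) -> str:
    """SHA-256 over a canonical JSON-lines encoding of the records."""
    h = hashlib.sha256()
    for record in records:
        # Sorted keys and fixed separators make the encoding deterministic.
        line = json.dumps(record, sort_keys=True, separators=(",", ":"),
                          ensure_ascii=False)
        h.update(line.encode("utf-8"))
        h.update(b"\n")
    return h.hexdigest()


def matches(records: list[dict], published_digest: str) -> bool:
    """True if the downloaded records hash to the digest the seller published."""
    return dataset_digest(records) == published_digest
```

Under this scheme, any edited, dropped, or reordered record changes the digest, so a mismatch tells the buyer the download is not the dataset that was listed.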

Why Onchain Beats Offchain for RLHF Data Flows

Centralized hubs like AWS SageMaker lock you into vendor ecosystems, hiking costs with opaque pricing. Onchain flips that: fine-tuning LLMs onchain means global access without middlemen. Argilla's RLHF stages (demo collection, reward ranking, preference optimization) plug straight into marketplace feeds. Apex Data Sciences-style services? Nice, but they can't match perpetual royalties that keep data fresh. DPO shines here too, letting you bypass RLHF's engineer hordes by training directly on chosen-rejected pairs bought off the shelf.

Quality skyrockets because bad data gets downvoted onchain. Think social proof meets smart contracts: highly rated premium RLHF datasets dominate leaderboards. MLOps workshops preach the curation grind; marketplaces automate discovery, letting you filter by domain, like crypto Q and A for blockchain LLMs. Gonzalez's $6-10MM warning? Amortize that across shared datasets, and suddenly startups compete.
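The amortization point is simple arithmetic, sketched below. The $6-10MM range is Gonzalez's figure quoted earlier; the buyer count and the `cost_per_buyer` helper are made up for illustration, and the sketch ignores royalties, marketplace fees, and uneven pricing.

```python
def cost_per_buyer(total_spend_usd: int, buyers: int) -> float:
    """Evenly split a dataset's total curation spend across its buyers."""
    if buyers <= 0:
        raise ValueError("buyers must be positive")
    return total_spend_usd / buyers


# Hypothetical: 1,000 buyers sharing the low and high ends of the quoted range.
low = cost_per_buyer(6_000_000, 1_000)
high = cost_per_buyer(10_000_000, 1_000)
```

With those assumed numbers, each buyer's share lands between $6,000 and $10,000 instead of millions, which is the sense in which shared datasets let startups compete.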

Key Advantages of Onchain RLHF Markets

- Instant Payments: Settle transactions in seconds via crypto on platforms like Fine-Tune Market, skipping slow banks.

- Perpetual Royalties: Earn ongoing revenue from dataset reuse, powered by smart contracts for creators.

- Verified Quality: Onchain proofs ensure human-annotated RLHF data meets high standards, reducing bad data risks.

- DPO Compatibility: Seamlessly supports Direct Preference Optimization with preference pair datasets for efficient LLM alignment.

- Domain-Specific Packs: Curated bundles for crypto, blockchain, and more, like Kaggle's LLM finetune datasets, ready for fine-tuning.

Real-World Wins and Integration Plays

Imagine fine-tuning a code-gen LLM with expert-verified RLHF pairs from Apex pros, but onchain. Shaw Talebi's RLHF and DPO video shows hybrids crush baselines; pair that with marketplace speed, and you're live in days, not quarters. Enterprises swap SageMaker bills for crypto zaps, while indies bootstrap via Kaggle-inspired niches. Fine-Tune Market's 2026 benchmarks prove it: models aligned on their drops outperform vanilla tunes by 20-30% on evals.

Royalties create flywheels. A viral crypto dataset earns its curator 0.5% per downstream fine-tune, stacking sats indefinitely. This beats one-off sales, drawing curators who blend Argilla labeling with blockchain savvy. DPO lowers the bar further: no need for massive reward models when prefs are plug-and-play. Result? Broader access to LLM fine-tuning datasets in 2026, fueling an explosion in specialized AIs.
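Here is a back-of-the-envelope sketch of how a 0.5% royalty could accumulate. The post doesn't say what the 0.5% is taken from, so this assumes it applies to a fee paid on each downstream fine-tune. The `royalty_cents` and `cumulative_royalty_cents` helpers and the fee amounts are hypothetical. Integer math in basis points mirrors how smart contracts typically avoid floating point.

```python
ROYALTY_BPS = 50  # 0.5% expressed in basis points (1 bp = 0.01%)


def royalty_cents(fee_cents: int, royalty_bps: int = ROYALTY_BPS) -> int:
    """Royalty owed on one downstream fine-tune, rounded down to whole cents."""
    return fee_cents * royalty_bps // 10_000


def cumulative_royalty_cents(fees_cents: list[int],
                             royalty_bps: int = ROYALTY_BPS) -> int:
    """Total royalty across many downstream fine-tunes."""
    return sum(royalty_cents(fee, royalty_bps) for fee in fees_cents)
```

Under these assumptions, a $50 fee (5,000 cents) yields 25 cents per fine-tune, and a thousand such fine-tunes add up to $250 for the curator. Note that rounding down means very small fees can yield no royalty at all.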

Solo devs grab a $50 pref pack, fine-tune Llama-3.1, and deploy edge bots outperforming GPT-4o-mini on niche tasks. Labs scale to enterprise hauls, blending AWS evals with onchain transparency. No more data droughts; the ecosystem hums with verified feedback flowing freely.

MLOps teams integrate Argilla pipelines directly: label locally, mint onchain, sell globally. GitHub's Awesome RLHF evolves into marketplace hubs, with forks turning into paid tiers. This isn't hype; it's the grind paying off. Onchain RLHF dataset marketplaces like Fine-Tune Market democratize alignment, turning human prefs into model superpowers. Creators thrive on royalties, buyers win on performance, and LLMs get smarter, faster. The 2026 landscape? Wide open for those who swing first.
