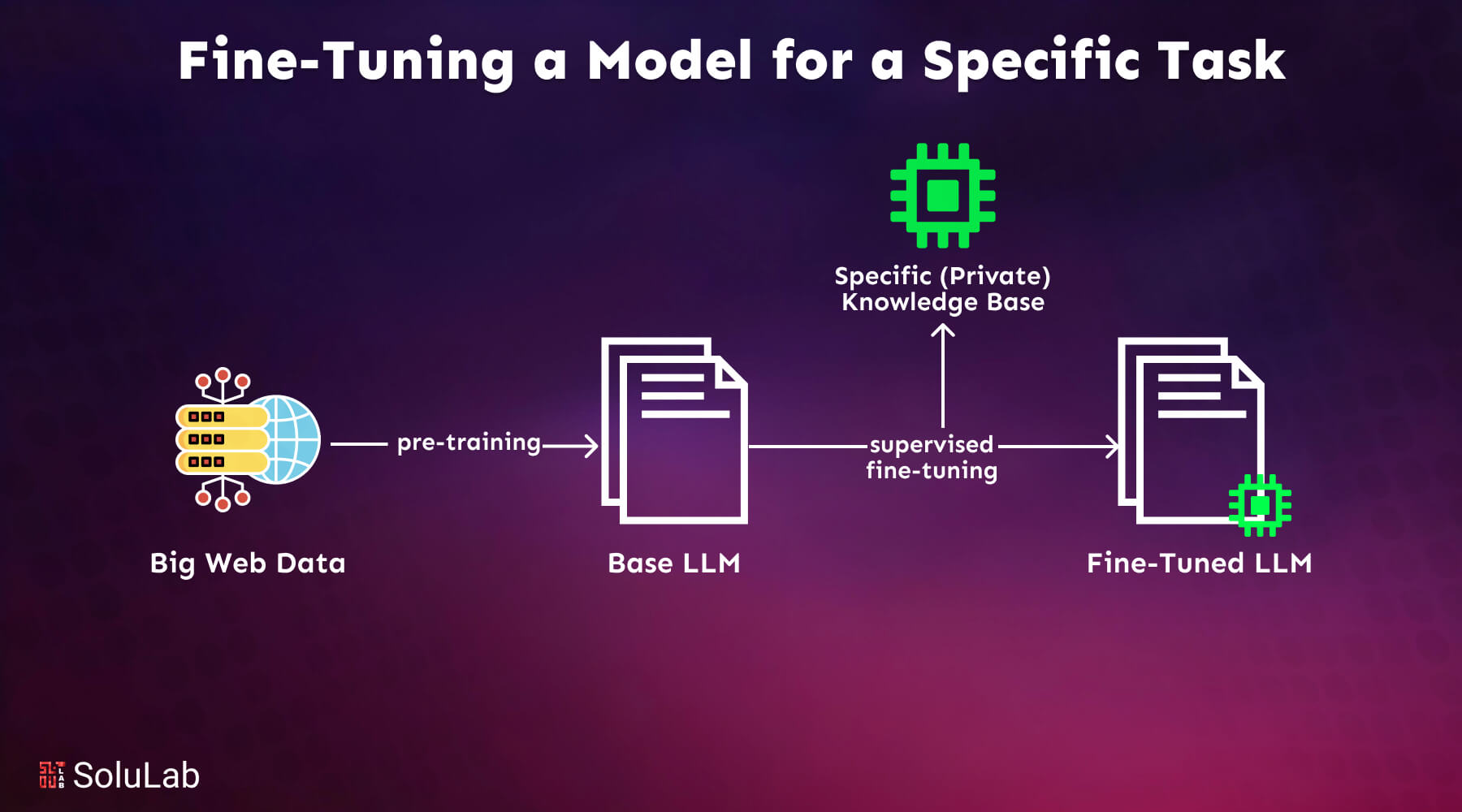

In the competitive arena of large language model development, securing high-quality datasets for fine-tuning remains a persistent bottleneck. Traditional data silos breed opacity, regulatory headaches, and one-off transactions that undervalue creators. Onchain dataset marketplaces flip this script, harnessing blockchain to deliver fine-tuning LLM datasets with built-in perpetual royalties. Creators earn indefinitely as their data fuels model iterations, while buyers tap premium, verifiable sources without the trust deficits of centralized hubs.

This convergence of blockchain and AI isn't mere hype; it's a pragmatic evolution addressing real pain points. Raw onchain data, often messy and unreconciled, demands preprocessing for LLM usability, as noted in best practices from platforms like Allium. so. Yet, when packaged into structured blockchain AI datasets, it unlocks domain-specific fine-tuning that centralized alternatives can't match in transparency or incentives.

Overcoming Data Scarcity in LLM Fine-Tuning

Developers chasing specialized performance in LLMs grapple with scarce, privacy-constrained data. Crypto and blockchain Q and amp;A pairs on Kaggle offer a glimpse-804 meticulously curated examples spanning the ecosystem-yet they pale against the volume needed for production-grade models. Synthetic datasets emerge as saviors, but without provenance, they risk model drift or compliance pitfalls.

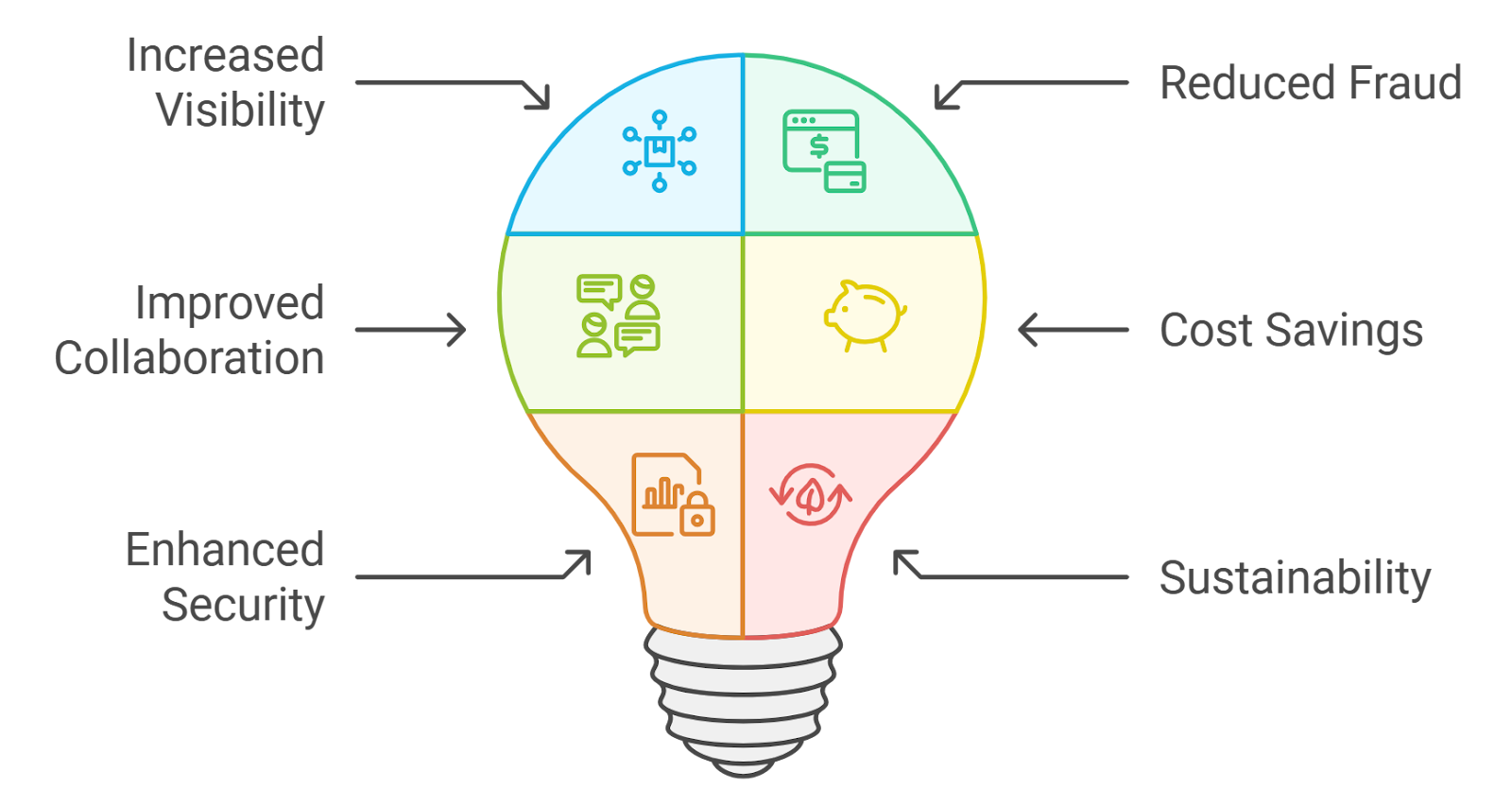

Key Onchain Dataset Benefits

- Transparency via Blockchain: Ensures immutable records of data usage, contributions, and provenance, as seen in platforms like onChainAI.

- Perpetual Royalties for Creators: Smart contracts enable ongoing compensation for dataset providers, exemplified by emerging marketplaces.

- Privacy-Preserving Synthetic Data: High-fidelity synthetic datasets from platforms like DataXID protect privacy while supporting LLM fine-tuning.

- Seamless Onchain Payments: Automated cryptocurrency transactions via smart contracts eliminate intermediaries for efficient data exchanges.

From a risk management standpoint, I've long advised institutions to prioritize verifiable assets over opaque promises. Onchain marketplaces enforce this through immutable ledgers, where every dataset upload triggers smart contract royalties. Synthik on GitHub exemplifies this, revolutionizing synthetic data sharing with full provenance, ensuring datasets aren't just usable but auditably robust.

Spotlight on Pioneering Platforms

Emerging leaders are already reshaping the landscape. DataXID stands out by generating high-fidelity synthetic datasets tailored for LLM domain adaptation, sidestepping privacy compliance and cost barriers. OmniLytics takes it further with a blockchain-secured marketplace for decentralized machine learning, letting owners contribute private data to collective training while thwarting malicious interference.

onChainAI pushes boundaries by embedding LLMs directly on blockchain, delivering censorship-resistant, verifiable inference sans oracles. FEDSTR leverages the NOSTR protocol for federated learning marketplaces, pairing dataset providers with trainers in a fair, open exchange. OpenDataBay rounds out the field as an AI data hub, streamlining access to categories like financial and medical fine-tuning datasets.

These aren't theoretical constructs; they're battle-tested frameworks. ChainScore Labs' guide on private data marketplaces underscores the blueprint: encryption, smart contracts, and computation proofs create tamper-proof ecosystems. In my two decades assessing derivatives risks, I've seen how misaligned incentives erode value-here, perpetual royalties datasets align them indelibly.

Smart Contracts Powering Perpetual Royalties

The crown jewel of these onchain dataset marketplaces is perpetual royalties via smart contracts. Unlike fleeting licenses, royalties accrue on every downstream use-purchase, fine-tune, or derivative model. This incentivizes premium contributions, fostering a virtuous cycle where creators invest in quality knowing returns compound.

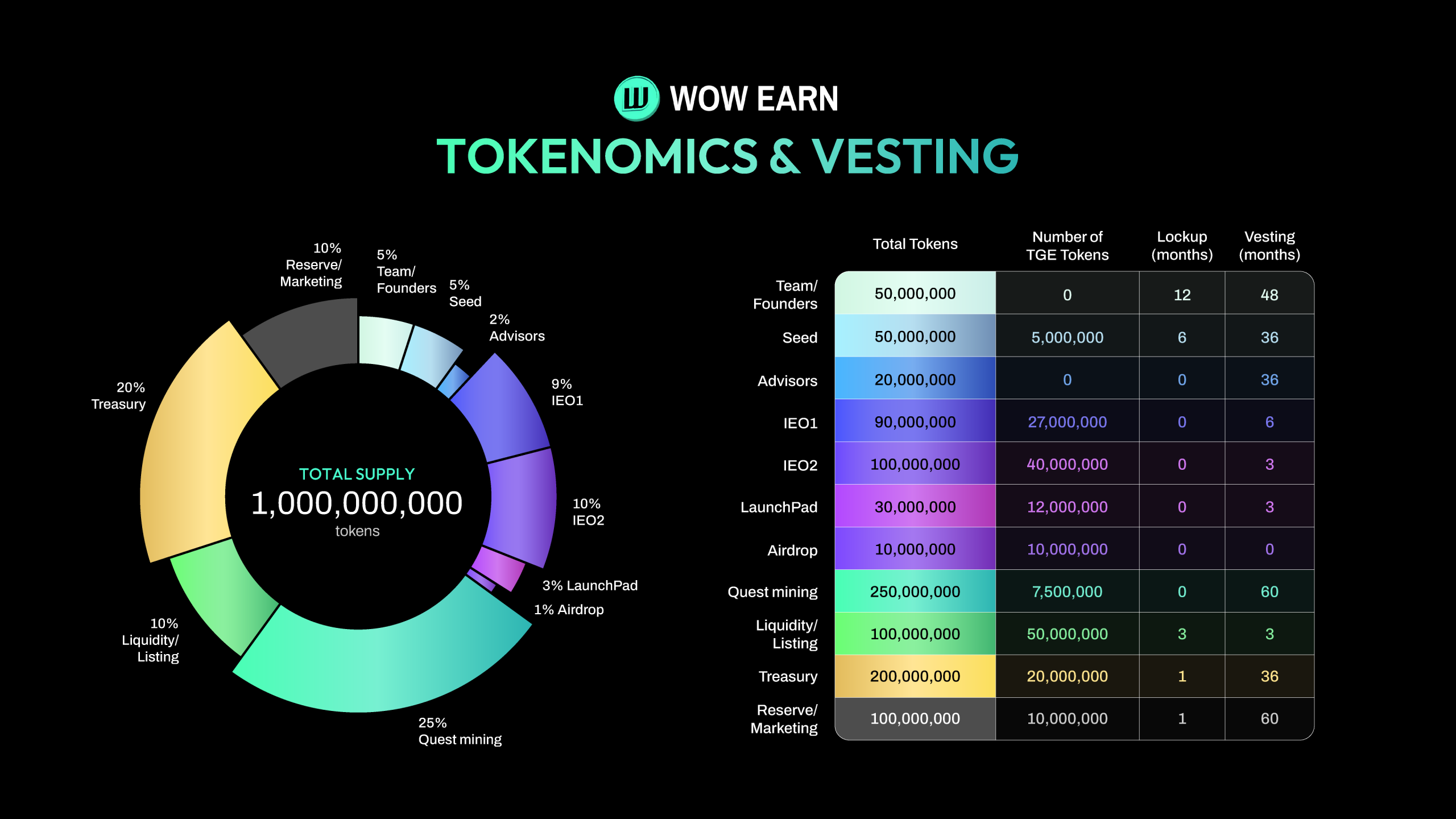

Consider the mechanics: A dataset uploaded to OpenDataBay triggers an NFT-like token with embedded royalty splits. Blockchain immutability ensures payments flow instantly upon usage verification, audited onchain. Risks persist-model poisoning or oracle failures-but platforms like FEDSTR mitigate via decentralized protocols. As a certified risk manager, I caution: vet platform tokenomics rigorously; unsustainable yields signal fragility. Yet, the upside is compelling; premium fine-tune data onchain democratizes elite training resources, bolstering model resilience across enterprises and independents alike.

Scalability tests these systems. High-volume fine-tuning demands datasets that don't buckle under load, yet blockchain's gas fees can erode margins for micro-transactions. Platforms counter with layer-2 solutions or optimized contracts, but I've seen enough protocol upgrades falter to advise thorough stress testing before committing capital. Synthik's decentralized synthetic data approach shines here, embedding provenance that flags quality issues early, turning potential liabilities into strengths.

Comparative Edge Over Traditional Marketplaces

Stack these against Hugging Face or Kaggle hubs, and the onchain dataset marketplace model reveals stark advantages. Legacy platforms rely on centralized curation, prone to takedowns or paywalls without residuals for originators. Blockchain flips this: perpetual royalties datasets ensure creators capture value indefinitely, while buyers gain tamper-evident quality. Take the Kaggle crypto Q and A set-804 pairs are solid starters, but onchain versions scale with community contributions, royalties fueling expansions.

Comparison of Key Onchain Dataset Marketplaces for LLM Fine-Tuning

| Platform | Key Features | Privacy Focus | Decentralization | Royalty Mechanism |

|---|---|---|---|---|

| DataXID | Synthetic datasets for LLM fine-tuning & domain adaptation | High-fidelity privacy-safe datasets | Blockchain technology | Perpetual royalties via smart contracts |

| OmniLytics | Secure data marketplace for decentralized ML | Private data contributions, secure against malice | Blockchain-based decentralized ML | Automated perpetual compensation via smart contracts |

| onChainAI | Decentralized LLMs & verifiable inference | On-chain transparency & verifiable outputs | On-chain smart contracts, censorship-resistant | Verifiable inference royalties, perpetual |

| FEDSTR | Federated learning & LLM training marketplace | Privacy-preserving federated learning | NOSTR federated protocol, open marketplace | Fair splits & perpetual royalties |

| OpenDataBay | Multi-category hub for AI/LLM datasets | Secure & streamlined data exchange | Decentralized protocols | Perpetual royalties for data providers |

This table underscores tactical differentiation. From my vantage in derivatives risk, where mispriced options wipe portfolios, I value these mechanics. No single platform dominates yet; diversify across them to hedge platform-specific risks like token depegs or governance votes gone awry.

Navigating Adoption Barriers

Regulatory shadows loom large. Datasets touching medical or financial realms, as in OpenDataBay's offerings, invite scrutiny under GDPR or SEC lenses. Blockchain's pseudonymity helps, but know-your-data protocols are essential. I've counseled firms through Basel stress tests; apply similar rigor here-audit trails via onchain proofs separate viable plays from vaporware.

Essential Onchain Data Checklist

- Assess dataset provenance and benchmarks: Trace data origins and review performance metrics on marketplaces such as OpenDataBay and Synthik, confirming blockchain-verified quality for LLM fine-tuning.

- Confirm royalty distribution transparency: Inspect smart contract mechanisms for perpetual royalties on platforms like FEDSTR, ensuring automated, verifiable payouts to data providers.

- Test integration with your LLM pipeline: Validate compatibility and run trials with datasets from OmniLytics, checking seamless onchain data flow into your training workflow.

- Monitor platform tokenomics for sustainability: Analyze token utility, incentives, and economics of marketplaces like onChainAI to assess long-term viability and avoid unsustainable models.

Best practices from Allium. so on blockchain data prep align seamlessly: reconcile raw onchain feeds into LLM-ready formats before marketplace ingestion. This preprocessing elevates fine-tuning LLM datasets, minimizing hallucinations in crypto or DeFi models. DePIN innovations, anchoring datasets onchain, add timestamps that bolster auditability, a nod to the LinkedIn insights on model offerings.

Looking ahead, convergence accelerates. Web3 AI reports from Onchain Magazine highlight projects blending inference with data markets, portending full-stack ecosystems. SettleMint's blockchain-AI use cases across industries suggest broader applications, from secure supply chains to autonomous agents. Yet caution tempers enthusiasm: as with early crypto winters, overhype precedes corrections. Prioritize platforms with proven throughput and deflationary token models to safeguard against downturns.

Ultimately, blockchain AI datasets with perpetual royalties redefine incentives, propelling LLMs toward robust, specialized prowess. Creators thrive on sustained yields, developers access vetted assets frictionlessly, and the ecosystem matures through aligned interests. In an era of fleeting AI gains, this onchain foundation promises enduring value-protect your models' edge by engaging thoughtfully today.

No comments yet. Be the first to share your thoughts!